AI in Indigenous Communities: Challenges and Solutions

Artificial intelligence (AI) has the potential to support Indigenous communities in areas like language preservation, healthcare, and resource management. However, significant barriers exist, including data biases, lack of infrastructure, and risks to sovereignty. Without Indigenous leadership, AI can perpetuate "digital colonialism", where data is used without consent, eroding self-determination.

Key Takeaways:

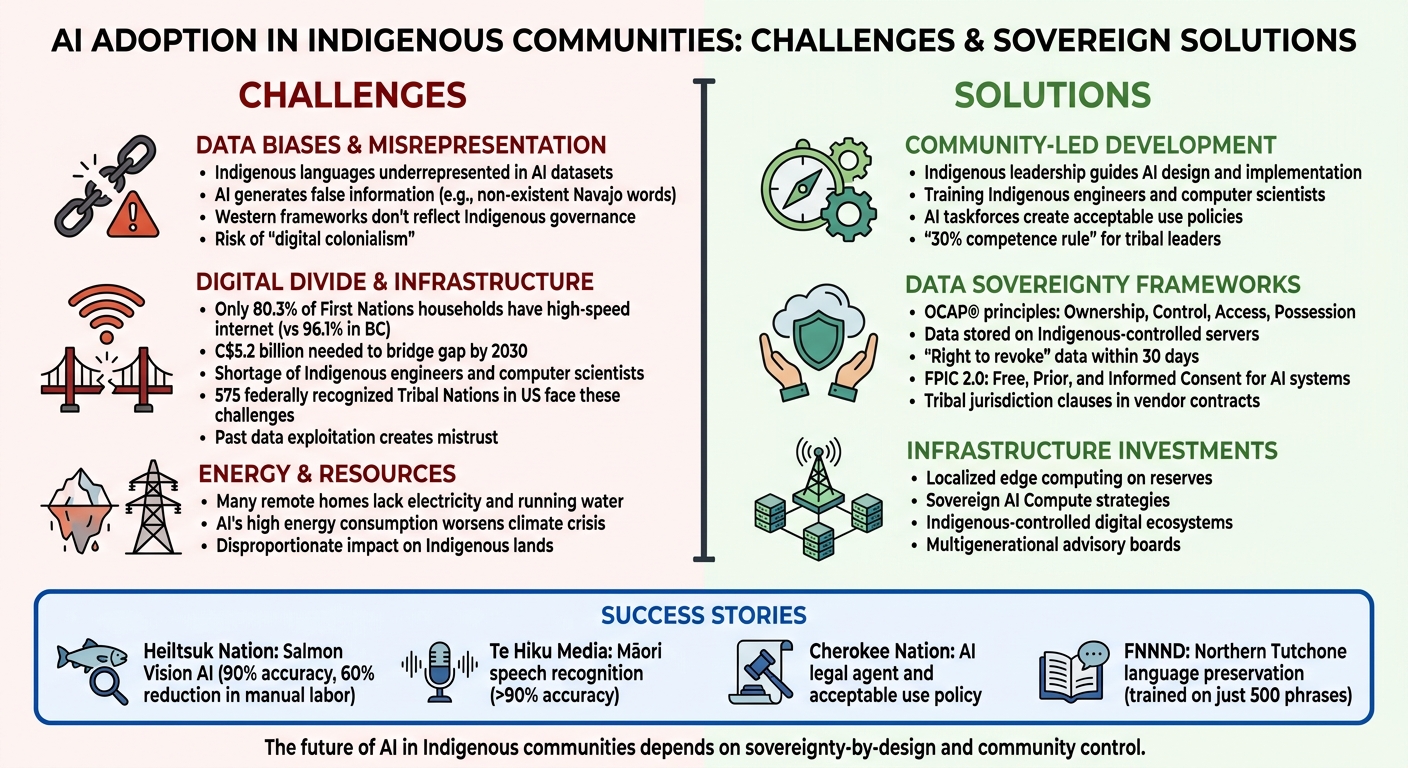

- Challenges: Limited representation of Indigenous languages in AI, digital divides, and risks of data misuse.

- Solutions: Community-led AI development, data sovereignty frameworks (e.g., OCAP® principles), and investments in localized infrastructure.

- Success Stories: The Heiltsuk Nation’s salmon management AI and Māori-led speech recognition models demonstrate the importance of Indigenous control.

AI’s future in Indigenous communities depends on respecting sovereignty, ensuring ethical data use, and prioritizing local leadership in technology development.

Challenges vs Solutions for AI Adoption in Indigenous Communities



Challenges in AI Adoption for Indigenous Communities

Indigenous communities face a range of obstacles when it comes to adopting AI technologies. These challenges go far beyond technical hurdles – they touch on sovereignty, representation, and the risk of repeating historical injustices through digital means.

Data Biases and Misrepresentation

AI systems often rely on datasets that overwhelmingly favour dominant, Western languages, leaving Indigenous languages underrepresented. A clear example occurred in February 2026, when a fluent Navajo speaker tested ChatGPT and found it generated non-existent words and incorrect pronunciations in Navajo. Similarly, an AI-generated book featuring inaccuracies in Ojibwe verbs was sold on Amazon. Laurie McLeod-Shabogesic, the Anishinaabemowin Coordinator for the Anishinabek Nation, highlighted the harm caused by such errors:

"Inaccurate resources such as [the AI-generated book] not only hurt learners who later have to unlearn this misinformation, but the resources also compromise the long-term integrity of our languages".

When AI models don’t have enough training data, they can produce false or misleading information, which can harm cultural preservation efforts.

Natiea Vinson, CEO of the First Nations Technology Council, described the broader issue:

"AI systems are built on Western frameworks that do not necessarily reflect or serve Indigenous community needs. These systems continue the erasure of Indigenous knowledge, values, and governance models".

The problem extends beyond language. Many AI systems fail to consider Indigenous governance structures, like consensus-based decision-making or clan systems, instead imposing hierarchical norms. This approach not only undermines cultural identity but also contributes to "digital colonialism", where Indigenous data is extracted to train commercial algorithms without consent or benefit to the community.

Digital Divide and Infrastructure Gaps

Technical limitations compound the challenges. Many Indigenous communities lack the infrastructure needed for full participation in AI development. For instance, only 80.3% of First Nations households on reserves and Modern Treaty Nation lands have access to high-speed internet, compared to 96.1% in British Columbia. Bridging this gap by 2030 is estimated to require a C$5.2 billion investment.

The lack of Indigenous engineers and computer scientists further complicates efforts to create AI systems tailored to community needs. Michael Running Wolf, co-founder of Lakota AI Code Camp, underscored the importance of building local expertise:

"We need to have our own engineers. We need to have our own computer scientists using the software… We need to have sovereignty over our own data".

Digital sovereignty is also hindered by the absence of Tribal authority over physical infrastructure, such as communication networks and wireless spectrum, within their territories. While public AI models are accessible, they pose risks of exposing sensitive cultural data. Developing secure, proprietary AI models to protect this data requires significant investment and technical expertise. For the 575 federally recognised Tribal Nations in the United States alone, addressing these issues poses a substantial challenge.

Past exploitation of Indigenous data adds another layer of mistrust. For example, the Standing Rock Sioux Tribe faced a legal dispute with the Lakota Language Consortium over language materials recorded from community elders. Similarly, the Havasupai Tribe discovered that blood samples collected for diabetes research were later used for unrelated genetic studies without consent. These breaches of trust fuel hesitation around AI partnerships.

In addition to connectivity and skills shortages, energy and resource limitations further complicate AI adoption in Indigenous communities.

Energy and Resource Concerns

The energy demands of AI technologies present another hurdle. Many remote Indigenous homes still lack basic utilities like electricity and running water, making it difficult to support the infrastructure required for AI deployment. Moreover, AI’s high energy consumption contributes to the climate crisis, which disproportionately affects Indigenous lands and traditional ways of life.

However, AI also holds promise here. In February 2026, for example, Yakama Nation communities benefited from IrrigOpt AI, a platform that provides hyperlocal irrigation guidance and helped farmers cut costs, reduce water waste, and cope with drought conditions.

Localised infrastructure is essential to limiting AI’s environmental impact. Moving AI processing to edge computing centres located on reserves could reduce reliance on large, energy-intensive data centres while keeping data under community control. Indigenous leaders are also advocating for national "Sovereign AI Compute" strategies that set aside resources specifically for Indigenous governments, supporting sustainable, localised computing capacity.

As Natiea Vinson succinctly put it:

"Indigenous Peoples recognize AI’s potential – from language and cultural revitalization to streamlined business operations – but face distinct, systemic barriers to accessing and benefiting from these tools".

These interconnected challenges highlight the importance of community-driven solutions, which could unlock meaningful applications of AI for Indigenous communities.

AI Applications in Indigenous Contexts

AI has shown potential to make a meaningful impact when developed in partnership with Indigenous communities. The difference lies in ensuring these technologies remain under the control of the communities they are intended to serve.

Language Preservation and Teaching

AI is proving to be a valuable tool for documenting and teaching Indigenous languages, especially in communities with few fluent speakers. For example, in September 2025, the First Nation of Na-Cho Nyäk Dun (FNNND) collaborated with Carleton University and the Office of the Commissioner of Indigenous Languages to launch the "Kwän Dék’án’ Do" (To Keep The Fire Burning) initiative. This project uses AI to create a digital model of the Northern Tutchone language, supporting vocabulary development and AI-driven conversations. It also employs 3D scanning to build a unified digital archive. Teresa Samson, Manager of Heritage and Culture at FNNND, highlighted the urgency of this work:

"Our Northern Tutchone language has not died, but with less than a dozen language holders left with us, the embers of our language require tending".

One challenge in building AI for language preservation is the volume of data such systems typically require. English speech recognition systems may be trained on 50,000 hours of audio, yet researchers on this project trained their model on just 500 community-defined phrases, tailoring it closely to local needs.

AI, however, is seen as a tool, not a replacement for human connection. Michael Running Wolf, co-founder of Lakota AI Code Camp, emphasized:

"It’s just going to be like a pencil. It’s useful but it’s not going to save our language".

To work around infrastructure gaps, portable, offline AI devices have been developed. They support voice-based language learning in remote areas without internet access while keeping data under community control. Because these "closed-system" tools draw only on vetted material, they help keep outputs accurate and culturally appropriate, avoiding problems such as fabricated words or inappropriate content.

Healthcare and Elder Support

AI is also making strides in healthcare, addressing critical needs in Indigenous communities. From improving administrative processes to enhancing diagnostic tools, AI is helping to tackle long-standing challenges. Kennedy Satterfield of the American Indian Policy Institute noted that AI can simplify administration, expand access to services, and reduce costs through predictive analytics and automation.

For elders, deep learning systems are being used to predict falls, promoting safety and independence. AI diagnostic tools are also being developed for conditions like ear diseases and diabetic retinopathy, which are prevalent in many Indigenous communities.

Privacy-preserving methods such as federated learning are making it possible to include Indigenous data in genomics research without compromising data sovereignty. Instead of pooling raw records in one place, each data holder trains a shared model locally and sends back only model updates, so the underlying records stay where they are. This supports more precise healthcare for Indigenous patients while respecting their control over their data. Even so, these tools must be designed through a sovereignty lens so that they align with cultural values and practices.
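Federated averaging is one common federated learning algorithm, and it can illustrate the idea. The sketch below is a toy. It assumes a one-parameter model (y = w * x) and two hypothetical data holders with made-up numbers. Each site trains on its own records and shares only its updated weight. A coordinator then averages those weights, weighted by record count. Real genomics work uses far larger models plus safeguards such as secure aggregation, and this sketch omits them.

```python
from dataclasses import dataclass


@dataclass
class Site:
    """A hypothetical data holder. Its raw records stay inside this object."""
    name: str
    xs: list[float]
    ys: list[float]


def local_update(w: float, site: Site, lr: float = 0.05, epochs: int = 10) -> float:
    """Gradient descent on the site's own data for the toy model y = w * x."""
    n = len(site.xs)
    for _ in range(epochs):
        # Gradient of mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in zip(site.xs, site.ys)) / n
        w -= lr * grad
    return w


def federated_average(sites: list[Site], rounds: int = 10) -> float:
    """Each round, sites train locally and share only their updated weight."""
    w = 0.0
    total = sum(len(s.xs) for s in sites)
    for _ in range(rounds):
        # Only the scalar weight leaves each site, never xs or ys.
        local_weights = [(local_update(w, s), len(s.xs)) for s in sites]
        # Average the shared weights, weighted by each site's record count.
        w = sum(lw * n for lw, n in local_weights) / total
    return w


sites = [
    Site("community_a", [1.0, 2.0, 3.0], [2.0, 4.0, 6.0]),
    Site("community_b", [1.0, 2.0], [2.0, 4.0]),
]
print(round(federated_average(sites), 3))  # 2.0
```

Only `w` crosses site boundaries. The `xs` and `ys` lists are read only inside `local_update`, which in a real deployment would run on each data holder's own infrastructure.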

Al Kuslikis of the American Indian Higher Education Consortium summed up the vision for Indigenous AI:

"Indigenous AI [is] a form of Regenerative AI that must, by definition, be locally driven. Tribal communities are challenged to develop their own roadmap for designing and deploying AI systems that facilitate a local culturally framed knowledge system".

This approach also extends to environmental care and land management.

Resource and Land Management

AI is helping Indigenous communities manage their lands and resources in ways that respect traditional knowledge. In October 2023, for example, the Heiltsuk Nation partnered with the Wild Salmon Center and Simon Fraser University to launch the "Salmon Vision" AI tool on the Koeye River in British Columbia. The project, led by William Housty and scientist Will Atlas, uses deep learning to identify salmon species with 90% accuracy.

The system provides real-time data, helping the Heiltsuk Nation manage sockeye stocks more effectively. It has also reduced the manual labour needed for video review by 60%. Housty explained:

"It’s really an extension of title and rights over the ability to manage salmon systems and allows us to develop management plans that are based on real data and with minimal error".

Trained on over 500,000 video frames, the AI can recognize and count 12 fish species found in the Pacific Northwest. Because it automates routine counting, field technicians can focus on higher-priority conservation projects.

Similarly, the Wororra people in Australia’s Kimberley region have used generative AI to process decades of archival materials. Led by Traditional Owner Francis Woolagoodja and researcher Elizabeth Vaughan, this project deciphers difficult handwriting from 1930s field notebooks and cross-references genealogies. What once took months of manual work now takes hours, creating a verified dataset for land governance and native title heritage records. Woolagoodja and Vaughan remarked:

"The intent is not to replace oral tradition but to give communities a way to interact with their heritage through dialogue using AI. That’s closer to how this knowledge was always meant to be used than any library shelf".

These examples illustrate how AI, when guided by Indigenous leadership, can complement traditional stewardship practices. From revitalizing languages to improving healthcare and managing resources, AI tools tailored to community needs empower Indigenous groups to shape their own digital futures.

Solutions and Best Practices for Ethical AI Adoption

When it comes to adopting AI within Indigenous communities, the focus must remain on approaches that respect sovereignty, uphold cultural values, and place control firmly in the hands of the communities themselves. Here are some strategies that have shown success in ensuring AI serves Indigenous interests rather than exploiting them.

Community-Led AI Development

AI initiatives must be guided by Indigenous leadership. The Cherokee Nation of Oklahoma offers an example. In September 2025, it formed an AI taskforce to create an "AI Acceptable Use Policy" built on three core principles (resourcefulness, protection, and outreach). Its 59-member IT team has also developed an AI legal agent to streamline legal resources.

Natiea Vinson, CEO of the First Nations Technology Council, put it best:

"AI has the potential to support Nation-building, governance, language preservation, and economic development, but only when First Nations leadership guides its design and implementation".

Another example comes from the Morongo Band of Mission Indians, which introduced a "30% competence rule" in 2025. This rule requires tribal leaders to meet a baseline level of technical knowledge before overseeing AI systems. Similarly, Michael Running Wolf, co-founder of the Lakota AI Code Camp, has emphasized the importance of training Indigenous engineers and computer scientists to design and manage systems locally:

"We have to have our own engineers. We need to have our own computer scientists using the software… We need to have sovereignty over our own data".

In August 2024, Running Wolf launched a summer program that teaches Indigenous students to build apps integrating Indigenous knowledge. Initiatives like these show why community-driven development is the foundation of ethical AI use.

Data Sovereignty and Ethical Frameworks

At the heart of ethical AI adoption lies data sovereignty. Before engaging with AI vendors, communities must establish clear guidelines about data ownership and access. The OCAP® principles – Ownership, Control, Access, and Possession – are a cornerstone for ensuring data remains a collective community resource rather than falling into individual or corporate hands.

One notable example is Heritage Lab’s Data Sovereignty Policy, introduced in February 2026. This policy mandates that all data be stored on Indigenous-controlled servers in Kahnawà:ke, Québec, through a partnership with Mohawk Internet Technologies. It also includes a "right to revoke", allowing for the permanent deletion of community data within 30 days upon request.
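Honouring a "right to revoke" clause means tracking when each deletion request arrived. It also means tracking whether deletion finished inside the agreed window. The following is a minimal sketch. It assumes a hypothetical `RevocationRequest` record and the 30-day window described above. It is an illustration, not Heritage Lab's actual system.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# The 30-day deletion window described in the policy.
DELETION_WINDOW = timedelta(days=30)


@dataclass
class RevocationRequest:
    """Hypothetical record of a community's request to permanently delete its data."""
    community: str
    received: date
    completed: Optional[date] = None

    @property
    def deadline(self) -> date:
        return self.received + DELETION_WINDOW

    def status(self, today: date) -> str:
        """Report whether deletion met the window, is still within it, or is overdue."""
        if self.completed is not None:
            return "completed on time" if self.completed <= self.deadline else "completed late"
        return "overdue" if today > self.deadline else "pending"


request = RevocationRequest("example_community", received=date(2026, 3, 1))
print(request.deadline)                  # 2026-03-31
print(request.status(date(2026, 4, 2)))  # overdue
```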

Contracts with AI vendors must also protect tribal jurisdiction over systems impacting the community. Additionally, sacred or ceremonial data should be excluded from general AI model training through enforceable agreements that include audit rights.
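Contract language is easier to enforce and audit when the data pipeline itself refuses restricted material. The following is a minimal sketch. It assumes every record carries a community-assigned `category` label; the `Record` type and category names are hypothetical. Restricted or unlabelled records are held back. Their IDs are returned so an auditor can confirm what was excluded.

```python
from dataclasses import dataclass

# Categories the community has barred from any model training (illustrative labels).
EXCLUDED_FROM_TRAINING = {"sacred", "ceremonial"}


@dataclass(frozen=True)
class Record:
    record_id: str
    category: str  # assigned by the community during its data inventory
    text: str


def split_for_training(records: list[Record]) -> tuple[list[Record], list[str]]:
    """Return the records a vendor may train on, plus excluded IDs for the audit trail."""
    eligible: list[Record] = []
    excluded_ids: list[str] = []
    for rec in records:
        # Unlabelled records are excluded as well: the default is "not permitted".
        if not rec.category or rec.category.lower() in EXCLUDED_FROM_TRAINING:
            excluded_ids.append(rec.record_id)
        else:
            eligible.append(rec)
    return eligible, excluded_ids


records = [
    Record("r1", "place_names", "..."),
    Record("r2", "Ceremonial", "..."),
    Record("r3", "", "..."),
]
eligible, excluded = split_for_training(records)
print([r.record_id for r in eligible], excluded)  # ['r1'] ['r2', 'r3']
```

Treating unlabelled records as excluded means a gap in labelling fails safe instead of leaking material into a training set.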

Another emerging practice is extending Free, Prior, and Informed Consent (FPIC) to AI systems, a concept known as "FPIC 2.0." This approach involves elders, language experts, and youth councils in decisions about AI systems used in health, education, or social services. Doing so helps those systems align with community values and respect cultural protocols.
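Where deployment of an AI system is managed in software, FPIC 2.0 can be reflected as a deployment gate. Nothing goes live until each required group has explicitly consented, and silence counts as no. The following is a minimal sketch. It assumes the three groups listed above and a hypothetical `ConsentDecision` type. A real process would also record who decided, when, and on what information.

```python
from enum import Enum

# The groups named in the FPIC 2.0 description; each community sets its own list.
REQUIRED_GROUPS = ("elders", "language_experts", "youth_council")


class ConsentDecision(Enum):
    GRANTED = "granted"
    WITHHELD = "withheld"


def can_deploy(decisions: dict[str, ConsentDecision]) -> tuple[bool, list[str]]:
    """Deployment proceeds only if every required group has explicitly granted consent.

    A missing decision is treated the same as a refusal. Returns the outcome and
    the groups that are blocking it.
    """
    blocking = [
        group for group in REQUIRED_GROUPS
        if decisions.get(group) is not ConsentDecision.GRANTED
    ]
    return (not blocking, blocking)


print(can_deploy({
    "elders": ConsentDecision.GRANTED,
    "language_experts": ConsentDecision.GRANTED,
}))  # (False, ['youth_council'])
```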

Te Hiku Media, a Māori-led organisation in New Zealand, has shown how effective this can be. By maintaining control over design and data while partnering with NVIDIA, they developed a te reo Māori speech recognition model with over 90% accuracy – all while ensuring the data remained under Māori ownership. These efforts not only protect data but also actively resist digital colonialism by securing Indigenous oversight at every stage.

Infrastructure Investments and Capacity Building

To sustain ethical AI adoption, investments in infrastructure and capacity are equally important. Closing the digital divide requires not only better physical infrastructure but also a focus on building expertise. Tribal digital sovereignty goes beyond hardware – it includes control over digital ecosystems, wireless spectrum, and software.

Communities should prioritize Indigenous-controlled infrastructure, such as storing data on servers located within their territories. This reduces exposure to external legal systems and to the risk of data changing hands through corporate takeovers.

Capacity building, meanwhile, must go beyond basic training. The Cherokee Nation, for instance, has demonstrated the importance of dedicated AI champions – staff members who act as long-term stewards and accountability anchors for AI initiatives. Multigenerational advisory boards, including experts in health care, education, and cultural preservation, can also play a critical role in ensuring AI aligns with community priorities.

| Governance Domain | Key Action | Implementation Example |
|---|---|---|
| Data Governance | Inventory and classify data by sensitivity | Separate sacred/ceremonial data from other records |
| Vendor Relations | Include tribal law clauses in contracts | Require vendors to disclose data training practices |
| Community Impact | Engage elders and youth councils | Implement FPIC 2.0 before deploying systems |
| Infrastructure | Store data on Indigenous-controlled servers | Partner with Indigenous technology providers |
| Workforce | Designate an Internal AI Champion | Fund professional development for key staff |

For workflows involving sensitive data, such as health or membership records, communities should consider rule-based automation instead of generative AI. Deterministic systems, which operate on explicit rules defined by the community, can help avoid risks like data leaks or AI "hallucinations".

These strategies highlight how ethical AI adoption is possible when Indigenous communities maintain control over technology, data, and decision-making processes. By doing so, they ensure AI serves as a tool for empowerment rather than exploitation.

Role of Custom Software in Addressing Challenges

Custom software plays a vital role in empowering Indigenous communities to achieve digital self-determination. Instead of forcing communities to conform to rigid, pre-existing templates, these systems are designed to reflect their unique governance structures and data protection needs. The real power of custom solutions lies in who controls the design, where the data is stored, and whether the technology aligns with community priorities rather than external interests. These tailored systems build on the ethical AI practices and data sovereignty strategies discussed earlier.

Custom AI Solutions for Indigenous Communities

For tasks involving sensitive data, such as membership records, health claims, or education funding, rule-based automation is often the safer choice. Generative AI can produce unpredictable results. These systems instead operate on clearly defined, deterministic rules set by the community. The same inputs always produce the same outputs, which removes the risk of "hallucinations". Because the rules do not learn from the records they process, community data is never absorbed into a model.
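Here is what "clearly defined, deterministic rules" can look like in code. Each rule is a named, readable condition. The result lists exactly which rules failed, so the same application always gets the same answer and staff can see why. The following is a minimal sketch. The rule names, fields, and age threshold are hypothetical placeholders, not any Nation's actual membership code.

```python
from typing import Any, Callable

Application = dict[str, Any]
# Each rule is a readable name plus a yes/no check on the application.
Rule = tuple[str, Callable[[Application], bool]]

# Hypothetical rules for illustration; a real membership code is written by the community.
RULES: list[Rule] = [
    ("consent_form_signed", lambda a: a.get("consent_signed") is True),
    ("lineage_documented", lambda a: bool(a.get("lineage_records"))),
    ("adult_or_guardian_signed",
     lambda a: a.get("age", 0) >= 18 or a.get("guardian_signed") is True),
]


def evaluate(application: Application, rules: list[Rule] = RULES) -> tuple[bool, list[str]]:
    """Check every rule in a fixed order. No randomness and no learning from inputs."""
    failed = [name for name, check in rules if not check(application)]
    return (not failed, failed)


app = {"consent_signed": True, "lineage_records": ["doc-1"], "age": 17}
print(evaluate(app))  # (False, ['adult_or_guardian_signed'])
```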

Language preservation is another area where custom AI solutions shine. By training models on smaller, community-specific datasets, these tools make digital language resources possible even for communities with limited recorded materials. Rather than relying on general-purpose models trained on data scraped from the open internet, these systems draw on curated, community-approved content. This greatly reduces the risk of fabricated or misrepresented language.
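A closed-vocabulary lookup shows the difference from a generative model. The tool answers only from community-approved entries and says plainly when it has none, rather than producing a plausible-looking guess. The following is a minimal sketch. The phrase table holds placeholders, not real translations. A production tool would add audio, approval metadata, and more forgiving matching.

```python
from typing import Optional

# Placeholder entries only. A real table would hold community-approved translations,
# ideally with a record of who approved each one.
APPROVED_PHRASES: dict[str, str] = {
    "hello": "<approved phrase #1>",
    "thank you": "<approved phrase #2>",
}


def normalise(text: str) -> str:
    """Ignore case and extra whitespace so small typing differences still match."""
    return " ".join(text.lower().split())


def lookup(english: str) -> Optional[str]:
    """Return an approved phrase or None; this function never generates new text."""
    return APPROVED_PHRASES.get(normalise(english))


def respond(english: str) -> str:
    phrase = lookup(english)
    if phrase is None:
        return "No approved entry yet. Please ask a language holder."
    return phrase


print(respond("  Thank   You "))  # <approved phrase #2>
print(respond("goodbye"))         # No approved entry yet. Please ask a language holder.
```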

Workflow Automation and CRM Integration

Custom software can significantly reduce administrative burdens, freeing up time for community-focused work. Automated systems streamline tasks such as invoice processing, federal reporting to Indigenous Services Canada (ISC) and Crown-Indigenous Relations and Northern Affairs Canada (CIRNAC), and program management. Work that once took days can shrink to hours. In the Post-Secondary Student Support Program (PSSSP), for instance, automation handles application intake through OCR scanning, checks eligibility, and ranks applicants by priority. This allows Education Coordinators to focus on supporting students.
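The eligibility and ranking steps can be sketched as two deterministic passes. The first filters applicants against program criteria. The second sorts the eligible pool by the Nation's priority order. The fields, criteria, and priority order below are hypothetical placeholders rather than actual PSSSP policy. OCR intake is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Applicant:
    applicant_id: str
    is_member: bool
    enrolled_in_eligible_program: bool
    returning_student: bool  # hypothetical priority factor
    submitted_day: int       # day of the intake window the file became complete


def eligible(a: Applicant) -> bool:
    """Hypothetical minimum criteria; each Nation sets its own."""
    return a.is_member and a.enrolled_in_eligible_program


def rank(applicants: list[Applicant]) -> list[Applicant]:
    """Returning students first, then earliest complete file, then ID as a stable tie-break."""
    pool = [a for a in applicants if eligible(a)]
    return sorted(pool, key=lambda a: (not a.returning_student, a.submitted_day, a.applicant_id))


apps = [
    Applicant("A3", True, True, False, 2),
    Applicant("A1", True, True, True, 5),
    Applicant("A2", False, True, True, 1),
]
print([a.applicant_id for a in rank(apps)])  # ['A1', 'A3']
```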

Custom CRM systems can also enforce membership codes and track lineage with full audit trails. They support the OCAP principles (Ownership, Control, Access, and Possession) in part by keeping data within Canadian borders, which limits exposure to foreign laws such as the US CLOUD Act.
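Audit trails are often kept as append-only logs. The sketch below uses hash chaining, a general technique chosen here for illustration rather than taken from any particular CRM. Each entry stores a SHA-256 hash of its contents plus the previous entry's hash, so editing or deleting an earlier entry breaks verification. The field names are hypothetical, and a real log would also record timestamps.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Sorted keys give a stable serialization, so the same body always hashes the same.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Append-only log; each entry carries its own hash and the previous entry's hash."""
    entries: list[dict] = field(default_factory=list)

    def append(self, actor: str, action: str, record_id: str) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"actor": actor, "action": action, "record_id": record_id, "prev": prev_hash}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute every hash; an edited or removed earlier entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("registrar_1", "update_lineage", "member-0042")
log.append("registrar_2", "view", "member-0042")
print(log.verify())  # True
log.entries[0]["actor"] = "someone_else"  # simulate tampering
print(log.verify())  # False
```

`verify()` alone does not catch removal of only the newest entry. Storing the latest hash separately closes that gap.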

Scalable Solutions for Different Community Needs

Scalability is another strength of custom software. Modular automation allows these systems to integrate with existing legacy platforms, such as Xyntax or Sage, without requiring costly overhauls. In remote areas with unreliable internet, offline functionality ensures local operations can continue uninterrupted, with data syncing once connectivity is restored.
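Offline operation of this kind is commonly built as a local queue that replays changes once a connection returns. The following is a minimal sketch. It assumes a hypothetical `send` callable that uploads one change and returns False, or raises `ConnectionError`, when the link is down. Merging conflicting edits is omitted, although real deployments need it.

```python
from collections import deque
from typing import Callable


class OfflineQueue:
    """Record changes locally and push them upstream, oldest first, when a link is available."""

    def __init__(self, send: Callable[[dict], bool]) -> None:
        self._send = send  # hypothetical upload function supplied by the caller
        self._pending: deque = deque()

    def record(self, change: dict) -> None:
        # Local work never waits on the network.
        self._pending.append(change)

    def sync(self) -> int:
        """Send queued changes in order; stop at the first failure. Returns how many were sent."""
        sent = 0
        while self._pending:
            try:
                ok = self._send(self._pending[0])
            except ConnectionError:
                ok = False
            if not ok:
                break  # leave the change queued and retry on the next sync
            self._pending.popleft()
            sent += 1
        return sent

    @property
    def pending(self) -> int:
        return len(self._pending)


# Simulated connection for the example.
online = False
uploaded: list[dict] = []


def fake_send(change: dict) -> bool:
    if not online:
        return False
    uploaded.append(change)
    return True


outbox = OfflineQueue(fake_send)
outbox.record({"type": "attendance", "id": 1})
outbox.record({"type": "attendance", "id": 2})
print(outbox.sync(), outbox.pending)  # 0 2
online = True
print(outbox.sync(), outbox.pending)  # 2 0
```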

Before implementation, it’s critical to classify data by sensitivity. Membership records and cultural knowledge, for example, demand higher protection levels compared to general administrative data.
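One way to make that classification operational is to map each record type to a sensitivity tier. Each tier then maps to the minimum controls it requires. Anything not yet inventoried defaults to the strictest tier. The tiers, record types, and control names below are illustrative assumptions; each community would define its own.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    GENERAL = 1     # routine administrative data
    RESTRICTED = 2  # e.g. membership and health records
    SACRED = 3      # cultural knowledge governed by community protocol


# Illustrative inventory; a real one is built with the community.
RECORD_TYPES: dict[str, Sensitivity] = {
    "invoice": Sensitivity.GENERAL,
    "meeting_schedule": Sensitivity.GENERAL,
    "membership_record": Sensitivity.RESTRICTED,
    "health_claim": Sensitivity.RESTRICTED,
    "cultural_knowledge": Sensitivity.SACRED,
}

# Minimum controls per tier (illustrative).
CONTROLS: dict[Sensitivity, dict[str, bool]] = {
    Sensitivity.GENERAL: {
        "indigenous_controlled_storage": False, "generative_ai_allowed": True, "elder_review": False,
    },
    Sensitivity.RESTRICTED: {
        "indigenous_controlled_storage": True, "generative_ai_allowed": False, "elder_review": False,
    },
    Sensitivity.SACRED: {
        "indigenous_controlled_storage": True, "generative_ai_allowed": False, "elder_review": True,
    },
}


def classify(record_type: str) -> Sensitivity:
    """Record types missing from the inventory default to the most protective tier."""
    return RECORD_TYPES.get(record_type, Sensitivity.SACRED)


def required_controls(record_type: str) -> dict[str, bool]:
    return CONTROLS[classify(record_type)]


print(classify("health_claim").name)                     # RESTRICTED
print(required_controls("survey_notes")["elder_review"])  # True
```

Defaulting unknown record types to the strictest tier means a gap in the inventory fails safe rather than exposing data.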

One example of these principles in action is Digital Fractal Technologies Inc. This company specializes in custom software development and AI consulting tailored to meet these specific needs. Their services include workflow automation, custom CRM systems, and purpose-built applications, all designed to keep control firmly in the hands of the community while adhering to Canadian data residency standards.

Conclusion

AI holds potential for empowering Indigenous communities, particularly in areas like language preservation, governance, and healthcare. However, this potential comes with a serious risk – digital colonialism. When control over design, data, and decision-making is taken away, it can lead to the exploitation of Indigenous knowledge and resources.

To address these challenges, a shift in perspective is essential. Data needs to be treated as "kin" – not as a resource to exploit, but as a living connection to people, histories, and sacred traditions. This means moving beyond token consultation and ensuring Indigenous Peoples take on roles as designers, engineers, and decision-makers in AI technology development. Technology itself is merely a tool; its value depends entirely on how and by whom it is used.

The foundation of AI initiatives must be Tribal Digital Sovereignty, guided by OCAP principles (ownership, control, access, and possession) and supported by investments in digital infrastructure to bridge the existing technological gaps. Without these protections, AI risks becoming yet another mechanism of colonization, undermining Indigenous autonomy and misrepresenting traditional knowledge.

There are already promising examples of what is possible. The Cherokee Nation’s AI legal agent and Te Hiku Media’s Māori speech model demonstrate the power of community-led AI projects. These successes highlight a critical truth: community control is not optional – it is indispensable.

The future of AI in Indigenous communities must prioritize sovereignty-by-design. When Indigenous communities lead the way, technology can truly serve their needs and honour their traditions.

FAQs

What does data sovereignty mean for AI in Indigenous communities?

Data sovereignty in Indigenous communities is about their right to oversee and govern their own data. This includes everything from cultural and linguistic information to personal data. It’s a way to ensure they maintain ownership and control, preventing misuse or exploitation of their knowledge and resources.

By asserting this sovereignty, communities can ensure that AI development respects their values and traditions. It helps protect cultural knowledge, avoid the risks of digital colonialism, and support ethical AI practices. Ultimately, it’s a step toward achieving digital self-determination.

How can a Nation use AI without exposing sacred or sensitive data?

A Nation can protect its sacred or sensitive information by asserting data sovereignty and keeping communities in control of their data. In practice, this means establishing community-led governance and classifying data by sensitivity. It also means excluding sacred or ceremonial material from model training through enforceable agreements, and involving community members directly in decisions about how their data is used.

By building AI systems that are developed and managed locally – rather than depending on external datasets – cultural values can be preserved, and the risk of misuse is reduced. This approach not only keeps sensitive information secure but also allows communities to benefit from the opportunities AI offers without compromising their principles.

What’s the first step to start a community-led AI project?

The starting point is to ground the project in community sovereignty and values. Set up governance structures that prioritize community control over their data and ensure AI applications align with their cultural priorities. Engage community members from the outset to help shape the project’s goals and protect their data rights. This method ensures the initiative honours and addresses the community’s distinct needs and ambitions.