GDPR Compliance for Image Recognition Systems

GDPR compliance is mandatory for any organization processing the personal data of individuals in the EU, including Canadian businesses using image recognition technology. Non-compliance can lead to fines of up to €20 million or 4% of global annual turnover, whichever is higher. Image data, especially when processed into biometric identifiers for unique identification, falls into GDPR’s special categories and requires strict safeguards.

Key points to know:

- Personal Data: Images that identify individuals directly (e.g., clear facial features) or indirectly (e.g., tattoos, backgrounds) fall under GDPR.

- Biometric Data: Special category data, like facial geometry or fingerprints, requires explicit consent or a strong legal justification.

- Legal Bases: Explicit consent, legitimate interests, or contractual necessity are common grounds for processing image data.

- Automated Decisions: Decisions with legal or similarly significant effects can’t rest solely on automated systems like facial recognition; GDPR’s Article 22 calls for meaningful human oversight.

- Anonymization: Techniques like Gaussian blurring or AI-driven anonymization are crucial to protect privacy and meet regulations.

- Technical Measures: Encryption, audit trails, and automated consent systems help ensure compliance.

- DPIAs: Data Protection Impact Assessments are required for high-risk activities, such as biometric data processing.

Next Steps: Canadian businesses should align their image recognition systems with GDPR by implementing proper safeguards, conducting DPIAs, and ensuring compliance with both EU and domestic privacy laws.

GDPR-Sensitive Data in Image Recognition

What Counts as Personal Data in Images?

Under GDPR, images are considered personal data if they allow for direct or indirect identification of an individual. While not every photograph is subject to GDPR, the bar for what qualifies is lower than many organisations might expect. Direct identification is straightforward – think clear shots of someone’s face, a visible name tag, or other obvious identifiers. But even indirect identifiers, such as facial features, distinctive tattoos, unique clothing, or background details that hint at a location, can make an image personal data.

For instance, images with facial resolutions above 40 pixels often allow reliable identification. Even lower-resolution images may fall under GDPR if the context makes identification possible. Context is everything – additional details in the photo or accompanying data could turn an otherwise non-identifiable image into personal data.

Some images may also reveal sensitive information, such as racial or ethnic origin, religious beliefs, health conditions, or sexual orientation. However, GDPR Recital 51 clarifies that photographs are not automatically treated as special category data unless processed using specific technologies aimed at unique identification.

This understanding of personal data sets the stage for the stricter rules that apply to biometric data.

Biometric Data and Its Special Classification

Biometric data is subject to even tighter regulations because it involves unique identifiers. The distinction between a regular photograph and biometric data lies in how the image is processed and for what purpose. According to Article 4(14) of GDPR, biometric data refers to "personal data resulting from specific technical processing relating to the physical, physiological or behavioural characteristics of a natural person, which allow or confirm the unique identification of that natural person". This includes things like facial geometry, fingerprints, iris patterns, voice traits, and even behaviours like how someone types or walks.

For example, an employee ID photo stored in a directory is simply personal data. However, if that photo is converted into a biometric template – say, for facial recognition – it becomes special category data. The ICO highlights that any use of biometric data to uniquely identify an individual qualifies as processing special category biometric data.

This classification has serious consequences. Article 9 of GDPR prohibits processing special category data by default unless specific conditions are met. These conditions include obtaining explicit consent or showing a substantial public interest that is carefully balanced against privacy risks. The prohibition applies even if the data is processed only momentarily before deletion. For Canadian companies using image recognition systems, understanding these distinctions is crucial to ensuring compliance.


Legal Bases for Processing Image Data

Explicit Consent and Its Role in Compliance

When handling image data for unique identification, organisations must satisfy both a lawful basis under Article 6 and a condition under Article 9 of the GDPR. In most commercial settings, that condition is explicit consent under Article 9(2)(a). This type of consent goes beyond the standard, demanding a clear affirmative action. Simply relying on pre-checked boxes or implied agreement won’t cut it. Consent must meet strict criteria – it has to be freely given, specific, informed, and unambiguous.

One common pitfall is failing to ensure that consent is genuinely voluntary. To achieve this, organisations need to provide alternatives to biometric systems, like key cards or PIN codes. Moreover, businesses must maintain detailed records of consent, including timestamps and the exact wording used. This is crucial because individuals have the right to withdraw their consent at any time – and doing so should be just as simple as giving it in the first place.
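The record-keeping described above can be sketched as a small data structure. This is only an illustration under stated assumptions: the `ConsentRecord` schema, its field names, and the `may_process` helper are hypothetical and not taken from any particular consent platform.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ConsentRecord:
    """One explicit-consent event for one purpose (hypothetical schema)."""
    subject_id: str
    purpose: str               # e.g. "door-access"
    wording_shown: str         # exact text the person agreed to
    alternative_offered: str   # non-biometric option, e.g. "key card"
    given_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    withdrawn_at: Optional[datetime] = None

    def withdraw(self) -> None:
        """Withdrawal is a single call, as simple as giving consent."""
        if self.withdrawn_at is None:
            self.withdrawn_at = datetime.now(timezone.utc)

    @property
    def is_active(self) -> bool:
        return self.withdrawn_at is None


def may_process(record: Optional[ConsentRecord], purpose: str) -> bool:
    """Allow processing only with active consent for this exact purpose."""
    return record is not None and record.is_active and record.purpose == purpose
```

Tying the check to a specific purpose means consent given for door access would not silently cover something like model training, and a withdrawn record blocks processing from the moment `withdraw()` is called.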

Alternatives to Consent for Data Processing

Explicit consent isn’t always practical, so other legal bases may be required. One such option is legitimate interests under Article 6(1)(f), often used for security measures like CCTV. However, this approach demands a thorough three-part assessment: identifying a legitimate interest (e.g., theft prevention), proving that processing is necessary, and balancing this interest against individuals’ privacy rights. While this works for general security footage, it usually can’t be the sole basis for processing sensitive biometric data under Article 9.

Another option is contractual necessity under Article 6(1)(b). This applies when processing is essential for delivering a service, such as using an employee’s ID photo for building access. But this basis has limits – it can’t be stretched to cover unrelated purposes like AI training or improving services. Regulators have penalised organisations whose chosen justification did not hold up. In July 2021, Spain’s Data Protection Authority (AEPD) fined the supermarket chain Mercadona €2.52 million for using facial recognition in 40 stores. The AEPD ruled that preventing theft, while important, didn’t meet the "substantial public interest" threshold required for processing customers’ biometric data.

Other legal grounds include legal obligation under Article 6(1)(c), which applies when processing is required by EU or national law, such as for Anti-Money Laundering (AML) checks. Similarly, public task under Article 6(1)(e) applies to public authorities performing statutory duties, like municipal surveillance or traffic enforcement. For biometric data, Article 9(2)(g) allows processing for substantial public interest, but only within a strong legal framework and with specific safeguards. For instance, in August 2020, the UK Court of Appeal ruled that South Wales Police’s use of Live Facial Recognition (LFR) was unlawful due to insufficient legal clarity and a flawed Data Protection Impact Assessment (DPIA).

GDPR’s Article 22 and Automated Decision-Making

In addition to selecting a lawful basis for processing, organisations must also navigate GDPR rules on automated decision-making. Article 22 grants individuals the right not to be subjected to decisions based solely on automated systems that have legal or similarly significant effects. This includes profiling through image recognition technologies. For example, if a facial recognition system denies building access, flags someone as a security risk, or influences hiring decisions, meaningful human involvement is required.

"A high-probability ‘match’ from an FRT system is not factual proof of identity but an algorithmic suggestion. This necessitates robust human oversight." – EUGDPR.ai Staff

While automated tools can assist decision-making, a human must review the system’s output and have the authority to override it. The reviewer should understand the system’s mechanics and consider context that the algorithm might miss. Since facial recognition provides a statistical likelihood rather than absolute certainty, human oversight is both a legal necessity and a practical measure to prevent errors and discrimination.
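One way to picture this oversight is a workflow in which the system only proposes outcomes and never issues a denial on its own. The sketch below is an assumption-laden illustration: the `REVIEW_THRESHOLD` value, the type names, and the outcome labels are all hypothetical and would need tuning and legal review in a real deployment.

```python
from dataclasses import dataclass


@dataclass
class MatchResult:
    subject_id: str
    score: float       # similarity in [0, 1]; a likelihood, not proof of identity


@dataclass
class Decision:
    outcome: str       # "granted", "denied" or "pending_review"
    decided_by: str    # "system" or a reviewer identifier


REVIEW_THRESHOLD = 0.90  # hypothetical cut-off, not a recommended value


def propose(match: MatchResult) -> Decision:
    """The system may grant on a strong match but never denies by itself."""
    if match.score >= REVIEW_THRESHOLD:
        return Decision("granted", "system")
    return Decision("pending_review", "system")


def human_review(proposed: Decision, reviewer_id: str, outcome: str) -> Decision:
    """A reviewer confirms or overrides any system proposal."""
    if outcome not in ("granted", "denied"):
        raise ValueError("reviewer must reach a final outcome")
    return Decision(outcome, reviewer_id)
```

Because every adverse outcome carries a reviewer identifier in `decided_by`, the resulting records also show who exercised the override authority, which helps when demonstrating that the human involvement was meaningful.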


Anonymization Techniques for GDPR Compliance

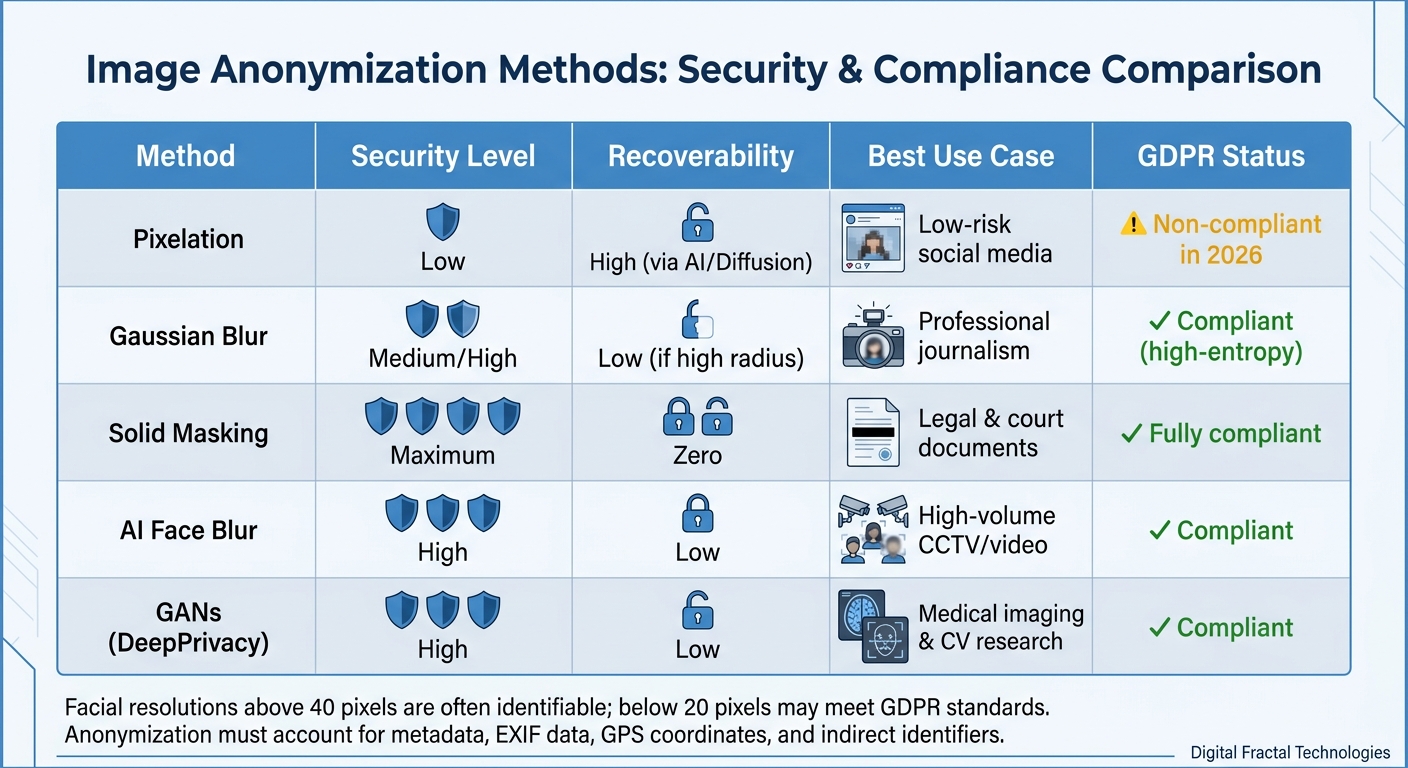

Image Anonymization Methods Comparison for GDPR Compliance

Techniques for Anonymizing Image Data

Anonymizing data isn’t explicitly mandated by GDPR, but it is one of the most effective ways to reduce compliance risk. When data is truly anonymized, it’s no longer covered by GDPR because it cannot be traced back to an individual. The tricky part? Proving that your method of anonymization holds up under the "means reasonably likely" test, which evaluates the potential for re-identification using available technology.

Take pixelation, for example. In 2026, relying on this method is risky because AI models can now reconstruct faces from pixelated images. Instead, organisations should turn to methods like high-radius Gaussian blurring, which is much harder to reverse, or solid-fill masking, which removes the underlying pixels entirely. Resolution matters as well: images with facial resolutions above 40 pixels are often identifiable, while those reduced to below 20 pixels may meet GDPR standards.
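Solid-fill masking is simple enough to sketch without any imaging library. The example below is a minimal illustration that assumes a grayscale image held as a list of pixel rows and a face bounding box already supplied by some detector; the `solid_mask` name and the box format are hypothetical, and a production system would typically rely on an imaging library instead.

```python
from typing import List, Tuple

Image = List[List[int]]           # grayscale pixels, row-major
Box = Tuple[int, int, int, int]   # (left, top, right, bottom); right and bottom exclusive


def solid_mask(image: Image, box: Box, fill: int = 0) -> Image:
    """Return a copy of image with every pixel inside box set to fill."""
    left, top, right, bottom = box
    height = len(image)
    width = len(image[0]) if image else 0
    # Clamp the box so a detector box that overshoots the frame is still handled.
    left, right = max(0, left), min(width, right)
    top, bottom = max(0, top), min(height, bottom)
    masked = [row[:] for row in image]  # copy rows; the input is left untouched
    for y in range(top, bottom):
        for x in range(left, right):
            masked[y][x] = fill
    return masked
```

Unlike blurring or pixelation, the masked region keeps no function of the original pixel values, so there is nothing for a reconstruction model to work from inside the box.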

"The shift we are seeing in 2026 is a move away from ‘good enough’ privacy toward ‘provable’ privacy. If your privacy masking doesn’t account for metadata and reflection-based re-identification, you are non-compliant."

– Marcus Thorne, Senior Data Privacy Auditor

True anonymization goes beyond just obscuring faces. It also involves removing EXIF data, GPS coordinates, device identifiers, and even indirect clues like tattoos, name tags, or reflections. A study of 1.4 million images in the ILSVRC dataset revealed that 17% of the images featured identifiable faces, highlighting how challenging this process can be.
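For the metadata side, an allowlist tends to be safer than a denylist, because unknown fields are dropped by default. The sketch below assumes metadata has already been parsed into a dictionary; the `SAFE_KEYS` set and the key names are hypothetical and do not correspond to any specific EXIF library.

```python
from typing import Any, Dict

# Hypothetical allowlist: only technical fields that cannot point to a person.
SAFE_KEYS = frozenset({"width", "height", "format", "color_space"})


def strip_metadata(metadata: Dict[str, Any]) -> Dict[str, Any]:
    """Keep only allowlisted keys; GPS, device IDs and anything unknown are dropped."""
    return {key: value for key, value in metadata.items() if key in SAFE_KEYS}
```

With this approach, a new vendor-specific tag that happens to carry a serial number is removed without anyone having to anticipate it.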

| Method | Security Level | Recoverability | Best Use Case |
|---|---|---|---|
| Pixelation | Low | High (via AI/Diffusion) | Low-risk social media |
| Gaussian Blur | Medium/High | Low (if high radius) | Professional journalism |
| Solid Masking | Maximum | Zero | Legal & court documents |
| AI Face Blur | High | Low | High-volume CCTV/video |
| GANs (DeepPrivacy) | High | Low | Medical imaging & CV research |

These methods set the foundation for advanced, AI-driven anonymization.

AI Tools for Image Anonymization

Modern AI tools take these techniques to the next level, combining precision and efficiency. Tools powered by Convolutional Neural Networks (CNNs) can identify faces, licence plates, and even full bodies with remarkable accuracy. This automation slashes processing times from hours to mere minutes, making it possible for organisations to handle millions of images while staying GDPR-compliant.

For example, SEGULA Technologies used brighter AI’s Precision Blur to automate anonymization, saving thousands of hours and ensuring GDPR-compliant data transfers. Similarly, 360GEO leveraged the Celantur SDK, achieving an eightfold increase in processing speed for anonymized visual data workflows. These examples highlight how AI tools can balance speed with regulatory compliance.

Some advanced solutions, like those using Generative Adversarial Networks (GANs), go beyond basic blurring. Tools such as DeepPrivacy and CLEANIR generate synthetic faces to replace real ones, maintaining attributes like age, gender, and expression without risking identification. In fact, implementing these techniques on large datasets like ImageNet has shown that anonymization can be achieved with minimal accuracy loss – less than 0.68% – for recognition models.

When choosing an AI tool, look for solutions offering on-premise processing to ensure sensitive data remains secure. Tools like vAnonymize support offline processing, which is essential for organisations handling highly sensitive footage. Additionally, ensure the tool provides thorough documentation for Data Protection Impact Assessments (DPIAs) and operates on EU-based infrastructure to meet GDPR requirements. Above all, the anonymization must be irreversible, preventing any chance of data recovery.

Technical and Organizational Measures for GDPR Compliance

Data Security Measures

Securing personal data is at the heart of GDPR compliance. One of the most effective defences is encryption – both for data at rest and in transit. Using TLS 1.3 for data in transit and strong encryption such as AES-256 for stored data helps keep personal data, including biometric templates, protected. Beyond encryption, implementing Role-Based Access Control (RBAC) and multi-factor authentication (MFA) limits access to sensitive visual data to only those who are authorised.
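The access-control half of this can be sketched as a single check that combines role permissions with MFA status. The roles, actions, and `Session` type below are hypothetical placeholders; a real system would load them from its identity provider.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet

# Hypothetical role-to-permission mapping for visual data.
ROLE_PERMISSIONS: Dict[str, FrozenSet[str]] = {
    "security_officer": frozenset({"view_footage"}),
    "dpo": frozenset({"view_footage", "export_footage", "delete_footage"}),
    "developer": frozenset(),
}


@dataclass(frozen=True)
class Session:
    user_id: str
    role: str
    mfa_verified: bool


def is_allowed(session: Session, action: str) -> bool:
    """Deny by default: require MFA first, then an explicit role permission."""
    if not session.mfa_verified:
        return False
    return action in ROLE_PERMISSIONS.get(session.role, frozenset())
```

Unknown roles resolve to an empty permission set, so a misconfigured account fails closed rather than open.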

To maintain accountability and detect any unauthorised actions, comprehensive audit trails are essential. These logs track data access, modifications, failed login attempts, and deletions. Automated systems complement these security measures by managing consent and the data lifecycle efficiently.
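One common way to make such a log tamper-evident is to chain each entry to the hash of the previous one. The article does not prescribe this technique; the sketch below is an illustrative assumption, and the `AuditLog` class and its field names are hypothetical.

```python
import hashlib
import json
from typing import Dict, List


def _digest(entry: Dict[str, str], prev_hash: str) -> str:
    """Hash the entry together with the previous entry's hash."""
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log where each entry commits to everything before it."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self._entries: List[Dict[str, str]] = []

    def record(self, actor: str, action: str, target: str, timestamp: str) -> None:
        entry = {"actor": actor, "action": action, "target": target, "timestamp": timestamp}
        prev = self._entries[-1]["hash"] if self._entries else self.GENESIS
        self._entries.append({**entry, "hash": _digest(entry, prev)})

    def verify(self) -> bool:
        """Recompute the chain; any edited or removed entry breaks it."""
        prev = self.GENESIS
        for stored in self._entries:
            entry = {k: v for k, v in stored.items() if k != "hash"}
            if stored["hash"] != _digest(entry, prev):
                return False
            prev = stored["hash"]
        return True
```

A chain like this only detects tampering after the fact; it does not prevent it, so the log itself would still need restricted write access.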

Automated Consent and Deletion Systems

Automating consent verification and data deletion processes makes GDPR compliance more seamless. Many image recognition systems now work alongside Consent Management Platforms (CMPs). These platforms automatically verify whether consent has been granted before processing or publishing images. By linking images to metadata, such as consent records, timestamps, and legal bases, CMPs simplify tasks like generating subject access reports and tracking consent over time.

Systems can also be configured to automatically delete images after a specified retention period (e.g., 30 days for security footage) or immediately upon consent withdrawal. If immediate deletion from backups isn’t feasible, the data should be rendered inactive to prevent further use.
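The deletion rule above can be expressed as a small scheduled check. This sketch assumes a hypothetical `StoredImage` record and a `RETENTION` table; the 30-day figure is the example from the text, not a legal requirement.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List

# Hypothetical retention schedule, keyed by image category.
RETENTION: Dict[str, timedelta] = {
    "security_footage": timedelta(days=30),
}


@dataclass
class StoredImage:
    image_id: str
    category: str
    captured_at: datetime
    consent_withdrawn: bool = False


def due_for_deletion(images: List[StoredImage], now: datetime) -> List[str]:
    """Return IDs of images past retention or whose consent was withdrawn."""
    due = []
    for img in images:
        limit = RETENTION.get(img.category)
        expired = limit is not None and now - img.captured_at > limit
        if expired or img.consent_withdrawn:
            due.append(img.image_id)
    return due
```

Passing `now` in as a parameter keeps the function easy to test. Categories without a configured retention period are never flagged as expired here, so a real system would probably want to treat a missing entry as a configuration error.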

"Consent management forms the foundation of compliant image processing." – GDPR Local

Conducting Data Protection Impact Assessments (DPIAs)

Technical safeguards alone aren’t enough; Data Protection Impact Assessments (DPIAs) are critical for identifying and mitigating risks in data processing activities. Under Article 35 of the GDPR, conducting a DPIA is a legal requirement for processing activities that are likely to pose a high risk to individuals’ rights and freedoms. This is especially relevant for image recognition systems, which often involve new technologies, large-scale profiling, and biometric data processing. Failing to carry out a required DPIA can result in fines of up to €10 million or 2% of an organisation’s global annual turnover, whichever is higher.

A thorough DPIA should include:

- A detailed description of the processing activities

- An assessment of necessity and proportionality

- An evaluation of risks to individuals’ rights

- Measures to mitigate those risks

For image recognition, this might involve reviewing training data for bias, using encryption and anonymisation, and ensuring human oversight. Treat the DPIA as a dynamic document – update it with every system deployment or functional change. Importantly, if high residual risks remain after implementing safeguards, you must consult your national supervisory authority before moving forward.
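Keeping the DPIA as structured data makes it easier to check for gaps on every release. The sketch below mirrors the four elements listed above; the class names, field names, and the three-level risk scale are hypothetical, and the consultation check is a prompt for human judgment rather than a legal determination.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Risk:
    description: str
    mitigation: str
    residual_level: str   # "low", "medium" or "high" after mitigation


@dataclass
class DPIA:
    processing_description: str
    necessity_and_proportionality: str
    risks: List[Risk] = field(default_factory=list)

    def missing_sections(self) -> List[str]:
        """List the required elements that are still empty."""
        missing = []
        if not self.processing_description.strip():
            missing.append("processing_description")
        if not self.necessity_and_proportionality.strip():
            missing.append("necessity_and_proportionality")
        if not self.risks:
            missing.append("risks")
        if any(not risk.mitigation.strip() for risk in self.risks):
            missing.append("mitigations")
        return missing

    def needs_prior_consultation(self) -> bool:
        """Flag assessments where risk is still high after mitigation."""
        return any(risk.residual_level == "high" for risk in self.risks)
```

Running `missing_sections()` in a release checklist is one way to keep the assessment current as the system changes.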

Conclusion and Next Steps

Main Takeaways on GDPR Compliance

When it comes to GDPR compliance, aligning your image recognition systems is not just a recommendation – it’s mandatory. Non-compliance can result in hefty fines, so understanding the essentials is critical. Start with proper data classification and ensure you have a lawful basis under Article 6, plus an Article 9 condition wherever biometric data is involved. This could mean obtaining consent for marketing purposes or relying on legitimate interests for security measures. For higher-risk activities, like live facial recognition or large-scale surveillance, conducting Data Protection Impact Assessments (DPIAs) is crucial to identify and mitigate risks.

You’ll also need to implement technical and organisational measures, such as audit trails, data deletion protocols, and mechanisms to uphold individual rights. Use appropriate retention schedules – like keeping CCTV footage for 30 days or marketing images for 2–3 years. To comply with Article 22, ensure human oversight in automated decision-making processes, particularly for tasks like facial matching.

"Biometric information… is sensitive in almost all circumstances because it is intrinsically, and in most instances permanently, linked to the individual." – Office of the Privacy Commissioner of Canada (OPC)

These steps not only help you stay compliant but also set the foundation for scalable and effective solutions.

How Digital Fractal Technologies Can Help Your Business

Digital Fractal Technologies Inc is here to turn GDPR compliance into a strategic advantage. Their AI Readiness Audits offer a detailed review of your systems, processes, and potential risks, providing a clear 6–12 month roadmap to ensure compliance. These audits help you prepare for a seamless transition to compliant operations.

The company also excels in creating custom image and video processing systems tailored to your needs. They focus on data sovereignty by keeping sensitive visual data within your controlled environment. Through on-device inference, their solutions allow apps to process visual data locally, reducing the risks tied to transferring personal information to external servers.

With over four years of experience and a strong presence in Alberta, Digital Fractal Technologies has worked with industries like the public sector, construction, and heavy industry. Their team is skilled at integrating computer vision into existing platforms, ensuring compliance and efficiency. They deliver solutions that not only meet your regulatory requirements but also align seamlessly with your operational goals.

FAQs

When do images become biometric data under GDPR?

Under GDPR, images are considered biometric data when they are processed using specialised techniques to identify individuals based on unique physical or behavioural characteristics. For example, facial images used in recognition systems fall into this category. The classification hinges on both the purpose and the technology involved in the processing.

Can we use image recognition without explicit consent?

It depends on what the system does. Image recognition that doesn’t uniquely identify people can rely on an Article 6 basis such as legitimate interests. Processing biometric data to uniquely identify individuals is prohibited by default under Article 9, so it requires either explicit consent from the individual or another Article 9 condition, such as substantial public interest backed by law.

How do we prove our image anonymization is irreversible?

To ensure your image anonymization process is truly irreversible, it’s crucial to remove or obscure all identifiable features in a way that makes reconstruction of the original data impossible. Prefer techniques like solid-fill masking or high-radius Gaussian blurring over pixelation, which AI models can often reverse, and strip metadata such as EXIF and GPS data. Document every step of the process thoroughly, including the tools and methods used and how they hold up against the "means reasonably likely" test for re-identification. This documentation is what lets you demonstrate GDPR compliance if a regulator asks.