Best Practices for AI Vulnerability Management

Managing AI vulnerabilities is no longer optional. With the rapid rise of AI integration across industries like finance, healthcare, and public services, risks such as data poisoning, model drift, and prompt injection are becoming more frequent and impactful. Traditional security tools can’t keep up with the speed and complexity of AI-specific threats. Here’s what you need to know:

- AI-specific risks include poisoned data, stolen models, and malicious prompts that can lead to skewed outputs or intellectual property theft.

- Detection tools need to go beyond static scanning, using behavioural monitoring and anomaly detection to identify issues like logic flaws and polymorphic malware.

- Regulations in Canada, such as PIPEDA and Quebec’s Law 25, require prompt reporting of privacy breaches, including those involving AI systems, with hefty fines for non-compliance.

- Lifecycle approach: Effective AI security involves continuous monitoring, regular testing, and structured vulnerability management from design to decommissioning.

AI systems require tailored security strategies, combining frameworks like NIST AI RMF and ISO/IEC 42001 with advanced detection tools and strict data governance. Canadian organizations should prioritize risks based on business impact, regulatory compliance, and evolving threats. Let’s dive into how to secure AI systems effectively.

Core Principles of AI Vulnerability Management

What AI Vulnerability Management Covers

AI vulnerability management spans the entire AI ecosystem, addressing risks at every layer – from training data pipelines to user interfaces. Each stage introduces potential vulnerabilities that can undermine the system if left unchecked.

Key areas of focus include:

- Training data pipelines: Poisoned inputs can alter model behaviour.

- Model artifacts: These trained models are susceptible to theft or tampering.

- Deployment infrastructure: This includes servers, cloud environments, and hosting configurations, all of which can be targeted.

- APIs: Attackers may exploit these to extract models or inject malicious prompts.

- Application code and user interfaces: Unpredictable inputs from users can introduce risks.

Beyond technical vulnerabilities, ethical concerns like algorithmic bias and opaque decision-making come into play. A notable example is the September 2025 Workday lawsuit, where an HR technology company faced legal challenges for eliminating human oversight in its AI-driven candidate screening process.

By addressing these layers comprehensively through AI consulting services, organizations can align their practices with established AI security frameworks and standards.

Frameworks and Standards for AI Security

Canadian organizations can rely on several established frameworks to guide their AI security efforts. Here’s a comparison of key standards:

| Framework | Nature | Primary Focus | Canadian Relevance |
|---|---|---|---|
| NIST AI RMF | Voluntary | Practical risk management across the AI lifecycle: Govern, Map, Measure, Manage | Recommended by the Canadian Centre for Cyber Security |
| ISO/IEC 42001:2023 | Certifiable standard | AI Management System (AIMS) requirements; auditable | Aligns with Canada’s Voluntary Code of Conduct |
| MITRE ATLAS | Threat matrix | Adversarial tactics, techniques, and procedures (TTPs) | Useful for red-teaming and threat modelling |
| Canada Voluntary Code | Voluntary | Focuses on safety, transparency, and accountability for generative AI systems | Directly relevant to Canadian organizations |

The NIST AI Risk Management Framework (AI RMF) stands out for its practical application in engineering and security. It operates as an ongoing cycle with four core functions: Govern, Map, Measure, and Manage. According to Palo Alto Networks:

"The ‘Govern’ function emphasizes the cultivation of a risk-aware organizational culture, recognizing that effective AI risk management begins with leadership commitment."

For organizations in regulated industries, combining NIST AI RMF with ISO/IEC 42001 provides both actionable guidance and an auditable framework, helping meet OSFI compliance requirements.

However, adopting these frameworks is just the start. Managing AI vulnerabilities requires a consistent and structured approach, as outlined in the lifecycle below.

The Vulnerability Management Lifecycle

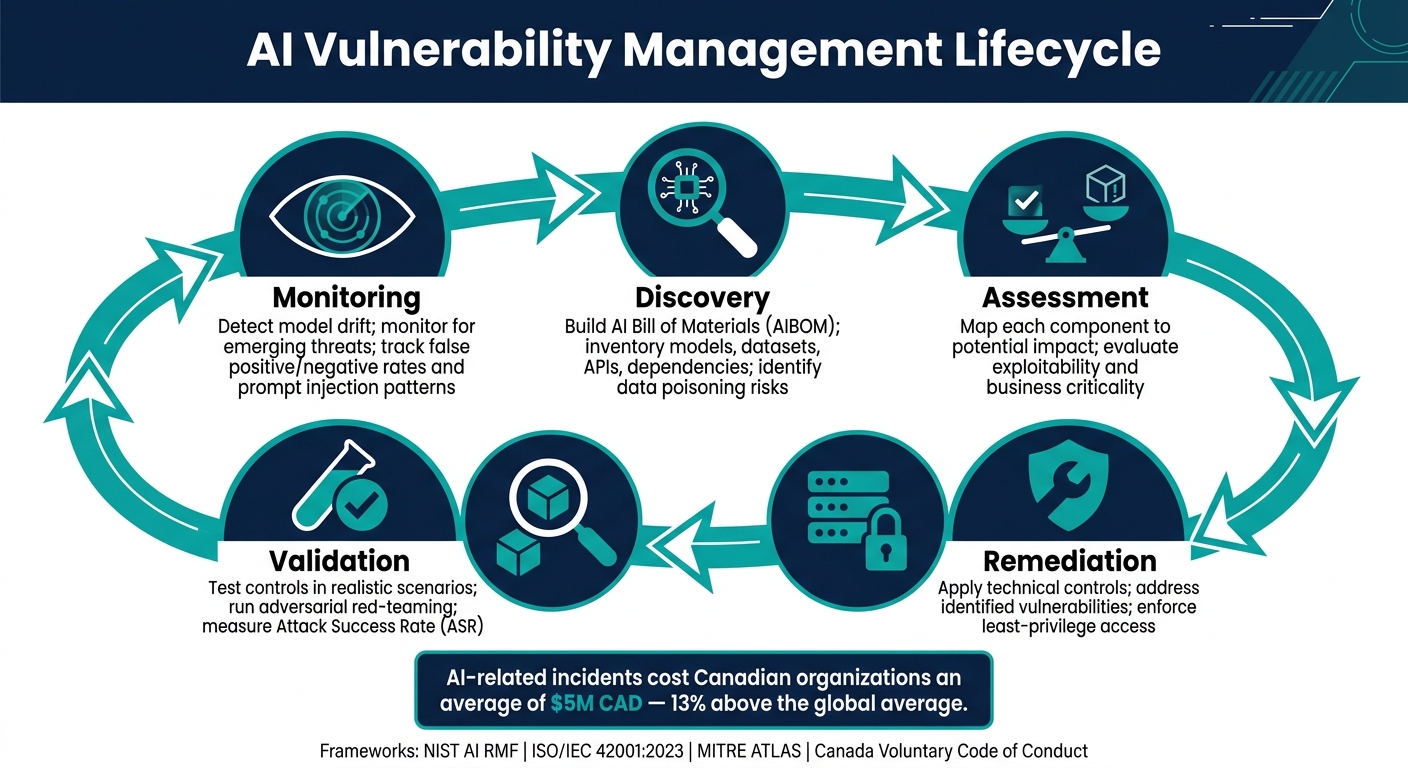

Managing AI vulnerabilities is not a one-and-done task – it’s an ongoing cycle that begins at the design phase and continues until decommissioning.

"Safe and responsible AI governance begins with development, which includes preliminary stages such as ideation, planning, and design." – Innovation, Science and Economic Development Canada

This lifecycle consists of five interconnected stages:

- Discovery: Start with an AI Bill of Materials (AIBOM) that inventories every model, dataset, third-party API, and dependency. This step identifies risks like data poisoning by tracking elements such as model weights and training datasets.

- Assessment: Map each component to its potential impact, considering how vulnerabilities might affect the system.

- Remediation: Apply technical controls to address identified vulnerabilities.

- Validation: Test the effectiveness of these controls in realistic scenarios.

- Monitoring: Keep an eye out for emerging threats and model drift – the gradual decline in performance as new real-world data diverges from the original training data.
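The Discovery stage can be made concrete with a small inventory structure. The following is a minimal sketch, assuming a hypothetical `AIBOMEntry` record and made-up component names; real AIBOM tooling and field sets will differ, and the byte strings stand in for actual model and dataset files.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class AIBOMEntry:
    """One inventoried component (hypothetical schema for illustration)."""
    name: str
    kind: str      # "model", "dataset", "api", or "dependency"
    version: str
    source: str    # where the component was obtained
    sha256: str    # content hash of the artifact, used to spot tampering


def fingerprint(content: bytes) -> str:
    """Return the SHA-256 hex digest of an artifact's bytes."""
    return hashlib.sha256(content).hexdigest()


def build_aibom(entries: list[AIBOMEntry]) -> str:
    """Serialize entries to JSON in a stable order so diffs stay readable."""
    ordered = sorted(entries, key=lambda e: (e.kind, e.name))
    return json.dumps([asdict(e) for e in ordered], indent=2)


# Stand-in bytes; in practice these would be read from the real files.
weights = b"example model weights"
rows = b"example training rows"

entries = [
    AIBOMEntry("sentiment-classifier", "model", "1.2.0",
               "internal-registry", fingerprint(weights)),
    AIBOMEntry("support-tickets", "dataset", "snapshot-7",
               "internal-warehouse", fingerprint(rows)),
]
aibom_json = build_aibom(entries)
```

Recomputing each hash at deployment time and comparing it with the recorded value is one simple way to notice that a model file or dataset snapshot has changed since it was inventoried.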

Each phase is critical for maintaining system integrity. This structured approach aligns with Canadian regulatory expectations, and the stakes are high: incidents involving AI systems in Canada cost an average of CA$5 million, about 13% higher than the global average for data breaches.


Detecting and Mitigating AI Vulnerabilities


Types of AI Vulnerabilities by Layer

AI systems face risks at every layer, and identifying these vulnerabilities is crucial to addressing them before they lead to problems.

At the data layer, common issues include data poisoning and sensitive data leaks. A well-known example is the Samsung incident from early 2023. Over just 20 days, employees unintentionally shared sensitive source code and hardware details with a generative AI tool three separate times. This led Samsung to temporarily ban the use of such tools entirely.

The model layer introduces its own threats. For instance, serialization vulnerabilities in Python’s pickle format are particularly concerning. This format allows arbitrary code execution through the `__reduce__` method, meaning a malicious file could silently execute system commands when loaded. Another risk is "sleeper agent" poisoning, where a model appears normal but activates harmful behaviour when triggered by a specific input.

At the pipeline and infrastructure layers, attackers often exploit supply chain weaknesses. This can include poisoned pre-trained models from public repositories, malicious dependencies, or prompt injection via untrusted documents or emails.
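To illustrate the pickle risk described above, here is a minimal sketch of a pre-load check that inspects a pickle's opcodes with the standard library's `pickletools` instead of loading the file. The opcode list and the harmless `Payload` class are assumptions chosen for illustration; legitimate model checkpoints also use some of these opcodes, so a real scanner would need an allowlist of trusted imports.

```python
import pickle
import pickletools

# Opcodes that import or call objects during unpickling (illustrative list).
SUSPICIOUS_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX",
}


def suspicious_opcodes(data: bytes) -> set[str]:
    """Return the import/call opcodes found in a pickle, without loading it."""
    found = {opcode.name for opcode, _arg, _pos in pickletools.genops(data)}
    return found & SUSPICIOUS_OPCODES


class Payload:
    """Harmless stand-in for a malicious object: it would call print on load."""

    def __reduce__(self):
        return (print, ("code ran during unpickling",))


benign = pickle.dumps({"weights": [0.1, 0.2]})   # plain data only
crafted = pickle.dumps(Payload())                # carries a callable to invoke

print(suspicious_opcodes(benign))
print(sorted(suspicious_opcodes(crafted)))
```

The check never calls `pickle.loads`, so the crafted payload is inspected but not executed.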

| Layer | Key Vulnerability | Example Threat |
|---|---|---|
| Data | Data poisoning, PII leakage | Backdoor embedding via 0.1% corrupted data |
| Model | Serialization flaws, sleeper agents | Malicious pickle files executing on load |
| Pipeline | Supply chain compromise | Poisoned models from public repositories |
| Infrastructure/Interface | Prompt injection, tool coercion | CamoLeak exploit in GitHub Copilot (October 2025) |

Recognizing these vulnerabilities is the first step towards implementing effective AI detection strategies.

How AI-Powered Detection Tools Work

Traditional security scanners often fall short when it comes to AI-specific threats, such as prompt injection, model extraction, or data poisoning. AI Security Posture Management (AI-SPM) tools have emerged to fill this gap. These tools continuously monitor for unmanaged "Shadow AI" – AI tools embedded in software like IDEs, browsers, and word processors that developers might use without IT approval.

"The challenge with AI tools is their ubiquity. They are built into browsers, IDEs, word processors, and email clients. Developers are not seeking out AI – it is already present in their tools, waiting to be used." – Anjali Gopinadhan Nair, Author

Detection relies on multiple techniques. Static analysis and runtime monitoring, such as prompt firewalls, help identify vulnerable code patterns and filter inputs and outputs in real time. Anomaly detection can flag unusual patterns like "entropy collapse", where highly predictable outputs suggest a malicious trigger. For model files, there’s a shift away from the pickle format to safetensors, which is designed to avoid serializing Python objects and executing embedded logic. Automated red-teaming tools also simulate attacks, like jailbreaks or data extractions, to uncover vulnerabilities before attackers can exploit them.
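One way to approximate the "entropy collapse" signal is to measure how varied a model's output tokens are. The sketch below is an assumption-laden simplification: it uses whitespace-split tokens and the empirical Shannon entropy of their frequencies, with an arbitrary 1-bit threshold, whereas a production detector would more likely work from the model's own token probabilities.

```python
import math
from collections import Counter


def shannon_entropy(tokens: list[str]) -> float:
    """Empirical Shannon entropy (in bits) of the token frequency distribution."""
    counts = Counter(tokens)
    total = len(tokens)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def entropy_collapsed(tokens: list[str], threshold_bits: float = 1.0) -> bool:
    """Flag outputs whose token variety falls below the (assumed) threshold."""
    if not tokens:
        return False
    return shannon_entropy(tokens) < threshold_bits


normal_output = "the quick brown fox jumps".split()
repetitive_output = ["approve"] * 10

print(entropy_collapsed(normal_output))
print(entropy_collapsed(repetitive_output))
```

A flag from this check is only a prompt for review; short or templated responses can legitimately have low entropy.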

Integrating Automated Scanning into Development Workflows

To stop vulnerabilities from reaching production, it’s essential to integrate detection tools into the development process early. This aligns with the broader vulnerability management lifecycle, where shift-left security – embedding checks into the CI/CD pipeline – plays a key role.

Here are some practical steps:

- Use pre-commit hooks to scan for secrets or sensitive patterns and block them before they are committed.

- Set up policy-as-code gates to prevent unapproved model artifacts or prompt changes.

- Maintain an AI Bill of Materials (AIBOM) to track the provenance of every model, dataset, and dependency.
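As an example of the first step above, a pre-commit check can be as simple as a handful of regular expressions run over staged text. This is a minimal sketch with three illustrative patterns; the pattern names and the `find_secrets` helper are hypothetical, and dedicated secret scanners cover far more formats.

```python
import re

# Illustrative patterns only; real scanners ship much larger rule sets.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "hardcoded_api_key": re.compile(
        r"(?i)\bapi[_-]?key\b\s*[:=]\s*['\"][^'\"]{16,}['\"]"
    ),
}


def find_secrets(text: str) -> list[tuple[int, str]]:
    """Return (line_number, pattern_name) for every suspected secret."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_no, name))
    return findings


staged = (
    'model_name = "demo-model"\n'
    'aws_key = "AKIAIOSFODNN7EXAMPLE"\n'
    'api_key = "0123456789abcdef0123"\n'
)
findings = find_secrets(staged)
```

A hook script would run this over each staged file and exit with a non-zero status when `findings` is not empty, which blocks the commit.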

Additionally, version control should cover not just source code but also dataset snapshots, model configurations, and weights. This allows for quick rollbacks if a poisoned artifact is detected.

It’s important to avoid over-reliance on AI scanner confidence scores. Calibrate these scores against a labelled dataset of known issues to reduce false positives and prevent unnecessary distractions. Before applying new security rules across the board, use canary deployments – testing them on a less critical project first to measure their effectiveness and impact on developers. As Gartner points out, one common mistake in vulnerability management is overwhelming operations teams with lengthy reports of vulnerabilities without context or prioritization.
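Calibrating scanner scores can start with a small labelled set and a target precision. The sketch below assumes hypothetical `(score, is_real_issue)` pairs and picks the lowest score threshold whose flagged findings meet that target; the data and function names are made up for illustration.

```python
from typing import Optional


def precision_at(threshold: float, scored: list[tuple[float, bool]]) -> Optional[float]:
    """Share of findings at or above the threshold that are real issues."""
    flagged = [is_real for score, is_real in scored if score >= threshold]
    if not flagged:
        return None
    return sum(flagged) / len(flagged)


def lowest_threshold_meeting(target: float,
                             scored: list[tuple[float, bool]]) -> Optional[float]:
    """Lowest observed score that reaches the target precision, if any."""
    for threshold in sorted({score for score, _ in scored}):
        precision = precision_at(threshold, scored)
        if precision is not None and precision >= target:
            return threshold
    return None


# Hypothetical labelled findings: (scanner confidence, confirmed real issue?)
labelled = [
    (0.95, True), (0.90, True), (0.80, False),
    (0.70, True), (0.60, False), (0.40, False),
]
chosen = lowest_threshold_meeting(0.75, labelled)
```

Precision does not always rise smoothly with the threshold, so it is worth checking the values just above the chosen cut-off before relying on it.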

Prioritizing Vulnerabilities and Managing Threats

Risk-Based Prioritization

After identifying vulnerabilities, the next hurdle is deciding which ones to address first. Relying solely on generic CVSS scores can be misleading since they don’t account for the importance of specific components. A more targeted approach combines three elements: a severity score (blending CVSS, EPSS, and community trends), a component score (measuring the criticality of the affected component), and a topic score (grouping related components using clustering methods).
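A combined score of this kind might be sketched as follows. The weights, the 0-to-1 scaling, and the example findings are all assumptions for illustration, and the community-trends input mentioned above is left out for brevity.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    finding_id: str
    cvss: float                   # base score, 0.0 to 10.0
    epss: float                   # exploit probability, 0.0 to 1.0
    component_criticality: float  # 0.0 to 1.0, how critical the component is
    topic_relevance: float        # 0.0 to 1.0, from clustering related components


def priority(f: Finding, w_sev: float = 0.5, w_comp: float = 0.3,
             w_topic: float = 0.2) -> float:
    """Weighted blend of severity, component, and topic scores (assumed weights)."""
    severity = 0.5 * (f.cvss / 10.0) + 0.5 * f.epss
    return (w_sev * severity
            + w_comp * f.component_criticality
            + w_topic * f.topic_relevance)


findings = [
    # High CVSS, but unlikely to be exploited and on a low-value component.
    Finding("EXAMPLE-A", cvss=9.8, epss=0.02,
            component_criticality=0.1, topic_relevance=0.1),
    # Lower CVSS, but likely to be exploited and on a critical component.
    Finding("EXAMPLE-B", cvss=7.5, epss=0.9,
            component_criticality=0.9, topic_relevance=0.8),
]
ranked = sorted(findings, key=priority, reverse=True)
```

In this made-up example the finding with the lower CVSS score ranks first, which is the behaviour a context-aware score is meant to produce.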

A great example of this in action is Databricks’ "VulnWatch" system. By using large language models, it matches CVEs to internal libraries based on vector similarity. This innovative method has cut manual triage workload by 95% and achieved a 0% false negative rate in back-tested data.

Time is also a critical factor. The gap between discovering a vulnerability and it being actively exploited has shrunk dramatically – from weeks to mere hours. Daphne Lucas, National Leader for Cyber Security at Deloitte Canada, highlights this urgency:

"Discovery now outpaces decision-making."

To keep pace, organizations should aim to make decisions on critical vulnerabilities within 48 hours. This means either formally accepting the risk with documented reasoning or starting remediation immediately.

Threat Modelling for AI Systems

Building on risk-based prioritization, threat modelling takes it a step further by refining how organizations respond to vulnerabilities. Traditional threat modelling, designed for deterministic systems, struggles to address the probabilistic nature of AI, requiring a new approach.

The first step is identifying what needs protection: user safety, data confidentiality, output accuracy, and the system’s boundaries. Pay special attention to points where untrusted external data enters the system, as these are often the most vulnerable. One critical area is the prompt pipeline, where instructions, memory, and retrieved context intersect.

AI threat modelling must consider both intentional attacks and accidental failures. For example, prompt injection attacks can succeed in 50–90% of unprotected systems, and nearly half (48%) of AI systems have been found to leak training data. To mitigate risks from unexpected model behaviour, high-impact actions should be backed by kill-switches, emergency shutdown protocols, and rate limits. As Microsoft Security explains:

"Residual risk for AI systems is not a failure of engineering; it is an expected property of non-determinism."

Business Impact in Key Canadian Industries

Not every vulnerability carries the same weight, and the stakes vary significantly across industries. In fields where AI failures could disrupt infrastructure or endanger public safety, quick action is essential.

Take the energy and utilities sector, where IT and OT systems are deeply interconnected. A vulnerability that might seem minor in AI-enhanced mobile and web apps could have severe consequences if it affects systems monitoring pipelines or managing grid loads. Here, assessing risks must include the potential for physical disruptions alongside data breaches.

In the public sector, key concerns include national security, sovereignty, and protecting citizens from AI-driven fraud. The Canadian AI Safety Institute (CAISI) provides guidance to help government agencies evaluate and address these risks. Similarly, the Office of the Superintendent of Financial Institutions (OSFI) has flagged AI model risk as a top concern for Canadian financial institutions. It warns against overreliance on automated outputs and the dangers of model drift. As OSFI notes:

"Poorly governed AI systems can produce unreliable or unexpected results, potentially leading to operational disruption, financial loss, legal exposure, and reputational harm."

For organizations in these sectors, conducting a "crown jewels" inventory – an audit to pinpoint the most critical AI components – is a practical way to prioritize remediation. This ensures that the focus remains on protecting operations, customers, and public trust.

This combined approach to risk prioritization and threat modelling fits seamlessly into the broader AI vulnerability management lifecycle.

Designing Secure and Resilient AI Systems

Secure Hosting and Infrastructure Practices

Creating a secure environment starts with isolating AI workloads. This approach limits the chances of exploitation by ensuring that even if one part of the system is compromised, attackers can’t easily access model weights or training data.

One critical measure is to separate training environments from production systems. This segmentation prevents lateral attacks, where a breach in one area could lead to broader exposure across the network.

Another key practice is using cryptographic signing for models, code, and build artifacts. This step ensures integrity and guards against tampering. The 2024 NullBulge supply chain attack is a cautionary tale: attackers injected malicious Python payloads into AI tools hosted on platforms like GitHub and Hugging Face. This led to stolen data and ransomware infections in downstream systems. A strong AI Bill of Materials (AIBOM), combined with signed artifacts, could have made such an attack far harder to execute.
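The integrity check behind artifact signing can be sketched with the standard library alone. Note the substitution: this example uses an HMAC with a shared key as a stand-in, whereas real artifact signing normally relies on asymmetric signatures so that verifiers never hold the signing key. The key and artifact bytes are placeholders.

```python
import hashlib
import hmac


def sign_artifact(artifact: bytes, key: bytes) -> str:
    """Return an HMAC-SHA256 tag for the artifact (stand-in for a signature)."""
    return hmac.new(key, artifact, hashlib.sha256).hexdigest()


def verify_artifact(artifact: bytes, key: bytes, expected_tag: str) -> bool:
    """Check the tag using a constant-time comparison."""
    return hmac.compare_digest(sign_artifact(artifact, key), expected_tag)


signing_key = b"demo-key-from-a-secret-manager"  # placeholder, never hard-code
model_bytes = b"example model weights"

tag = sign_artifact(model_bytes, signing_key)
print(verify_artifact(model_bytes, signing_key, tag))
print(verify_artifact(model_bytes + b"tampered", signing_key, tag))
```

Refusing to load any model or build output whose tag fails verification turns a silent tampering risk into a visible deployment failure.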

All these measures align with the principles of Zero Trust Architecture. This framework assumes no user or system is inherently trustworthy and requires verification at every interaction, from data ingestion to model inference.

"AI must be Secure by Design. This means that manufacturers of AI systems must consider the security of the customers as a core business requirement, not just a technical feature." – CISA

To further protect your AI systems, pair secure infrastructure with strict access controls.

Identity and Access Management

Adopting the principle of least privilege is essential for protecting AI systems. Role-Based Access Control (RBAC) should extend beyond user logins to include model artifacts, training datasets, and audit logs. It’s critical to tightly regulate access to audit logs – only those with a legitimate need should have permission to view records of AI activity. For sensitive tasks, combine RBAC with Just-In-Time (JIT) access, which grants temporary, time-limited permissions. This approach minimizes the risk if credentials are compromised.

For service-to-service communication, mutual TLS (mTLS) with unique service identities ensures that only authenticated systems can interact with your AI models. Avoid embedding API keys or tokens directly into code. Instead, rely on a Hardware Security Module (HSM) or a centralized secret management system to rotate credentials without requiring a full system redeployment.

The rise of AI-powered deepfake impersonation has made phishing-resistant multi-factor authentication (MFA) a necessity. In one 2024 incident, a British engineering firm lost roughly US$25 million after an employee was deceived by a deepfake video call impersonating its CFO. MFA is no longer optional – it’s a baseline requirement.

Beyond access controls, ensuring data integrity is a cornerstone of secure AI systems.

Data Governance and Validation

Even the most secure infrastructure and access controls can’t protect your AI system if the data itself is compromised. Effective data governance safeguards against vulnerabilities like data poisoning and ensures the accuracy of the inputs driving your AI.

One of the best defences against data poisoning is data provenance tracking. This involves keeping a clear, auditable record of where your data comes from and how it has been processed. Without this, manipulations can slip through unnoticed until it’s too late. Alongside provenance tracking, implement data minimization – collect only the personal information absolutely necessary for the AI’s purpose.

To further protect your system, sanitize user inputs. For example, enforce strict character limits (e.g., 300 characters) to reduce the risk of prompt injection or jailbreaking attacks. Automated tools can also help by redacting sensitive information, profanity, or potential threats before they reach your AI service.
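A basic input gate along these lines might look like the sketch below. It assumes the 300-character limit from the example above, a simple email pattern as the only redaction rule, and a hypothetical `sanitize_prompt` helper; a length cap and redaction reduce exposure but do not stop prompt injection on their own.

```python
import re

MAX_PROMPT_CHARS = 300  # limit taken from the example above

# Simplified email pattern used as the single redaction rule in this sketch.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Control characters other than tab, newline, and carriage return.
CONTROL_RE = re.compile(r"[\x00-\x08\x0b\x0c\x0e-\x1f\x7f]")


def sanitize_prompt(raw: str) -> str:
    """Reject over-long input, strip control characters, and redact emails."""
    if len(raw) > MAX_PROMPT_CHARS:
        raise ValueError(f"prompt exceeds {MAX_PROMPT_CHARS} characters")
    cleaned = CONTROL_RE.sub("", raw)
    return EMAIL_RE.sub("[REDACTED_EMAIL]", cleaned).strip()


print(sanitize_prompt("Contact me at jane.doe@example.com please"))
```

Rejecting over-long input outright, rather than truncating it, avoids silently changing what the user asked.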

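For organizations in Canada, data governance must comply with the privacy law that applies to them: PIPEDA and provincial statutes such as Quebec’s Law 25 for private-sector organizations, and the federal Privacy Act for federal institutions. The proposed Artificial Intelligence and Data Act (AIDA) was never enacted, because Bill C-27 died on the order paper when Parliament was prorogued in January 2025, so it currently imposes no obligations. Before deploying AI systems that handle personal data, federal institutions should consult the Treasury Board Secretariat’s Privacy Checklist to determine whether a Privacy Impact Assessment (PIA) is required. This isn’t just about meeting legal requirements – it’s a practical way to identify and address risks before they escalate into major issues.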

Monitoring and Improving AI Systems Over Time

AI systems are not like traditional software – they evolve. A model that works well today might falter tomorrow, not because of code changes, but due to its probabilistic nature. Silent updates, shifting retrieval-augmented generation (RAG) knowledge bases, and evolving threats make one-time assessments insufficient. To address these challenges, continuous testing and retraining are essential to combat model drift effectively.

Adversarial Testing and Red-Teaming

Adversarial testing isn’t just about throwing a few test prompts at a system. The most effective method follows a "Probe-Measure-Harden" cycle. This involves systematically attacking the system, measuring results using metrics like the Attack Success Rate (ASR), and then strengthening defences.

For example, multi-turn attacks, where harmful input is introduced gradually, can achieve a jailbreak success rate of up to 97% within five turns. Single-turn testing often misses these nuanced approaches, making it vital to evaluate every interaction. A failure at turn five, even if the system recovers by turn eight, still counts as a breach.
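The scoring rule described above, where a single failed turn marks the whole conversation as breached, can be sketched in a few lines. The per-turn flags here are made-up data; in practice they would come from a judge model or human review.

```python
def conversation_breached(turn_flags: list[bool]) -> bool:
    """A conversation counts as breached if any turn produced harmful output."""
    return any(turn_flags)


def attack_success_rate(conversations: list[list[bool]]) -> float:
    """Fraction of attack conversations with at least one breached turn."""
    if not conversations:
        return 0.0
    breached = sum(conversation_breached(flags) for flags in conversations)
    return breached / len(conversations)


# Each inner list holds one flag per turn (True = harmful output at that turn).
results = [
    [False, False, False, False, True, False, False, False],  # fails at turn five
    [False, False, False],
    [True],
    [False, False],
]
asr = attack_success_rate(results)
print(f"ASR: {asr:.0%}")
```

The first conversation still counts as a breach even though the system recovers after turn five.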

"A 0% rate usually means your probes are too easy, not that your agent is perfectly safe." – Microsoft

Adversarial test datasets need regular updates – quarterly is recommended – since attack methods evolve quickly, and static datasets lose relevance. The urgency around this is evident in the AI red teaming services market, which is projected to grow from CA$1.43 billion in 2024 to CA$4.8 billion by 2029.

Automated Retraining and Safe Deployment

The insights gained from adversarial testing should feed directly into retraining efforts to keep models performing reliably. Behavioural drift is an ongoing concern, often caused by fine-tuning, prompt caching, or RAG updates. It’s crucial to set clear performance thresholds – such as drops in accuracy or bias triggers – that can automatically initiate retraining or flag a model for potential retirement.
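Threshold-based triggers of this kind can be expressed as a small decision function. The sketch below is illustrative only: the threshold values, the `Thresholds` and `decide` names, and the use of a per-group accuracy gap as the bias trigger are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    retrain_drop: float = 0.05   # accuracy drop that triggers retraining
    retire_drop: float = 0.15    # accuracy drop that flags possible retirement
    max_group_gap: float = 0.10  # accuracy gap between groups (bias trigger)


def decide(baseline_accuracy: float, current_accuracy: float,
           group_accuracy: dict[str, float],
           thresholds: Thresholds = Thresholds()) -> str:
    """Return "ok", "retrain", or "flag_for_retirement" for a monitored model."""
    drop = baseline_accuracy - current_accuracy
    gap = 0.0
    if group_accuracy:
        gap = max(group_accuracy.values()) - min(group_accuracy.values())
    if drop >= thresholds.retire_drop:
        return "flag_for_retirement"
    if drop >= thresholds.retrain_drop or gap >= thresholds.max_group_gap:
        return "retrain"
    return "ok"


print(decide(0.92, 0.91, {"group_a": 0.91, "group_b": 0.90}))
print(decide(0.92, 0.85, {"group_a": 0.86, "group_b": 0.84}))
print(decide(0.92, 0.70, {"group_a": 0.71, "group_b": 0.69}))
```

Keeping the thresholds in one versioned object makes it easier to review and audit changes to what triggers retraining.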

When deploying updates, use methods like blue/green or canary releases, and include Human-in-the-Loop checkpoints. This approach reduces the risk of regression, where a new model version might accidentally reintroduce a previously fixed vulnerability. Routine updates can sometimes mask these risks, making careful oversight essential.

Runtime Monitoring and Compliance Readiness

Continuous runtime monitoring serves as the bridge between pre-deployment controls and post-deployment oversight. A strong strategy combines automated anomaly detection, user feedback, and periodic manual reviews. Key metrics to monitor include model drift, false positive/negative rates across demographic groups, security alerts for prompt injection patterns, and reports of hallucinations or unexpected outputs.

To manage alert fatigue, implement tiered alerting. Critical issues should trigger immediate alerts, high-severity findings warrant same-day notifications, and lower-priority concerns can be included in a weekly digest. Logging all anomalies and near-misses ensures precise root-cause analysis.
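Tiered alerting largely comes down to a routing table. The sketch below assumes four severity levels and hypothetical channel names; every alert is also written to an audit log so that lower-priority items remain available for root-cause analysis.

```python
from enum import Enum


class Severity(Enum):
    CRITICAL = "critical"
    HIGH = "high"
    MEDIUM = "medium"
    LOW = "low"


# Hypothetical channel names; anything not listed goes to the weekly digest.
CHANNEL_BY_SEVERITY = {
    Severity.CRITICAL: "immediate_page",
    Severity.HIGH: "same_day_notification",
}


def route(severity: Severity) -> str:
    """Pick the delivery channel for an alert of the given severity."""
    return CHANNEL_BY_SEVERITY.get(severity, "weekly_digest")


def dispatch(alerts: list[tuple[Severity, str]]) -> tuple[dict[str, list[str]], list[str]]:
    """Group alert messages by channel and log every alert for later analysis."""
    queues: dict[str, list[str]] = {
        "immediate_page": [], "same_day_notification": [], "weekly_digest": [],
    }
    audit_log: list[str] = []
    for severity, message in alerts:
        queues[route(severity)].append(message)
        audit_log.append(f"{severity.value}: {message}")
    return queues, audit_log


queues, audit_log = dispatch([
    (Severity.CRITICAL, "prompt injection pattern detected"),
    (Severity.HIGH, "false positive rate diverging across groups"),
    (Severity.LOW, "single hallucination report"),
])
```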

In Canada, rigorous runtime monitoring aligns with the expectations set out by ISED, and it also helps organizations with European exposure prepare for the EU AI Act. The ISED Implementation Guide (March 2025) highlights a lifecycle approach that includes post-deployment oversight. Meanwhile, the EU AI Act, whose obligations for high-risk AI systems are scheduled to apply from 2 August 2026, mandates adversarial testing and thorough risk management documentation for those systems. Maintaining an up-to-date AI Bill of Materials (AIBOM) supports both by providing a clear view of all models, datasets, and dependencies in your system.

Conclusion

AI vulnerability management isn’t a one-and-done task – it’s an ongoing effort. With the threat landscape constantly shifting, traditional security tools often fall short when it comes to addressing the unique risks AI systems bring to the table.

Key Takeaways

Let’s recap the essential principles from earlier.

Managing AI vulnerabilities effectively means treating security as a continuous process, not just a pre-launch formality. Every phase counts – from identifying models and datasets to real-time monitoring and retraining.

Risk-based prioritization is key. Instead of chasing every vulnerability, focus on those with the most significant exploitability and business impact. Automated workflows are helpful, but human oversight remains critical, especially when AI decisions have high stakes. Achieving visibility across your entire AI ecosystem, including shadow AI, is crucial to eliminating blind spots that attackers could exploit.

Consider this: in 2024, over 40,000 CVEs were reported – a 38% increase from the previous year. Meanwhile, ransomware attacks surged nearly 150% in early 2025, fuelled by AI-generated phishing schemes and polymorphic malware.

"Moving from reactive patching to a structured vulnerability management lifecycle transforms security from tactical firefighting to strategic resilience engineering." – Palo Alto Networks

Practical steps include maintaining an up-to-date inventory of AI components, enforcing least-privilege access, sanitizing inputs and outputs, and aligning your efforts with Canadian frameworks such as the CCCS "Top 10 AI Security Actions" and the Voluntary Code of Conduct.

These foundational elements complete the lifecycle approach we’ve explored.

How Digital Fractal Technologies Inc Can Help

Digital Fractal Technologies Inc provides solutions tailored to every phase of the AI lifecycle. Their expertise can help you design secure AI systems, implement inventory and supply chain safeguards, and set up automated monitoring to detect model drift and performance issues – ensuring your systems stay within acceptable risk limits.

Whether you operate in the public sector, energy, or construction, Digital Fractal’s custom AI consulting and development services are crafted to fit your unique needs. They go beyond generic templates, embedding human review controls into automated workflows and aligning your governance practices with standards like ISO/IEC 42001:2023 and the NIST AI Risk Management Framework. Their technical expertise and understanding of Canadian regulations can strengthen your AI security program.

FAQs

What should an AI Bill of Materials (AIBOM) include?

An AI Bill of Materials (AIBOM) is essentially an inventory of all the components involved in creating and deploying an AI model. This includes everything from the model itself to training data, dependencies, third-party tools, plugins, and APIs.

By documenting the origins, versions, and security status of these components, an AIBOM helps pinpoint potential risks. For example, it can reveal vulnerabilities like malicious dependencies or even data poisoning. Keeping an AIBOM current ensures better transparency and makes it easier to securely manage the AI supply chain. This approach aligns well with established practices for handling vulnerabilities effectively.

How can we detect prompt injection and model drift in production?

Prompt injection can be addressed by implementing multiple layers of protection. Key strategies include:

- Input validation: Scrutinize and sanitize user inputs to prevent malicious data from being processed.

- Egress restrictions: Limit what the system can send to external destinations, so that a successful injection cannot exfiltrate data or trigger unapproved outbound calls.

- Monitoring for unusual behaviour: Keep an eye out for any anomalies or unexpected actions that could signal an injection attempt.

Identifying Model Drift

Model drift occurs when an AI system’s performance starts to deviate from its original behaviour or accuracy. To detect this, it’s essential to:

- Track performance continuously: Regularly evaluate how the model performs in its production environment.

- Monitor behaviour: Look for changes in how the model interacts with inputs and outputs over time.

Staying vigilant through regular monitoring helps ensure that AI systems remain reliable and secure in their operations.

What’s the first step to align AI vulnerability management with Canadian privacy laws?

To start, identify which Canadian privacy laws apply to your AI systems, such as PIPEDA or Quebec’s Law 25, and where those systems handle personal information. AI systems must be built with privacy, security, and ethical usage as core principles. Adopting an established framework like the NIST AI Risk Management Framework can guide you in meeting compliance requirements while ensuring responsible and ethical deployment.