Real-Time AI for Cloud Cost Optimization

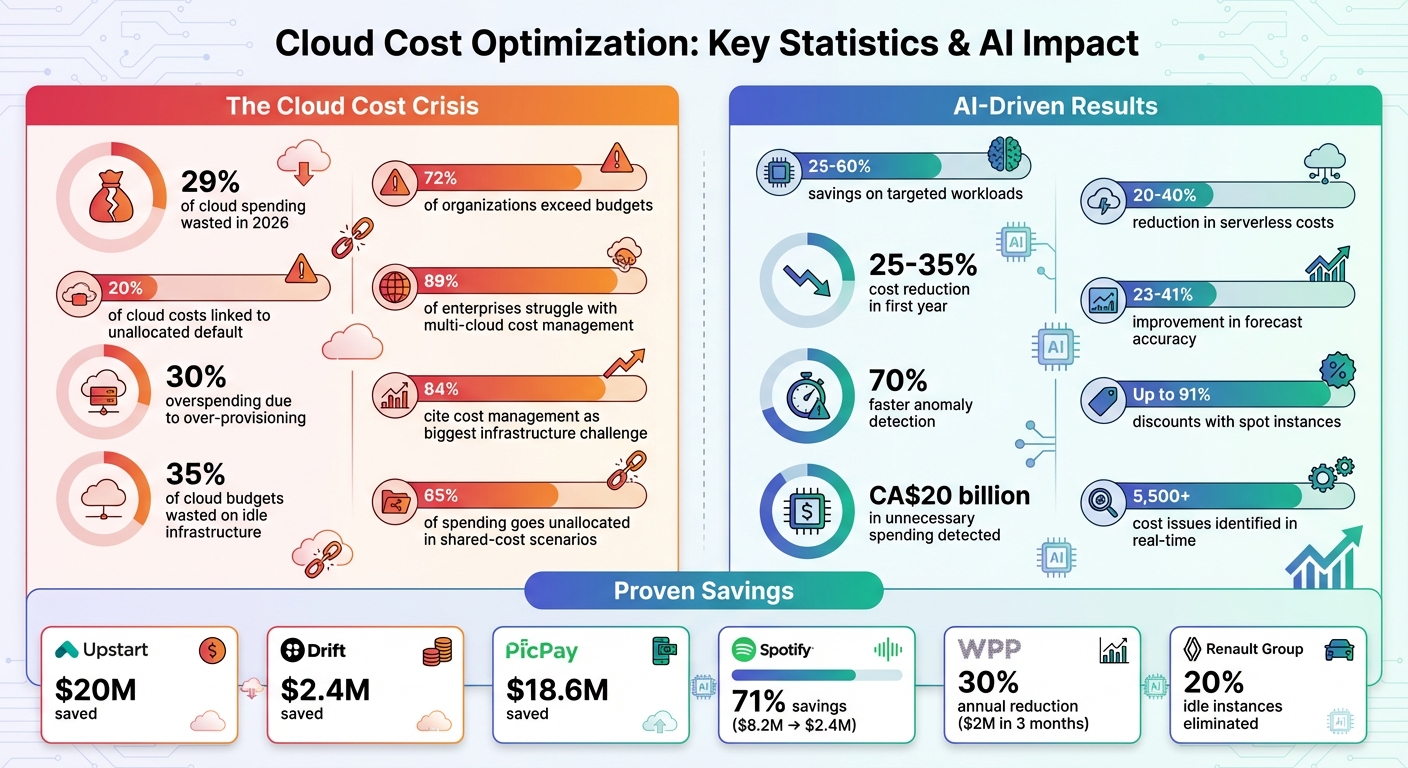

Cloud costs are spiralling, with 29% of spending wasted in 2026. AI workloads, requiring expensive GPUs and complex billing, are a major driver. Traditional tools can’t keep up, leaving 72% of organizations exceeding budgets. Real-time AI offers a solution by monitoring expenses continuously, detecting anomalies, and automating cost-saving actions. Businesses using these systems report savings of 25%–60% on targeted workloads.

Key challenges include:

- Over-Provisioning: Engineers often allocate excess resources, leading to 30% overspending.

- Unpredictable Spikes: AI-specific issues like token surges can double costs overnight.

- Multi-Cloud Complexity: 89% of enterprises now run multi-cloud setups, which makes costs hard to manage across providers like AWS and Azure.

Real-time AI addresses these by:

- Monitoring in Real-Time: Unified dashboards show spending drivers.

- Anomaly Detection: Alerts flag unusual costs for immediate action.

- Automated Adjustments: AI rightsizes resources and manages idle workloads.

Digital Fractal Technologies specializes in creating custom AI tools for cloud optimization, providing tailored solutions for industries like energy and public services. Their approach follows a four-phase roadmap: gain visibility, analyse data, deploy AI-driven strategies, and establish predictive controls for sustained savings.

Cloud Cost Optimization Statistics: AI-Driven Savings and Challenges



Common Cloud Cost Management Challenges

Keeping cloud costs under control is about much more than just monitoring your monthly bill. It’s about figuring out why expenses keep climbing, even when you think you’re being cautious. As AI workloads grow, three key challenges are becoming harder to avoid.

Over-Provisioning and Underutilization

Engineers often build web applications with extra capacity to ensure smooth performance, but these safety buffers can lead to massive waste. Leon Kuperman, Co-founder and CTO at Cast AI, explains:

"It’s not uncommon for engineers to buy more capacity than necessary just to sleep well at night."

In Kubernetes environments, developers often assign CPU and memory requests with large margins to handle peak loads. While this approach may seem practical for individual applications, it leads to significant system-wide inefficiencies. On average, this kind of over-provisioning results in 30% overspending.
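As a rough illustration of how that margin can be measured, the sketch below compares requested CPU with observed 95th-percentile usage. The `Workload` type, the workload names, and all figures are invented for the example and do not come from any real cluster.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    cpu_requested: float  # cores requested in the pod spec (illustrative)
    cpu_used_p95: float   # 95th-percentile cores actually consumed


def unused_request_share(workloads: list[Workload]) -> float:
    """Return the fraction of requested CPU left unused at the 95th percentile."""
    requested = sum(w.cpu_requested for w in workloads)
    if requested == 0:
        return 0.0
    # Cap usage at the request so one busy workload cannot hide waste elsewhere.
    used = sum(min(w.cpu_used_p95, w.cpu_requested) for w in workloads)
    return (requested - used) / requested


# Hypothetical workloads and numbers.
workloads = [
    Workload("checkout-api", cpu_requested=4.0, cpu_used_p95=2.5),
    Workload("search", cpu_requested=8.0, cpu_used_p95=6.0),
    Workload("batch-export", cpu_requested=2.0, cpu_used_p95=1.3),
]
share = unused_request_share(workloads)
print(f"Unused requested CPU: {share:.0%}")
```

Summing across workloads rather than averaging per-workload ratios keeps large services from being outweighed by small ones.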

The rise of AI workloads has made this problem worse. GPUs, for example, continue to rack up costs even when they’re sitting idle between jobs. Then there are hidden costs – like orphaned storage volumes, unused load balancers, and abandoned development clusters – that quietly drain budgets month after month.

While over-provisioning wastes resources, unpredictable usage spikes create a different kind of headache.

Unpredictable Cloud Usage Spikes

Cost spikes don’t just throw off budgets – they create panic. Unlike traditional software, where costs grow in line with user numbers, AI costs are much harder to predict. A small tweak, like changing a prompt or migrating a model, can send costs soaring without adding any extra value for users.

These spikes can come from many sources. Technical errors – such as leaving debug logs active, delayed autoscaling, or retry storms – can cause spending to spiral out of control. AI-specific issues, like token surges from oversized prompts or recursive agent calls that multiply API requests, add another layer of complexity.

The real challenge is the lack of real-time visibility. Cloud infrastructure works in milliseconds, but billing systems often lag behind, updating hours or even days later. By the time a spike is detected, the damage is already done. Traditional FinOps methods, like monthly forecasts and manual reviews, simply can’t keep up with the speed of today’s cloud environments. And distinguishing between legitimate growth and wasteful anomalies is nearly impossible without AI tools.

This issue becomes even more complicated when managing costs across multiple cloud providers.

Limited Visibility Across Multi-Cloud Environments

With 89% of enterprises now using multi-cloud setups, managing costs across platforms like AWS, Azure, and Google Cloud has become a logistical nightmare. Each provider has its own way of presenting billing data – AWS Cost and Usage Reports look nothing like GCP BigQuery exports.

The problem goes beyond billing. Inconsistent tagging across platforms makes it tough to track resource ownership, leaving finance teams unable to hold departments accountable. For example, in multi-tenant Kubernetes clusters, it’s common for 20% of cloud costs to be linked to default namespaces with no clear owner. Shared costs like NAT gateways, cross-region data transfers, and Kubernetes control planes often lack clear accountability, leading to what some experts call "shared-cost chaos", where up to 65% of spending goes unallocated. Data transfer fees alone can make up 10% to 15% of total cloud spending.
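To make the unallocated-spend problem concrete, here is a minimal sketch that groups billing line items by an owner tag and reports each owner's share. The line-item shape, the `team` tag key, and the amounts are assumptions for illustration; real billing exports differ by provider.

```python
from collections import defaultdict


def allocation_shares(line_items, owner_tag="team"):
    """Group billing line items by owner tag and return each owner's share of spend."""
    totals = defaultdict(float)
    for item in line_items:
        # Missing or empty tags fall into a single "unallocated" bucket.
        owner = item.get("tags", {}).get(owner_tag) or "unallocated"
        totals[owner] += item["cost"]
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {owner: cost / grand_total for owner, cost in totals.items()}


# Hypothetical line items: shared services often arrive with no owner tag.
line_items = [
    {"service": "compute", "cost": 500.0, "tags": {"team": "payments"}},
    {"service": "compute", "cost": 300.0, "tags": {"team": "search"}},
    {"service": "nat-gateway", "cost": 120.0, "tags": {}},
    {"service": "data-transfer", "cost": 80.0},
]
shares = allocation_shares(line_items)
print(f"Unallocated share: {shares['unallocated']:.0%}")
```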

On top of that, managing discount commitments – like AWS Savings Plans, Azure Reservations, and GCP Committed Use Discounts – adds another layer of complexity. For 84% of organizations, cloud cost management is their biggest infrastructure challenge. Without unified visibility, effective cost control becomes almost impossible.

These challenges highlight why AI integration is becoming essential for real-time cost management across diverse cloud environments. Organizations seeking to navigate these complexities can benefit from expert AI insights to optimize their infrastructure.

How Real-Time AI Improves Cloud Cost Optimization

Real-time AI shifts cloud cost management away from delayed reviews and towards continuous cost control through custom workflow automation.

The common thread among challenges like over-provisioning, sudden cost spikes, and managing multiple cloud platforms is a lack of immediate visibility and context. Traditional tools often fall short because they rely on after-the-fact analysis, making it hard to distinguish between necessary growth and unnecessary waste.

Ben Johnson, Co-founder and CTO at Obsidian Security, sums it up perfectly:

"Every engineering decision is a cost decision."

Real-time AI brings these decisions to light as they happen. Instead of waiting for outdated reports, teams can now spot anomalies and fix costly mistakes before they snowball. For example, CloudZero has detected over 5,500 cost issues in real time, allowing organizations to take immediate action. Many companies using AI for optimization have cut serverless costs by 20% to 40% without sacrificing reliability.

Real-Time Monitoring and Unified Dashboards

AI-powered real-time monitoring pulls data from platforms like AWS, Azure, Google Cloud, and specialized tools such as Snowflake or Databricks into a single dashboard. This comprehensive view not only shows where money is being spent but also highlights the specific drivers behind those costs. Unlike traditional alerts that simply flag overspending, these dashboards provide the context needed to understand and address the problem.

This approach is often referred to as "Cost-Intelligent Observability". It combines telemetry, AI signals (like token counts), and financial data into one real-time interface. This is especially important for AI workloads, where costs can vary significantly based on factors like GPU usage or request volume. Real-time AI also enables automatic rightsizing, adjusting compute resources to match demand instead of relying on static, over-provisioned setups.

Unified dashboards set the foundation for tackling cost spikes through anomaly detection.

Anomaly Detection and Alerts

Real-time anomaly detection catches unusual spending patterns as they occur, giving teams the opportunity to intervene before the damage is done. Keith MacKenzie, Content Marketing Manager at CloudZero, explains:

"Scheduled reports reveal past spending. Real-time detection gives you a chance to stop it and change what happens next."

The savings can be massive. For instance, Upstart saved $20 million, Drift cut costs by $2.4 million, PicPay reduced cloud spending by $18.6 million, and Cribl achieved 70% faster anomaly detection with the RADEN AI agent.

Modern systems use a mix of simple alerts and advanced statistical models to account for seasonal trends, reducing false alarms. They also create a feedback loop, letting teams label anomalies as "true" or "planned", which helps refine future detection. For low-risk scenarios, automated "circuit breakers" can pause runaway jobs or suspend idle workloads after inactivity.
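One way such a seasonality-aware check could work is sketched below. It is not any vendor's actual method. It assumes day-of-week grouping as the seasonal baseline and uses the conventional modified z-score (median and median absolute deviation, with the usual 0.6745 constant and 3.5 threshold). The function names and spend figures are hypothetical.

```python
from statistics import median


def is_anomaly(history, value, threshold=3.5):
    """Flag `value` if it sits far above the median of comparable past days."""
    if len(history) < 4:
        return False  # too little data to judge
    med = median(history)
    mad = median([abs(x - med) for x in history])
    # Floor the scale at 1% of the median so a very flat history is not over-sensitive.
    scale = max(mad, 0.01 * abs(med))
    if scale == 0:
        return value > med
    return 0.6745 * (value - med) / scale > threshold


def check_spend(history_by_weekday, weekday, value, planned=False):
    """Compare today's spend with the same weekday's history; skip labelled planned events."""
    if planned:
        return False  # feedback loop: the team marked this spike as expected
    return is_anomaly(history_by_weekday.get(weekday, []), value)


# Hypothetical daily spend: weekdays run hotter than weekends.
history = {"Mon": [100, 104, 98, 102, 101], "Sun": [40, 42, 38, 41]}
print(check_spend(history, "Mon", 100))  # normal for a Monday
print(check_spend(history, "Sun", 100))  # same amount, unusual for a Sunday
```

Grouping by weekday is the simplest seasonal baseline. The same spend can be routine on one day and a genuine anomaly on another, which a single global threshold would miss.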

Granular Tagging for Cost Transparency

Tagging resources manually can be a tedious and error-prone task. AI-powered "Virtual Tags" (VTags) simplify this by automatically analysing metadata, labels, and namespaces. This turns technical data into actionable business metrics, such as cost per feature, per customer, or even per successful output.

Thalia Elie, FinOps Account Manager at CloudZero, advises:

"You don’t need perfect visibility to start getting value from AI cost tracking. But you do need to start."

A gradual approach works best: start with manual tagging for major projects, then use automated scripts for consistency, and finally aim to track over 90% of spending through AI-powered dashboards. AI can even apply tagging rules retroactively, offering insights into past spending for previously untagged resources.
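The idea of deriving tags from existing metadata can be sketched with a small ordered rule list. This is a hand-written stand-in, not how any commercial "Virtual Tags" feature is implemented. The rule patterns, tag names, and resource fields are all hypothetical.

```python
import re

# Ordered rules: the first predicate that matches decides the tag.
RULES = [
    (lambda r: r.get("namespace", "").startswith("ml-train"), "ai-training"),
    (lambda r: re.search(r"inference|serving", r.get("name", "")) is not None, "ai-inference"),
    (lambda r: r.get("labels", {}).get("env") == "dev", "development"),
]


def virtual_tag(resource):
    """Derive a business-level tag from resource metadata without touching the resource."""
    for matches, tag in RULES:
        if matches(resource):
            return tag
    return "unallocated"


# Hypothetical resources, as they might appear in historical billing rows.
resources = [
    {"name": "gpu-trainer-7", "namespace": "ml-train-eu"},
    {"name": "llm-inference-api", "namespace": "prod"},
    {"name": "web", "namespace": "default", "labels": {"env": "dev"}},
    {"name": "mystery-volume"},
]
for resource in resources:
    print(resource["name"], "->", virtual_tag(resource))
```

Because the rules run over metadata rather than modifying resources, the same function can be applied to past billing rows, which is what makes retroactive tagging possible.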

Erik Peterson, Founder and CTO at CloudZero, shifts the focus to value:

"The key question is no longer, ‘How much did we spend?’ It’s now: ‘Was it worth it?’"

By tagging AI models based on their stage – training, inference, or fine-tuning – teams can set spending benchmarks and quickly spot unexpected costs in specific phases. This level of precision is invaluable, especially as 44% of engineering professionals cite improving AI explainability as a top priority in their budgeting efforts.

These AI-driven tools address the core issues of over-provisioning, unpredictable cost spikes, and the complexity of managing multi-cloud environments. Together, they bring clarity and control to cloud cost management.

AI-Driven Cloud Optimization Techniques

AI doesn’t just monitor cloud usage; it actively transforms how resources are managed, scheduled, and allocated. These techniques take raw data from real-time monitoring and turn it into actionable cost-cutting strategies – all while keeping performance intact.

Intelligent Rightsizing

AI-powered rightsizing tools continuously assess CPU, memory, and I/O usage to recommend configurations that align with actual workload demands. Unlike static snapshots, these tools analyse at least 7–14 days of data, enough to capture patterns like weekly peaks; picking up seasonal trends requires a longer lookback window. This ensures recommendations are based on real-world usage.

To avoid degrading performance, these systems use multi-dimensional safety signals – such as latency and error rates – as safeguards. For example, AWS Compute Optimizer users have reported cost reductions of up to 25% by following its machine-learning-based suggestions.
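A simplified version of this logic might look like the sketch below: size to the 95th-percentile usage plus headroom, but refuse to downsize while latency or error rate breaches a limit. The function names, the 20% headroom, and the SLO thresholds are illustrative assumptions, not AWS Compute Optimizer's algorithm.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def recommend_cpu(current_cores, usage_samples, p95_latency_ms, error_rate,
                  headroom=0.2, latency_slo_ms=300.0, max_error_rate=0.01):
    """Suggest a core count; only ever recommends the current size or smaller."""
    # Safety signals: keep the current size if the service is already unhealthy.
    if p95_latency_ms > latency_slo_ms or error_rate > max_error_rate:
        return current_cores
    target = percentile(usage_samples, 95) * (1 + headroom)
    return min(current_cores, max(1, math.ceil(target)))


# Hypothetical CPU usage samples (cores) collected over the lookback window.
samples = [2.0, 2.4, 3.1, 2.8, 3.5, 2.2, 3.0, 2.9, 3.3, 2.6]
print(recommend_cpu(8, samples, p95_latency_ms=120, error_rate=0.001))
print(recommend_cpu(8, samples, p95_latency_ms=450, error_rate=0.001))
```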

Automation plays a big role here. AI agents can pause idle warehouses, resize oversized clusters, and eliminate "zombie" resources – those unused but still running – which account for 28–35% of cloud spending. Automating low-risk tasks, like shutting down non-production environments during off-hours, can slash costs in those areas by up to 70%.

Arman Aggarwal, Business Head at CloudKeeper, explains it well:

"AI is redefining what cloud optimisation looks like in practice. By combining real-time analytics, predictive intelligence, and automated action, AI turns cloud cost management into a strategic capability rather than a reactive task."

Predictive Demand Forecasting

Predictive demand forecasting shifts cloud management from reactive to proactive. By analysing historical trends and seasonal usage patterns, machine learning models can predict future resource needs. This enables predictive scaling, where resources are allocated just before a spike occurs, ensuring smooth performance without over-provisioning.

AI also identifies idle periods, scaling down non-production workloads when they’re not needed. Forecasting tools even allow "what-if" modelling, so businesses can simulate the financial impact of scenarios like doubling their user base before making changes.
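Production forecasting relies on trained models, but the shape of the idea fits in a few lines. The sketch below uses a seasonal-naive forecast (repeat last week, scaled by week-over-week growth) and a crude "what-if" that assumes a fixed share of spend scales with users. Both functions, the 60% variable share, and the spend history are assumptions for illustration.

```python
def forecast_next_week(daily_spend, season=7):
    """Seasonal-naive forecast: repeat the last week, scaled by week-over-week growth."""
    if len(daily_spend) < 2 * season:
        raise ValueError("need at least two full seasons of history")
    last = daily_spend[-season:]
    prior = daily_spend[-2 * season:-season]
    growth = sum(last) / sum(prior)
    return [day * growth for day in last]


def what_if(forecast, user_multiplier, variable_share=0.6):
    """Scale the part of spend assumed to grow with users; the rest stays fixed."""
    scale = (1 - variable_share) + variable_share * user_multiplier
    return [day * scale for day in forecast]


# Hypothetical history: two weeks, weekdays then a quieter weekend.
history = [100] * 5 + [60, 60] + [110] * 5 + [66, 66]
baseline = forecast_next_week(history)
doubled = what_if(baseline, user_multiplier=2.0)
print(f"Baseline week: {sum(baseline):.2f}")
print(f"If users double: {sum(doubled):.2f}")
```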

Take the Renault Group in 2025, for instance. Using Google Cloud’s Active Assist across 140 projects, they discovered nearly 20% of their Cloud SQL instances were idle. Acting on these insights saved them both money and engineering hours previously spent on manual cleanup. Similarly, advertising giant WPP saved $2 million in just three months by adopting AI-driven cloud governance and scaling strategies, eventually cutting their annual cloud costs by 30%.

Automated Workload Scheduling

AI doesn’t just predict demand – it adjusts workloads accordingly. By analysing traffic patterns, it can reschedule batch jobs to run during off-peak hours when cloud costs are lower. This approach, often called "load shaping", helps businesses get the most out of cost-effective cloud options.
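At its simplest, load shaping means choosing the cheapest window that still meets a deadline. The sketch below scans an hourly price curve for the lowest-cost contiguous slot. The function name and the price curve are made up; a real system would learn the curve from traffic and pricing data.

```python
def cheapest_start_hour(hourly_price, duration_hours, deadline_hour=24):
    """Return (start_hour, total_price) of the cheapest contiguous window before the deadline."""
    if duration_hours > deadline_hour:
        raise ValueError("job cannot finish before the deadline")
    best_start, best_cost = None, float("inf")
    for start in range(deadline_hour - duration_hours + 1):
        cost = sum(hourly_price[start:start + duration_hours])
        if cost < best_cost:  # strict comparison keeps the earliest of equal windows
            best_start, best_cost = start, cost
    return best_start, best_cost


# Hypothetical price per hour over one day, cheapest in the early morning.
prices = [0.40] * 2 + [0.25] * 4 + [0.55] * 12 + [0.45] * 6
start, cost = cheapest_start_hour(prices, duration_hours=3)
print(f"Start the batch job at hour {start} for a total of {cost:.2f}")
```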

Pinterest is a great example. They developed an internal machine learning system to monitor traffic and manage workloads. This system automated infrastructure scaling and instance resizing, saving them tens of millions of dollars annually on their AWS expenses.

AI also tackles idle resources, like unused virtual machines or orphaned storage, by shutting them down or deleting them automatically. The process can start small, with AI providing cost-saving recommendations, and gradually move toward full automation for stable, non-production workloads.

Spot Instance Management

Spot instances offer steep discounts – up to 91% compared to on-demand pricing. However, they come with a catch: the provider can reclaim them at short notice (two minutes on AWS). AI-driven systems address this by spreading "Spot Fleets" across multiple instance types and regions to keep capacity available.

Machine learning models also predict interruptions, enabling proactive migration or rescheduling. Checkpointing systems, which save progress every 10–30 minutes, allow tasks to resume seamlessly on new instances after a termination.
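The checkpoint-and-resume pattern can be sketched as follows. The job loop, the JSON checkpoint format, and the `interrupt_at` parameter (which simulates a reclaim) are all hypothetical. A real training pipeline would save model state and react to the provider's interruption notice instead.

```python
import json
import os
import tempfile


def save_checkpoint(path, step):
    """Write the checkpoint atomically so an interruption cannot leave a half-written file."""
    tmp_path = path + ".tmp"
    with open(tmp_path, "w") as f:
        json.dump({"step": step}, f)
    os.replace(tmp_path, path)


def run_job(total_steps, checkpoint_path, checkpoint_every, interrupt_at=None):
    """Run (or resume) a job and return the step reached when it stops."""
    step = 0
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            step = json.load(f)["step"]  # resume from the last saved step
    while step < total_steps:
        if interrupt_at is not None and step == interrupt_at:
            return step  # simulated reclaim: work since the last checkpoint is lost
        step += 1  # stand-in for one unit of real work
        if step % checkpoint_every == 0:
            save_checkpoint(checkpoint_path, step)
    return step


with tempfile.TemporaryDirectory() as workdir:
    ckpt = os.path.join(workdir, "job.json")
    reached = run_job(20, ckpt, checkpoint_every=5, interrupt_at=7)
    with open(ckpt) as f:
        saved = json.load(f)["step"]
    finished = run_job(20, ckpt, checkpoint_every=5)
    print(f"interrupted at step {reached}, resumed from {saved}, finished at {finished}")
```

The work lost per interruption is bounded by the checkpoint interval, so shorter intervals trade a little write overhead for less repeated work.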

Spotify provides a compelling case study. In January 2024, they restructured their recommendation engine training pipeline to rely on AWS Spot instances, reducing their annual machine learning infrastructure costs from $8.2 million to $2.4 million – a 71% savings. By implementing checkpoints every 5 minutes, they navigated the 2-minute termination warnings of p4d.24xlarge instances effectively.

To maximize savings, businesses often maintain a stable base of on-demand or reserved instances for critical tasks while using spot instances for burstable or non-critical workloads.

Storage Tiering Optimization

AI simplifies storage management by automatically aligning data placement with usage patterns. For instance, it can move infrequently accessed data to lower-cost storage tiers – like from hot to archive – without manual intervention. This approach is particularly useful for organizations that need to retain large amounts of historical data for compliance but rarely access it.

By analysing metadata and access frequency, AI identifies data that hasn’t been touched in weeks or months and migrates it to cheaper storage tiers. This eliminates the need for manual tracking and ensures that storage expenses align with actual usage.
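A rule-based version of this decision is sketched below. The tier names and the 30-day and 180-day thresholds are placeholders, not any provider's defaults. An AI-driven system would tune such thresholds from observed access patterns.

```python
from datetime import date


def choose_tier(last_accessed, today, cool_after_days=30, archive_after_days=180):
    """Pick a storage tier from how long an object has gone untouched."""
    idle_days = (today - last_accessed).days
    if idle_days >= archive_after_days:
        return "archive"
    if idle_days >= cool_after_days:
        return "cool"
    return "hot"


# Hypothetical objects and last-access dates.
today = date(2026, 1, 31)
objects = {
    "report-current.parquet": date(2026, 1, 20),
    "logs-december.gz": date(2025, 12, 1),
    "audit-archive.tar": date(2025, 1, 31),
}
for name, last_accessed in objects.items():
    print(name, "->", choose_tier(last_accessed, today))
```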

Summary of AI-Driven Techniques and Impact

| Technique | AI Function | Measurable Impact |
|---|---|---|
| Intelligent Rightsizing | Analyses CPU/RAM/IO patterns to suggest optimal instance sizes | Up to 25% savings on EC2/EBS |
| Predictive Scaling | Forecasts load to scale resources ahead of demand spikes | Reduces need for excessive idle capacity |
| Spot Management | Predicts interruption risk to safely use discounted instances | Significant savings over on-demand rates |
| Storage Tiering | Moves data between tiers based on access frequency | Reduces long-term storage expenses |
| Anomaly Detection | Flags spend deviations from historical norms in real-time | Prevents month-end billing surprises |

Digital Fractal Technologies’ AI Solutions for Cloud Optimization

Implementing AI-driven techniques for cloud optimization isn’t as simple as flipping a switch. It requires deep expertise in cloud architecture and custom software development. That’s where Digital Fractal Technologies, an Alberta-based company, steps in. They specialize in creating tailored AI solutions to cut cloud costs, especially in industries like energy, construction, and public services.

Their process kicks off with an AI Readiness Audit. This audit lays out a 6–12 month roadmap, identifying automation opportunities that promise the highest returns. Let’s explore how they bring these strategies to life with custom tools, workflow automation, and scalable industry solutions.

Custom AI Tools for Real-Time Cost Insights

Digital Fractal doesn’t just make dashboards – they create tools that think. Their philosophy is simple:

"Your mobile and web applications shouldn’t just display data – they should interpret, predict, and act."

Using predictive analytics, computer vision, and natural language processing, they turn raw cloud usage data into actionable insights.

Take Xtreme Oilfield as an example. Digital Fractal developed a mobile and web solution that transformed their trucking operations. According to Regg M. from Operations:

"Digital Fractal Technologies was contracted to digitally transform our trucking operations… [they] digitized our paper forms, automated certificate/permit management, computerized job dispatching, and brought timesheets, vehicle repair and communications to the field."

For another client – a vendor management company in the oil and gas sector – they created an app to track vendor equipment and personnel availability in real time. James M., CEO, shared:

"The app gives our clients real time data to what each vendor on their favourites list has at the ready on a 24 hour basis. Having the ability to book in real time or booking equipment into the future makes both operations seamless."

These tools not only enhance operational transparency but also help prevent unexpected cloud expenses.

Integration of Workflow Automation with Cloud Optimization

Digital Fractal takes the insights generated by their AI tools and uses them to streamline operations. They replace repetitive tasks like approvals, scheduling, and invoicing with automated processes that work around the clock. This approach allows businesses to scale without the need to hire more staff.

Their automation workflows are designed for quick implementation – often within 90 days. This speed is crucial because delays in addressing inefficiencies can lead to unnecessary cloud expenses.

One success story is their last-mile delivery system for Deeleeo, launched in October 2020. It included iOS and Android apps for drivers and a web-based admin backend. Jackson P., CEO, highlighted the importance of the team’s post-launch support, which ensured a smooth integration of these AI-driven systems into live cloud environments.

Scalable Solutions for Industry-Specific Needs

Digital Fractal doesn’t believe in one-size-fits-all solutions. They customize their tools to address the challenges specific to each industry, such as legacy systems, complex operations, and strict compliance requirements.

For Alberta’s energy sector or public institutions managing sensitive data, this localized expertise is a game-changer. By tailoring solutions to industry needs, Digital Fractal helps organizations optimize their cloud spending while meeting regulatory demands and operational complexities.

Whether it’s energy, construction, or public services, their solutions are designed to tackle the unique hurdles of each sector head-on.

Implementation Roadmap for Long-Term Cloud Cost Savings

To achieve lasting cloud cost efficiency, follow a four-phase roadmap: visibility, analysis, deployment, and cultural integration. By leveraging real-time AI insights, this structured approach sets the foundation for sustainable savings.

Phase 1: Gaining Visibility into Cloud Usage

Start by understanding where every dollar goes. Tag all resources – like compute instances, GPUs, AI models, endpoints, and even individual customers – to accurately link costs to their sources.

Move beyond just monitoring overall expenses. Track unit metrics such as cost per token, cost per inference, or cost per training epoch. If you’re managing a multi-cloud setup, tools like the FinOps Open Cost and Usage Specification (FOCUS) can help create a unified view across providers.
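Unit metrics are simple ratios once cost and usage share the same scope. The sketch below computes two of them; the function name and the monthly figures are invented for illustration.

```python
def unit_costs(total_cost, tokens, inferences):
    """Turn a period's spend into per-unit metrics for one AI service."""
    if tokens <= 0 or inferences <= 0:
        raise ValueError("tokens and inferences must be positive")
    return {
        "cost_per_1k_tokens": total_cost / tokens * 1000,
        "cost_per_inference": total_cost / inferences,
    }


# Hypothetical month: 1,200 in spend, 40M tokens, 150k inference requests.
metrics = unit_costs(total_cost=1200.0, tokens=40_000_000, inferences=150_000)
for name, value in metrics.items():
    print(f"{name}: {value:.4f}")
```

Tracking these ratios over time shows whether spend is growing because usage grew or because each unit became more expensive.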

Once you have a clear picture, you’re ready to dive into spending patterns in the next phase.

Phase 2: Analysing and Identifying Cost-Saving Opportunities

Now, it’s time to make sense of the data. Use machine learning to establish a "living baseline" that quickly detects unusual spending and forecasts future costs. Real-time anomaly detection is already paying off: CloudZero has identified over 5,500 cost issues this way, and such detection has reportedly saved more than CA$20 billion in cloud expenses.

Dig into unit economics – the cost per user or AI request – to reveal how your spending scales with usage and where inefficiencies lie. Predictive intelligence can also help you gauge the financial impact of system changes before they’re implemented.

With insights in hand, move on to applying optimizations.

Phase 3: Deploying AI-Driven Optimizations

This phase focuses on action. Use AI to automate tasks like auto-scaling, scheduling batch jobs during low-cost periods, and deploying spot instances for non-critical workloads. Adjust resources dynamically, cutting excess capacity based on real-time usage. Many organizations that adopt AI-driven rightsizing see infrastructure costs drop by 30% or more.

For example, predictive autoscaling adjusts resources ahead of expected demand spikes, relying on historical data instead of reacting to current loads. Recommendation engines surface waste in a similar way: Renault Group used Google Cloud’s Active Assist across 140 projects and found that nearly 20% of its Cloud SQL database instances were idle. By acting on these AI recommendations, the company eliminated waste without needing custom scripts.

After deploying these strategies, focus on creating a framework for lasting cost control.

Phase 4: Establishing Predictive Controls and Cost Culture

To make savings stick, implement policies like automated approvals, guardrails, and "circuit breakers" to stop runaway jobs. Build a cost-conscious culture by bringing together FinOps, MLOps, SRE, finance, product, and data science teams to share responsibility for cloud expenses.
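A cost "circuit breaker" can be as small as a running total with a hard stop. The class below is a minimal sketch with a hypothetical per-job budget. A production guardrail would also notify owners and actually pause or cancel the job.

```python
from dataclasses import dataclass


@dataclass
class CostCircuitBreaker:
    budget: float          # hard spending limit for one job (illustrative)
    spent: float = 0.0
    tripped: bool = False

    def record(self, cost: float) -> bool:
        """Add a cost increment; return True while the job may keep running."""
        self.spent += cost
        if self.spent >= self.budget:
            self.tripped = True  # stays open until a human resets it
        return not self.tripped


# Hypothetical job that reports its spend in increments of 40.
breaker = CostCircuitBreaker(budget=100.0)
results = [breaker.record(40.0) for _ in range(3)]
print(results, breaker.spent)
```

Keeping the breaker tripped until someone resets it is a deliberate choice: a runaway job should not restart itself just because the next increment happens to be small.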

Treat cost management as an ongoing process, not a one-time effort. Start with a "showback" model to provide visibility into usage without direct billing. Once teams understand their consumption, transition to a "chargeback" model where they are billed for actual usage. This shift makes cloud costs tangible, encouraging teams to take ownership and drive smarter decisions.
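Both models need a rule for splitting shared costs. One common choice, sketched here, is to allocate them in proportion to each team's direct spend. The team names and amounts are hypothetical, and other allocation keys (requests served, headcount) are equally valid.

```python
def showback(direct_costs, shared_cost):
    """Split a shared cost across teams in proportion to their direct spend."""
    total_direct = sum(direct_costs.values())
    if total_direct <= 0:
        raise ValueError("direct costs must sum to a positive amount")
    return {
        team: cost + shared_cost * cost / total_direct
        for team, cost in direct_costs.items()
    }


# Hypothetical month: three teams plus 200 of shared networking and control-plane cost.
direct = {"search": 600.0, "payments": 300.0, "ml": 100.0}
report = showback(direct, shared_cost=200.0)
for team, cost in report.items():
    print(f"{team}: {cost:.2f}")
```

The same report serves showback when it is only published and chargeback when it is actually billed to each team.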

| Implementation Phase | Key AI Capability | Primary Benefit |
|---|---|---|
| Phase 1: Visibility | Semantic cost mapping | Linking every token/GPU hour to a business metric |
| Phase 2: Analysis | Pattern recognition | Detecting over-provisioned workloads across large datasets |
| Phase 3: Deployment | Autonomous optimization | Auto-pausing idle resources and rightsizing clusters |
| Phase 4: Culture | Predictive forecasting | Enabling accurate budgets and proactive cost control |

Conclusion

Managing cloud costs has moved far beyond static spreadsheets and periodic reviews. Thanks to real-time AI, organizations can now tackle challenges like over-provisioning, unexpected usage spikes, and the complexities of multi-cloud environments with autonomous, ongoing adjustments. AI agents can quickly identify and resolve issues such as idle GPU fleets, orphaned storage, and inefficient workloads, eliminating waste before it accumulates.

On average, 35% of cloud budgets are wasted on idle infrastructure. AI-driven solutions, however, can reduce costs by 25–35% within the first year. Real-time anomaly detection has already uncovered over CA$20 billion in unnecessary cloud spending across enterprises, and forecast accuracy improves by 23–41% when AI-enabled FinOps replaces traditional methods. These figures highlight the pressing need for a more strategic approach to cloud cost management.

The rise of agentic FinOps – where AI systems can perceive, reason, act, and learn – marks a turning point. This self-regulating infrastructure optimizes performance, ensures compliance, and controls spending without requiring constant human oversight. It’s not just about saving money; it allows engineering teams to focus on innovation while AI handles the day-to-day cost management.

Adopting this proactive strategy reduces expenses and empowers teams to innovate. Digital Fractal Technologies Inc brings these ideas to life with custom AI solutions tailored to your cloud environment and industry. Whether you’re managing multi-cloud setups, optimizing AI workload costs, or automating workflows alongside cloud optimization, their expertise in AI consulting and digital transformation ensures you can achieve measurable results. Their approach follows a four-phase roadmap – from visibility to predictive controls – giving you the tools and confidence to transform your cloud operations.

FAQs

What’s the fastest way to start real-time cloud cost visibility?

The fastest way to gain real-time insight into cloud costs is by using anomaly detection tools that constantly track spending. These tools can spot unusual patterns within minutes, allowing teams to take immediate action. This approach not only helps manage costs more effectively but also avoids wasting resources.

How can AI separate real cost spikes from normal usage growth?

AI pinpoints cost surges by examining usage data in real time through a combination of rules, statistical models, and machine learning. It identifies irregular spending patterns and separates them from expected growth, enabling quick action to manage expenses before they spiral out of control.

Which workloads are safest to automate for cost savings first?

The best workloads to automate for saving on cloud costs are those that are predictable, non-critical, and offer clear opportunities for fine-tuning. For instance, idle containers, underused GPU fleets, and over-provisioned clusters are common culprits of unnecessary spending. These can often be resized or even shut down without affecting essential operations.

Other promising candidates include serverless functions and data warehouses. Their dynamic nature allows for easier adjustments, letting you optimize costs while still delivering strong performance.