Ultimate Guide to AI Testing for Web Apps | Digital Fractal

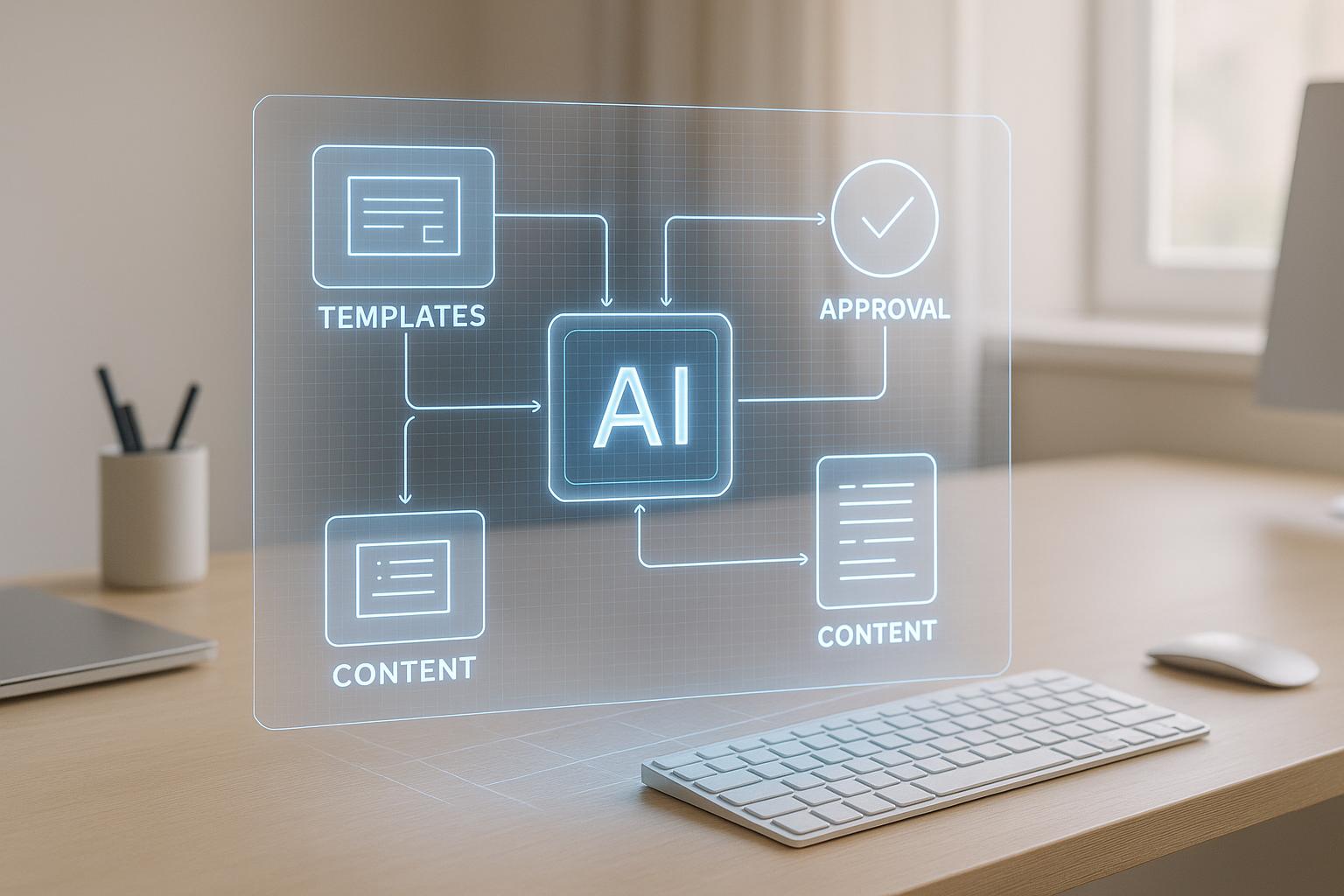

AI testing is transforming how web apps are tested, offering faster execution, reduced maintenance, and smarter defect detection. Unlike manual or traditional automated testing, AI tools use machine learning to create, update, and analyse tests dynamically. This means fewer flaky tests, quicker test cycles, and improved coverage across devices and browsers. Key features include self-healing tests, visual regression analysis, and root cause detection, all designed to save time and resources.

Key Takeaways:

- Self-healing tests: Automatically adjust to UI changes, reducing maintenance.

- Visual regression testing: Detects subtle UI issues using computer vision.

- Faster test cycles: Parallel execution cuts testing time significantly.

- Improved defect detection: AI finds edge cases and integration issues often missed manually.

- Reduced flakiness: Analyses test history to identify and fix unstable tests.

AI testing integrates seamlessly with CI/CD pipelines, supports modern frameworks like React and Angular, and simplifies workflows for QA teams. For Canadian businesses, this approach reduces costs, accelerates release cycles, and ensures higher quality web applications. By combining AI tools with human oversight, teams can achieve reliable results and measurable ROI within months.

Core Capabilities of AI Testing for Web Apps

Building on the speed and adaptability of AI testing, these key features further simplify and enhance quality assurance for web applications.

Automated Test Case Generation and Maintenance

AI tools can scan your web app and generate detailed test cases from simple prompts, covering everything from standard user journeys to edge cases and integration points. For example, a prompt like "Create test cases for a checkout flow that includes guest users, logged-in users, discount codes, and multiple payment methods" can quickly produce a comprehensive set of tests with minimal manual input.

These tools also maintain test cases over time by using smart locators. Instead of relying on a single static selector, they identify each UI element by several attributes at once, so tests keep working even when part of the UI changes. This allows development teams to spend more time analysing test results rather than constantly updating test scripts. Beyond functional testing, AI also brings improvements to visual validation, which is discussed next.

Visual Regression Testing and UI Validation

AI-powered visual regression testing uses advanced computer vision to ensure your web app’s interface looks as it should while automatically catching visual inconsistencies. Unlike basic screenshot comparisons that can flag false positives, these tools employ algorithms that mimic human vision to identify meaningful differences. For instance, Applitools uses a visual AI engine to detect layout shifts, subtle colour changes, and other issues, while filtering out harmless variations that don’t qualify as defects.

These visual tests can run simultaneously across multiple browsers and devices, ensuring a consistent user experience everywhere. Some tools also support multi-baseline A/B variant testing, which allows teams to store multiple reference images for a single test step. This means legitimate UI differences, like those from A/B testing, don’t trigger unnecessary test failures. AI-based image locators further enhance this process by identifying UI elements based on their visual traits, which is especially helpful for testing embedded content or components that lack traditional selectors. But finding visual issues is only part of the equation; AI also digs deeper to address test reliability problems.

Root Cause Analysis and Flakiness Detection

Flaky tests – those that fail or pass unpredictably – can disrupt workflows and erode trust in your test suite. AI tackles this problem by analysing test execution histories to pinpoint patterns of instability and calculate a flakiness score. Failures are automatically categorized (e.g., locator problems, data validation errors, API issues, or environmental factors), providing teams with actionable insights. This detailed analysis helps prioritize fixes and fine-tune testing environments, leading to more reliable tests, faster release cycles, and better overall application quality.
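To make the idea of a flakiness score concrete, here is a minimal sketch. It assumes one simple definition, the share of consecutive runs on unchanged code whose outcome flipped; commercial tools do not publish a single standard formula, so the function name, the sample test names, and the 0.2 threshold are all illustrative assumptions.

```python
from typing import Dict, List, Sequence


def flakiness_score(history: Sequence[bool]) -> float:
    """Fraction of consecutive runs whose outcome flipped.

    history holds one entry per run on unchanged code
    (True = pass, False = fail). 0.0 means fully stable,
    1.0 means the result changed on every run.
    This "flip rate" is an assumed definition, not a vendor formula.
    """
    if len(history) < 2:
        return 0.0
    flips = sum(1 for prev, cur in zip(history, history[1:]) if prev != cur)
    return flips / (len(history) - 1)


# Hypothetical run histories for three tests.
runs: Dict[str, List[bool]] = {
    "test_login": [True, True, True, True, True],
    "test_checkout": [True, False, True, True, False],
    "test_search": [False, False, False, False, False],
}

scores = {name: flakiness_score(history) for name, history in runs.items()}

# Tests above an (assumed) 0.2 threshold, most unstable first.
flaky = sorted((n for n, s in scores.items() if s > 0.2), key=lambda n: -scores[n])
print(scores)
print(flaky)
```

Note that a test that always fails scores 0.0 here: it is broken, not flaky, and belongs in a different triage queue.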

Implementing AI Testing in Your Web Development Workflow

Adding AI testing to your web development process requires thoughtful planning and a step-by-step approach. It’s not something you can implement overnight, but with the right strategy, your team can adopt these tools effectively.

Preparing Your Infrastructure for AI Testing

Before diving into AI testing, make sure your technical setup is ready. Check that your web applications are built on supported technology stacks. Most AI testing platforms work seamlessly with modern frameworks like React, Angular, and Vue.js, as well as traditional web technologies. Also, ensure your infrastructure – whether cloud-based or on-premises – has the resources to handle simultaneous tests across multiple browsers and devices.

Reliable internet connectivity is essential, especially if you’re using cloud-based AI testing services. Evaluate your network’s bandwidth and stability to ensure smooth operations. Additionally, verify that your version control system, like Git or SVN, integrates smoothly with your development environment. This integration is key for automating workflows.

An AI Readiness Audit can help pinpoint areas needing improvement. For example, Digital Fractal Technologies offers audits that assess your current tools, processes, and data. They can identify gaps, such as missing API testing capabilities or insufficient mobile testing support, and provide a clear roadmap for the next 6–12 months. This roadmap outlines where automation and intelligent systems can save time and resources.

Finally, set up a consistent testing environment. Use standard browser versions, device configurations, and test data repositories. This consistency helps AI tools learn patterns effectively and deliver reliable results.

Integrating AI Tools with CI/CD Pipelines

To integrate AI testing into your continuous integration and delivery (CI/CD) pipelines, take a phased approach. Start by selecting tools with built-in CI/CD compatibility. Platforms like Tricentis Testim and Functionize offer direct integrations with systems such as Jenkins, GitLab CI, GitHub Actions, and Azure DevOps.

Here’s how to get started:

- Install the tool’s plugin or agent in your CI/CD environment.

- Store API keys and other credentials in your CI/CD platform’s secret store or in environment variables instead of hardcoding them into scripts.

- Define triggers for running tests, such as on every commit, with pull requests, or at scheduled intervals.

- Enable parallel execution across browsers and devices to shorten test cycle times.
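As an illustration of the credentials step above, the following sketch reads an API key from an environment variable that the CI system would inject from its secret store. The variable name `AI_TEST_API_KEY` and the bearer-token header format are assumptions for the example; check your tool's documentation for the names and scheme it actually expects.

```python
import os
from typing import Dict


def load_api_key(var_name: str = "AI_TEST_API_KEY") -> str:
    """Read the key from the environment; fail fast if it is missing.

    The variable name is hypothetical. The point is that the secret
    lives in the CI secret store, never in the repository.
    """
    key = os.environ.get(var_name, "").strip()
    if not key:
        raise RuntimeError(
            f"Set the {var_name} environment variable in your CI secret store"
        )
    return key


def auth_headers(api_key: str) -> Dict[str, str]:
    """Build request headers (bearer scheme assumed for illustration)."""
    return {"Authorization": f"Bearer {api_key}"}
```

Failing fast with a clear message keeps a misconfigured pipeline from running tests unauthenticated and reporting confusing errors later.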

Set up your pipeline to automatically run AI-generated test cases after code deployments. Include visual regression tests to quickly spot UI changes. Establish clear pass/fail criteria so your team knows what defines a successful test run, and configure notifications to alert developers immediately when tests fail. This creates a feedback loop that speeds up bug fixes and reduces your time-to-market.

Once integrated, AI testing tools can analyse results, categorise failures, and provide actionable insights. This allows developers to focus on coding instead of managing test suites.

Training Teams for AI Testing Adoption

Switching from manual testing to AI-assisted workflows requires targeted training for your team. Start with tool-specific sessions. Many modern platforms feature low-code or no-code interfaces, making it easier for non-programmers to create automated tests using natural language or visual recording. Training exercises might include creating test cases for common scenarios like online checkouts using simple prompts.

Next, ensure your team understands key AI testing concepts. For instance, they should learn how smart locators analyse web page structures and assign confidence scores based on element properties like ID, text, class, and position. They should also grasp how visual AI algorithms detect UI changes and how test flakiness detection identifies unreliable tests. Without this foundational knowledge, troubleshooting and refining test suites can become challenging.
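A small sketch can help teams build intuition for how a confidence score might be derived. It assumes a simple weighted match over the four properties named above (ID, text, class, position); the weights, the 0.5 threshold, and the `Element` type are invented for illustration and do not describe any specific vendor's algorithm.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Element:
    id: str
    text: str
    css_class: str
    position: int  # index among sibling elements


# Assumed weights in percentage points; they sum to 100.
WEIGHTS = {"id": 40, "text": 30, "css_class": 20, "position": 10}


def confidence(recorded: Element, candidate: Element) -> float:
    """Score from 0.0 to 1.0: weighted share of attributes that still match."""
    points = sum(
        weight
        for attr, weight in WEIGHTS.items()
        if getattr(recorded, attr) == getattr(candidate, attr)
    )
    return points / 100


def best_match(
    recorded: Element, candidates: Sequence[Element], threshold: float = 0.5
) -> Optional[Element]:
    """Return the highest-scoring candidate, or None if none is convincing."""
    if not candidates:
        return None
    best = max(candidates, key=lambda c: confidence(recorded, c))
    return best if confidence(recorded, best) >= threshold else None


# The button's ID was renamed in a redesign, but the other attributes survive.
recorded = Element("buy-btn", "Buy now", "btn primary", 2)
page = [
    Element("help-link", "Help", "link", 5),
    Element("purchase-btn", "Buy now", "btn primary", 2),
]
match = best_match(recorded, page)
print(match, confidence(recorded, page[1]))
```

In this example the renamed button still scores 0.6, so the test would continue with it, while the unrelated help link scores 0.0.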

It’s also important to adapt workflows. A "Collect → Understand → Act" framework works well: AI generates test plans, humans execute tests in real-world contexts, and AI analyses feedback to identify patterns. This hybrid approach combines AI’s speed in planning with human judgement for exploratory testing and user experience evaluation.

Create role-specific training paths. QA engineers might need deeper technical knowledge about API testing and maintaining test suites, while business analysts could focus on natural language prompts for generating test cases. Hands-on workshops, starting with simple scenarios and gradually tackling complex workflows, can help reinforce these skills. Designating internal "AI testing advocates" can also provide ongoing support. During the initial months, dedicating 20–30% of your team’s capacity to training and experimentation can ease the transition.

Companies like Digital Fractal Technologies offer expert support to help businesses integrate AI into their workflows. Their consulting services not only customise AI solutions but also enable knowledge sharing by working closely with your team.

Although low-code and no-code platforms may feel challenging at first, the effort pays off. Over time, tests become more reliable, maintenance demands decrease, and your team gains confidence in using AI-powered testing tools effectively.

Best Practices for AI Testing in Web Applications

Building on the core capabilities of AI testing discussed earlier, these best practices focus on ensuring precision, managing risks, and supporting growth. Once AI testing becomes part of your workflow, following these strategies can help you get the most out of it while steering clear of common challenges.

Optimizing Test Coverage and Accuracy

To achieve comprehensive test coverage, combine automation with careful planning. AI tools can quickly generate detailed test cases using natural language prompts. For instance, you might instruct your AI testing tool to "Generate test cases for a checkout flow that includes guest users, logged-in users, discount codes, and multiple payment methods." In just minutes, the AI can create structured test cases covering common scenarios, edge cases, and integration points.

Accuracy in AI testing often depends on how web elements are identified. Using smart locators that rely on multiple DOM attributes – such as ID, text, class, position, and element relationships – can strengthen your tests. This multi-attribute strategy ensures that locators remain effective even when UI changes occur, like an element’s position or text being altered.

Incorporate visual regression testing with computer vision to compare screenshots against baseline images. This method can filter out minor, non-critical differences. For even greater accuracy, use multi-baseline A/B variant testing. This approach allows you to store several reference images for a single test step, each representing a valid variation. When the AI captures a UI checkpoint, it compares the screenshot against these baselines and passes the test if it matches any of them.
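The "pass if it matches any baseline" rule can be sketched as follows. Note the swap: real visual AI engines use perceptual models, whereas this example uses a plain per-pixel difference with a tolerance as a stand-in. Images are modelled as nested lists of grayscale values, and the tolerance and `max_diff` values are assumptions.

```python
from typing import Sequence

Image = Sequence[Sequence[int]]  # rows of grayscale pixels, 0-255


def diff_ratio(a: Image, b: Image, tolerance: int = 10) -> float:
    """Share of pixels whose brightness differs by more than `tolerance`.

    A naive stand-in for a perceptual comparison. Images with
    different dimensions are treated as fully different.
    """
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        return 1.0
    total = sum(len(row) for row in a)
    if total == 0:
        return 0.0
    changed = sum(
        1
        for ra, rb in zip(a, b)
        for pa, pb in zip(ra, rb)
        if abs(pa - pb) > tolerance
    )
    return changed / total


def matches_any_baseline(
    screenshot: Image, baselines: Sequence[Image], max_diff: float = 0.01
) -> bool:
    """Pass the checkpoint if the screenshot is close to at least one baseline."""
    return any(diff_ratio(screenshot, b) <= max_diff for b in baselines)


# Two valid A/B variants of the same (tiny, 2x2) UI region.
variant_a = [[0, 0], [255, 255]]
variant_b = [[255, 255], [0, 0]]

ok_shot = [[3, 0], [250, 255]]     # variant A with minor rendering noise
broken_shot = [[0, 255], [0, 255]]  # matches neither variant

print(matches_any_baseline(ok_shot, [variant_a, variant_b]))
print(matches_any_baseline(broken_shot, [variant_a, variant_b]))
```

The tolerance absorbs small rendering noise such as anti-aliasing, while the list of baselines absorbs intentional variation such as A/B variants.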

Running tests in parallel across multiple browsers and devices is another way to ensure thorough coverage without extending testing timelines. When paired with AI-powered element detection, this strategy minimizes test failures and maintains consistency.
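As a rough sketch of parallel execution, the snippet below fans a test suite out across a browser and viewport matrix using a thread pool from Python's standard library. The `run_suite` function is a placeholder: in practice it would call your testing tool or browser automation framework, and the browser and viewport names are illustrative.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import product
from typing import Dict, List

BROWSERS = ["chromium", "firefox", "webkit"]
VIEWPORTS = ["desktop", "mobile"]


def run_suite(browser: str, viewport: str) -> Dict[str, object]:
    """Placeholder for a real test run against one browser/viewport pair."""
    # A real implementation would launch the browser and execute tests here.
    return {"browser": browser, "viewport": viewport, "passed": True}


def run_matrix(max_workers: int = 4) -> List[Dict[str, object]]:
    """Run every browser/viewport combination concurrently.

    pool.map returns results in the same order as the input combinations.
    """
    combos = list(product(BROWSERS, VIEWPORTS))
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(lambda combo: run_suite(*combo), combos))


results = run_matrix()
failed = [r for r in results if not r["passed"]]
print(f"{len(results)} runs, {len(failed)} failed")
```

Threads suit this pattern because each run mostly waits on a browser or remote grid; the wall-clock time approaches that of the slowest single run rather than the sum of all runs.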

Digital Fractal Technologies offers consulting services that can fine-tune your testing strategy. By analysing your workflows and providing tailored AI solutions, they help you achieve broad coverage without unnecessary complexity.

While thorough coverage is important, addressing the risks associated with AI testing is equally essential.

Managing Risks of AI in Testing

AI testing brings many advantages, but it also introduces risks that require careful oversight. A major pitfall is over-reliance on automation. The best approach combines AI capabilities with human expertise. Use AI to generate test cases and identify patterns, but rely on human testers to execute these tests in real-world scenarios. This hybrid model combines AI’s efficiency with the adaptability and contextual understanding human testers bring.

Testing for bias and fairness in AI models is crucial for ethical outcomes. Regularly monitor AI behaviour for issues like model drift or unintentional bias, and check that results are fair across diverse user groups. Robustness testing adds a further check by validating how the AI handles edge cases and adversarial inputs that might reveal biased decision-making.

Transparency is key to building trust in your AI testing system. Use explainability techniques to make AI decisions clear and understandable. This transparency not only helps teams debug issues but also ensures confidence in the AI’s outputs.

Test flakiness is another challenge. AI tools can analyse test execution history to identify patterns of flakiness and calculate a flakiness score. Once identified, investigate root causes, such as timing issues, environmental dependencies, or poorly designed tests. AI-powered failure analysis can classify failed tests and suggest solutions, helping you address these issues effectively.
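To show what failure classification involves, here is a deliberately simple sketch that sorts failure messages into the categories mentioned earlier (locator, data validation, API, environment). It uses keyword rules as a stand-in for the learned classifiers that AI tools apply; the keywords and sample messages are assumptions.

```python
from collections import Counter
from typing import List, Tuple

# Ordered rules: the first category with a matching keyword wins.
RULES: List[Tuple[str, Tuple[str, ...]]] = [
    ("locator", ("element not found", "no such element", "selector")),
    ("data_validation", ("expected", "assertion", "mismatch")),
    ("api", ("http 5", "status code", "connection refused")),
    ("environment", ("timeout", "out of memory", "dns")),
]


def categorize(message: str) -> str:
    """Return the first matching category, or 'unknown'."""
    lower = message.lower()
    for category, keywords in RULES:
        if any(keyword in lower for keyword in keywords):
            return category
    return "unknown"


# Hypothetical failure messages from one test run.
failures = [
    "Element not found: #submit",
    "AssertionError: expected 3 items, got 2",
    "Request failed with status code 503",
    "Timeout waiting for page load",
    "Element not found: .cart-total",
]

summary = Counter(categorize(message) for message in failures)
print(summary.most_common())
```

Even a crude summary like this helps prioritise: a spike in locator failures points at a UI change, while environment failures point at infrastructure rather than the application.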

Maintaining diverse test data is vital to avoid biased learning patterns. Ensure your data represents various demographics, geographies, and use cases. As AI models evolve, continuously update your tests to maintain accuracy and reliability without reinforcing existing biases.

Before scaling AI testing, consider an AI Readiness Audit. Digital Fractal Technologies offers audits that assess your processes, data, tools, and risks. These audits provide a clear roadmap for AI implementation, helping you mitigate risks and plan for long-term success.

Once risks are managed, the next step is to build a testing framework that adapts as your application grows.

Ensuring Maintainability and Scalability

As your web application evolves, your testing framework must grow with it while minimizing manual intervention. Self-healing tests and AI-powered locators can reduce test breakages caused by UI changes. Smart locators that track elements using multiple attributes are particularly effective. Additionally, image-based locators can identify UI elements by their visual appearance, adding another layer of reliability.
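One way to picture self-healing is as an ordered fallback: try the primary selector, and if it no longer resolves, try alternatives and record that the test was "healed" so a human can review the change. The sketch below assumes that simplified model; the `lookup` callable, the selectors, and the dictionary standing in for a page are all hypothetical.

```python
from typing import Callable, Optional, Sequence, Tuple


def find_with_healing(
    lookup: Callable[[str], Optional[object]],
    selectors: Sequence[str],
) -> Tuple[object, str, bool]:
    """Try selectors in priority order.

    Returns (element, selector_used, healed), where healed is True
    when the primary selector failed and a fallback succeeded.
    """
    for index, selector in enumerate(selectors):
        element = lookup(selector)
        if element is not None:
            return element, selector, index > 0
    raise LookupError(f"No selector matched: {list(selectors)}")


# A dictionary stands in for the page: the old "#pay-button" ID is gone.
page = {
    "[data-test=pay]": "<button data-test='pay'>",
    "text=Pay now": "<button data-test='pay'>",
}

element, used, healed = find_with_healing(
    page.get, ["#pay-button", "[data-test=pay]", "text=Pay now"]
)
print(used, healed)
```

Logging every healed lookup matters: silent healing can hide a genuine regression, so the fallback should update the test only after someone confirms the new selector points at the intended element.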

Adaptive testing methods that respond to dynamic changes in your application can further reduce test failures. To support these methods, ensure your AI testing platform is compatible with your technology stack. Look for tools that provide SDKs for common programming languages like Java, JavaScript, Python, C#, Ruby, and PHP, and that integrate seamlessly with frameworks such as Selenium, Cypress, Appium, and Playwright.

It’s also important to establish robust monitoring and reporting systems to track AI testing performance. Clear metrics and insights enable data-driven improvements. Secure your testing framework with proper authentication measures, such as API keys, to protect sensitive integrations.

Document your testing strategy thoroughly, outlining when to use AI-generated tests versus manual ones. Create feedback loops for testers to report on AI tool performance and suggest improvements. This documentation becomes invaluable as your team expands.

Treat flaky tests as technical debt that must be resolved to maintain reliability and team confidence. Regularly monitor flakiness metrics and use AI insights to prioritize fixes. By addressing flakiness proactively, you can prevent it from accumulating and ensure your test suite remains dependable over time.

For businesses tackling the challenges of scaling AI testing, Digital Fractal Technologies provides tailored solutions. Their expertise in workflow automation and AI-driven tools helps organizations build flexible testing frameworks that grow alongside their applications, ensuring long-term success without compromising quality.

Measuring Success and ROI of AI Testing

Evaluating the return on investment (ROI) for AI testing is essential to ensure your efforts are paying off. By tracking the right metrics, you can identify what’s working, pinpoint areas for improvement, and justify ongoing investment in AI testing tools.

Key Metrics for Evaluating AI Testing

Start by establishing a baseline over 4–8 weeks. Track the time spent weekly on test creation, execution, and maintenance. Record how long it takes to run your full regression test suite (manual testing often takes 2–5 days), and calculate your defect escape rate by reviewing bugs that reached production over the past 3–6 months.

Once you have this baseline, focus on these metrics:

- Defect detection rate: Compares the percentage of bugs identified by AI versus manual testing. Monitoring this monthly reveals how well the tool catches issues earlier in development.

- Test execution time: Tracks how quickly tests run across browsers and devices. Many organisations see regression testing times drop by 50–70% with AI, and in the best cases a suite that once took 2–3 days might finish in just 4–6 hours.

- Test maintenance overhead: Measures the time spent updating and fixing tests. AI tools with self-healing capabilities can reduce this to under 20% of QA team time in mature setups.

- False positive rate: Indicates how often test failures are not actual bugs. This should decline as AI tools refine their detection algorithms.

- Test flakiness metrics: Tracks inconsistent test failures. Industry leaders aim for flakiness rates below 2%, with acceptable rates staying under 5%. Improvements here directly reflect ROI gains.

- Mean time to resolution (MTTR): Monitors how quickly developers address issues flagged by AI testing. AI tools often significantly reduce resolution times compared to manual processes.
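Several of the metrics above are simple ratios, so they are easy to compute from counts you already track. The sketch below assumes common definitions (for example, defect escape rate as production bugs divided by all bugs found) and uses the 2% and 5% flakiness thresholds quoted above; the function names and sample counts are illustrative.

```python
def rate(part: int, whole: int) -> float:
    """Safe ratio: returns 0.0 when there is nothing to measure."""
    return part / whole if whole else 0.0


def defect_escape_rate(found_in_production: int, found_in_testing: int) -> float:
    """Share of all known defects that reached production (assumed definition)."""
    return rate(found_in_production, found_in_production + found_in_testing)


def false_positive_rate(false_alarms: int, total_failures: int) -> float:
    """Share of test failures that were not real bugs."""
    return rate(false_alarms, total_failures)


def flakiness_band(flaky_tests: int, total_tests: int) -> str:
    """Classify against the thresholds in the list above: below 2%, below 5%."""
    flaky_rate = rate(flaky_tests, total_tests)
    if flaky_rate < 0.02:
        return "leading"
    if flaky_rate < 0.05:
        return "acceptable"
    return "needs attention"


# Hypothetical quarterly counts.
print(defect_escape_rate(found_in_production=6, found_in_testing=114))
print(false_positive_rate(false_alarms=5, total_failures=40))
print(flakiness_band(flaky_tests=8, total_tests=200))
```

Computing these from the same raw counts every period keeps the baseline and the post-adoption figures comparable.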

For Canadian stakeholders, report these metrics quarterly, express cost figures in CAD, and use the YYYY-MM-DD date format. This standardised approach simplifies tracking over time and ensures clear communication with stakeholders.

These metrics provide a framework for measuring both immediate and long-term benefits.

Calculating Long-Term Value and ROI

Determining ROI for AI testing involves a detailed cost-benefit analysis:

- Initial investment costs: Include software licensing fees (ranging from CAD $5,000 to over CAD $50,000 annually, depending on the tool and team size), setup and implementation expenses, and training costs.

- Ongoing operational costs: Cover subscription fees, infrastructure costs for cloud-based platforms, and maintenance expenses.

Savings come from:

- Labour cost reduction: Calculate the hours saved by replacing manual testing. For instance, if your team spent 40 hours weekly on manual testing at CAD $35/hour, that’s CAD $1,400 weekly or CAD $72,800 annually. (Note: Canadian QA engineers earn between CAD $65,000 and CAD $85,000 per year.)

- Defect prevention savings: Bugs caught during testing cost far less to fix than those found in production – up to 100 times less in some cases.

- Faster time-to-market: Shortening release cycles (e.g., from 4 weeks to 2 weeks) accelerates revenue generation by enabling quicker feature rollouts.

- Reduced regression testing time: Saves 30–50% of QA effort, freeing up resources for other tasks.

Use the formula:

(Annual Savings – Annual Costs) ÷ Annual Costs × 100 = ROI percentage.

For example, if annual savings are CAD $150,000 against costs of CAD $40,000, the ROI is (150,000 – 40,000) ÷ 40,000 × 100 = 275%. Most organisations achieve positive ROI within 6–12 months of implementation. If reaching positive ROI takes longer than 24 months, reassess your approach by refining tool selection, training, or test coverage.
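The ROI formula and the labour-cost arithmetic from this section can be checked with a few lines of code. This is a minimal sketch using the article's own figures; the function names are illustrative, and the 52-week year is the assumption behind the CAD $72,800 figure.

```python
def annual_labour_cost(
    hours_per_week: float, hourly_rate: float, weeks_per_year: int = 52
) -> float:
    """Yearly cost of recurring manual testing effort, in CAD."""
    return hours_per_week * hourly_rate * weeks_per_year


def roi_percent(annual_savings: float, annual_costs: float) -> float:
    """(Annual Savings - Annual Costs) / Annual Costs x 100."""
    if annual_costs <= 0:
        raise ValueError("annual_costs must be positive")
    return (annual_savings - annual_costs) / annual_costs * 100


# Figures from the text: 40 hours per week at CAD $35/hour.
print(annual_labour_cost(40, 35))

# Figures from the text: CAD $150,000 savings against CAD $40,000 costs.
print(roi_percent(150_000, 40_000))
```

Running the same function with pessimistic and optimistic savings estimates gives a range rather than a single number, which is usually a more honest basis for a business case.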

Digital Fractal Technologies helps organisations maximise ROI by streamlining workflows, cutting costs, and aligning testing strategies with business goals.

Continuous Improvement and Monitoring

To ensure sustained value from AI testing, establish a dashboard to track both technical and business metrics:

- Test execution velocity and coverage: Monitor daily test execution rates, weekly average execution times, and the growth of your automated test suite. Many organisations report a 40–60% improvement in velocity after adopting AI tools.

- Defect escape rate: Tracks the number of bugs that make it to production, which should decline as your test suite matures.

- Test maintenance cost per test: Expect this to decrease as self-healing capabilities reduce the need for manual updates.

- AI model accuracy for visual regression testing: Regularly evaluate this metric. As computer vision tools learn from your application, accuracy should improve. Re-training models or updating baselines may be necessary if performance plateaus.

- Team productivity metrics: Measure how much time QA engineers spend on strategic tasks like test design versus routine maintenance. Aim to free up 30–40% of QA capacity.

- Cost per defect detected: This figure should drop as your AI testing infrastructure matures and identifies issues earlier in the cycle.

Review these metrics monthly and adjust your strategy as needed. Create feedback loops where testers can report on tool performance and suggest improvements. Joining groups like the Canadian Software Testing Board or attending regional QA conferences can also provide valuable benchmarking data.

By consistently monitoring these metrics, your AI testing framework can adapt to evolving development needs.

For organisations looking to fully optimise their AI testing efforts, Digital Fractal Technologies offers ongoing support and monitoring services. As noted by clients:

James M., CEO, shared: "The team’s support after the development stage is unmatched, they are quick to react at such a critical time."

Jackson P., CEO, added: "They continue to make sure we are a satisfied customer of theirs. We no longer need to look elsewhere because we know we are getting the best price and service from them."

This kind of partnership ensures your AI testing implementation evolves effectively, delivering consistent value over time.

FAQs

How does AI testing help reduce test maintenance compared to traditional methods?

AI testing simplifies test maintenance by using machine learning algorithms to adapt to changes in web applications. Traditional testing methods often demand manual updates to test scripts whenever there are updates to the user interface or functionality. In contrast, AI-powered tools can automatically detect these changes and adjust accordingly, reducing the need for manual intervention.

With features like predictive analytics and self-healing capabilities, AI testing keeps test cases up-to-date and effective over time. This approach not only cuts down on time and effort but also boosts the accuracy and dependability of testing. As a result, teams can dedicate more energy to improving the overall quality of their web applications.

How can I prepare my infrastructure for integrating AI testing tools into my web applications?

To get your infrastructure ready for AI testing tools, start by assessing your current systems. Make sure they can handle the heavy computational needs that come with AI-driven tasks. You might need to upgrade your servers, storage, or network to manage large data volumes and the necessary processing power.

The next step is to put a strong data management plan in place. Since AI testing tools depend on high-quality data, your datasets should be well-organized, secure, and meet Canadian data privacy standards.

Lastly, set up workflows that support automation and ensure your team has the skills to use AI tools effectively. This will simplify the implementation process and help you get the most out of your AI testing efforts.

How can I evaluate the ROI of using AI testing in web app development?

To evaluate the ROI of using AI testing in your web development process, start by pinpointing key metrics. These might include shorter testing cycles, higher defect detection rates, and better overall application performance. Once you have these benchmarks, compare the benefits to the costs of adopting AI tools – things like software licences, training, and setup expenses.

For instance, calculate how much time AI testing saves and convert that into monetary terms, whether by using hourly rates or project deadlines. Don’t forget to account for long-term advantages, such as fewer issues after launch and happier users, which can boost revenue or cut operational costs. Weighing these elements will give you a clearer picture of the real value AI testing adds to your development process.