How AI Detects Behavioural Threats

AI is transforming cybersecurity by identifying threats that traditional tools often miss. Instead of relying on known malware signatures, AI monitors user, device, and network behaviour to detect anomalies. This approach is critical because 79% of detections in 2024 were malware-free: attackers relied on stolen credentials or legitimate tools rather than malicious code. For example, Business Email Compromise (BEC) caused $2.77 billion in losses, often bypassing traditional defences.

Key takeaways:

- Baseline Behaviour: AI learns "normal" activity patterns over 60–90 days, adjusting to changes in roles or workflows.

- Real-Time Detection: AI processes data in milliseconds, identifying risks like unusual logins or data spikes during active sessions.

- Risk Scoring: Machine learning assigns risk levels to anomalies, combining supervised models (trained on known threats) with unsupervised models (for novel and zero-day threats).

- Automated Response: Immediate actions, like disabling accounts or isolating devices, prevent attackers from spreading within networks.

- Human-AI Collaboration: Analysts validate AI findings, adding context to refine detection and reduce false positives.

With attackers moving faster than ever – lateral movement can begin within 48 minutes of initial access on average – AI’s ability to detect and respond in real time is essential for protecting organizations. By combining AI’s speed with human expertise, companies can minimize breaches and avoid millions in potential losses.

4-Step AI Behavioral Threat Detection Process: From Baseline to Response

Step 1: Creating Baseline Behaviour Profiles

To start, AI systems need to establish a clear picture of what "normal" looks like. This involves creating baseline behaviour profiles – dynamic models that reflect typical user, device, and application activity. Unlike static "if-then" rules that can quickly become outdated, these profiles evolve as AI continuously monitors activity across networks, cloud platforms, and SaaS applications. This ensures the system stays aligned with changing roles and responsibilities over time. Below, we’ll explore how data is collected and why historical context is key to building accurate baselines.

Data Collection and Analysis

AI systems rely on a wide range of data to craft these baselines, pulling information from authentication logs, file activity, communication patterns, and network traffic. The more comprehensive the dataset, the better the system becomes at detecting irregularities. For example, AI might observe that a finance manager typically logs in during business hours in Toronto and accesses specific databases. Over time, this behaviour forms a "known good" baseline.
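As a rough illustration of how such a baseline might be built, the Python sketch below counts each user's login hours and accessed resources, then reports what is new or rare about a later event. The `AuthEvent` fields and the 5% rarity cutoff are hypothetical choices made for this example, not the schema or tuning of any particular product.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class AuthEvent:
    # Hypothetical, simplified event schema for illustration only.
    user: str
    hour: int        # local hour of the login, 0-23
    resource: str    # database or application accessed

def build_baselines(events):
    """Count how often each user logs in at each hour and touches each resource."""
    hours = defaultdict(Counter)
    resources = defaultdict(Counter)
    for e in events:
        hours[e.user][e.hour] += 1
        resources[e.user][e.resource] += 1
    return hours, resources

def deviations(event, hours, resources, rarity=0.05):
    """Return the reasons an event falls outside the user's learned baseline."""
    total = sum(hours[event.user].values())
    if total == 0:
        return ["no baseline for user"]
    reasons = []
    # A login hour seen in fewer than `rarity` of past logins counts as unusual.
    if hours[event.user][event.hour] / total < rarity:
        reasons.append(f"unusual login hour {event.hour}")
    # Any resource never touched during the baseline period is new.
    if resources[event.user][event.resource] == 0:
        reasons.append(f"first access to {event.resource}")
    return reasons
```

Fed a few weeks of the finance manager's business-hours logins to one database, this flags a 3:00 a.m. session against an HR database on both counts, while a routine mid-morning login produces no findings.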

"Instead of telling a system what’s suspicious, you let it learn what normal looks like. Then anything that deviates stands out automatically."

- Pistachio Cybersecurity Protection

AI also compares individual behaviours to those of peers in similar roles. This additional layer of analysis helps distinguish between routine actions and genuine anomalies.

Why Historical Data Matters

Building reliable baselines takes time. Most systems require a training period of 60 to 90 days to account for business cycles, seasonal patterns, and changing responsibilities. A shorter observation period, like 30 days, may miss critical variations such as monthly reporting spikes or quarterly audits, increasing the likelihood of false positives. For instance, the system learns to recognize patterns like an accounting team downloading more records at month-end or DevOps engineers accessing production systems during scheduled deployments. These are normal activities, not threats.

As organisations evolve – through role changes, new tools, or updated workflows – the baselines adjust automatically. This ensures the AI can consistently separate routine operational changes from real risks, keeping detection accurate and relevant.
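One common way to let a baseline adjust automatically is an exponentially weighted moving average (EWMA). The sketch below tracks an EWMA mean and variance for a single metric, such as records downloaded per day, and scores new values as z-scores. It is an illustration under assumptions: `alpha` (how quickly "normal" drifts) is an arbitrary example value, and real systems typically track many metrics per user.

```python
import math
from dataclasses import dataclass
from typing import Optional

@dataclass
class EwmaBaseline:
    """Adaptive baseline for one metric using an exponentially weighted mean and variance."""
    alpha: float = 0.05            # illustrative adaptation rate, not a recommendation
    mean: Optional[float] = None
    var: float = 0.0

    def score(self, x: float) -> float:
        """Z-score of x against the current baseline (0.0 until there is spread to compare against)."""
        if self.mean is None or self.var == 0.0:
            return 0.0
        return (x - self.mean) / math.sqrt(self.var)

    def update(self, x: float) -> None:
        """Fold a new observation into the baseline (standard incremental EWMA update)."""
        if self.mean is None:
            self.mean = x
            return
        diff = x - self.mean
        incr = self.alpha * diff
        self.mean += incr
        self.var = (1 - self.alpha) * (self.var + diff * incr)
```

A smaller `alpha` makes the baseline slower to accept change, which resists an attacker gradually "training" the model but takes longer to absorb a genuine role change. Production code would also put a floor under the variance, so that a long run of near-identical values cannot make every small deviation look extreme.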

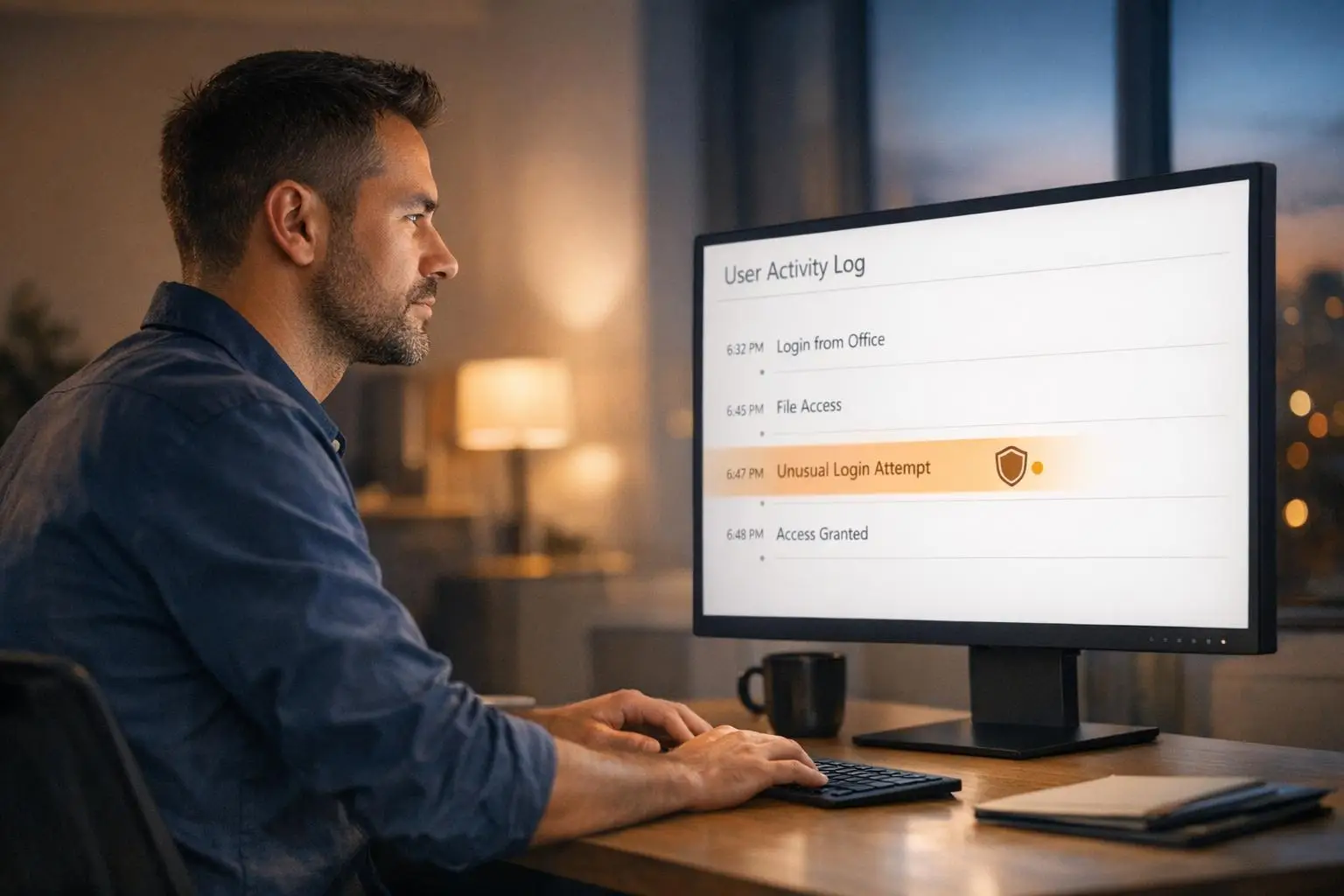

Step 2: Real-Time Monitoring for Anomalies

After baselines are set, AI systems move into continuous monitoring mode, keeping a close eye on activity in real time. This isn’t like batch processing, where data is reviewed after the fact. Instead, real-time detection identifies threats as they happen, during active sessions. The downside of delayed detection? It can leave room for serious issues like data theft, fraudulent transactions, or unauthorised lateral access. Modern AI platforms are designed to process incoming signals in milliseconds, comparing actions against established norms and flagging anything unusual before it grows into a larger problem.

To make this possible, the system relies on low-latency processing that adds only about 50 milliseconds per request. Using API-native integrations, these platforms continuously pull in data such as authentication events, mailbox activity, and device telemetry. Rather than treating log entries as isolated events, AI connects related signals, like a new device registration followed immediately by a mailbox rule change, into a single risk assessment. This correlation approach has been reported to catch 34% more threats than traditional bot-scoring systems.
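The signal-correlation idea can be sketched as a time-window join per account. In this Python example, the signal names, the pairs treated as risky, and the 30-minute window are all hypothetical; a real platform would learn or tune these rather than hard-code them.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass(frozen=True)
class Signal:
    account: str
    kind: str        # hypothetical labels, e.g. "new_device", "mailbox_rule_change"
    at: datetime

# Hypothetical signal pairs that are far more suspicious together than apart.
RISKY_SEQUENCES = {("new_device", "mailbox_rule_change"), ("mfa_reset", "oauth_grant")}

def correlate(signals, window=timedelta(minutes=30)):
    """Group signals per account and report risky pairs that occur close together in time."""
    by_account = {}
    for s in sorted(signals, key=lambda s: s.at):
        by_account.setdefault(s.account, []).append(s)
    findings = []
    for account, seq in by_account.items():
        for i, first in enumerate(seq):
            for second in seq[i + 1:]:
                if second.at - first.at > window:
                    break  # later signals are even further away in time
                if (first.kind, second.kind) in RISKY_SEQUENCES:
                    findings.append((account, first.kind, second.kind))
    return findings
```

Each signal on its own might score low; the pair inside the window is what turns into one higher-risk finding.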

Signals and Metrics Monitored

AI focuses on specific signals to detect deviations with precision. These signals fall into four main categories: identity, communication, network, and systems.

- On the identity front, AI examines login frequency, device fingerprints, IP reputation, and session telemetry to spot account takeovers.

- For communication, it looks at sender-receiver relationships, message urgency, language patterns suggesting financial requests, and changes to mailbox rules.

- Network monitoring includes analysing HTTP/2 fingerprints, TLS Client Hello extensions, JA4 fingerprints, and traffic volume.

- At the system level, metrics like data access patterns, working hours, geographic anomalies (like impossible travel scenarios), OAuth permission grants, and API call velocity are tracked.
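Impossible travel is one of the easier system-level checks to express in code: compute the great-circle distance between two login locations and compare the implied speed with what a traveller could plausibly achieve. The sketch assumes logins have already been geolocated (GeoIP data is approximate, hence the minimum-distance tolerance), and the 900 km/h cutoff, roughly airliner cruising speed, is an illustrative choice.

```python
import math
from datetime import datetime

EARTH_RADIUS_KM = 6371.0

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def impossible_travel(login_a, login_b, max_kmh=900.0, min_km=100.0):
    """True if two (lat, lon, datetime) logins imply faster-than-plausible travel."""
    (lat1, lon1, t1), (lat2, lon2, t2) = login_a, login_b
    distance = haversine_km(lat1, lon1, lat2, lon2)
    if distance < min_km:
        return False  # GeoIP is imprecise; ignore short hops
    hours = abs((t2 - t1).total_seconds()) / 3600
    return hours == 0 or distance / hours > max_kmh

toronto = (43.65, -79.38, datetime(2025, 5, 1, 9, 0))
london = (51.51, -0.13, datetime(2025, 5, 1, 10, 0))
print(impossible_travel(toronto, london))  # True: about 5,700 km in one hour
```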

The stakes are high: in 2025, businesses globally lost an average of 7.7% of their annual revenue to fraud, amounting to an estimated $534 billion. This underscores the importance of thorough signal monitoring.

Real-Time Processing Architecture

Using the established baselines, AI systems employ parallel processing to evaluate incoming data continuously. Modern platforms create tailored behavioural models for each customer, factoring in specific traffic patterns, seasonal trends, and legitimate business fluctuations. This customised approach significantly reduces false positives that arise with one-size-fits-all global thresholds.

"Effective defence depends on detecting identity misuse after authentication succeeds, not simply blocking known-bad indicators."

- David van Schravendijk, Abnormal AI

Instead of relying on global averages, systems compare activity to context-specific baselines. For instance, a database administrator working on production systems at 2:00 a.m. might make sense during a scheduled maintenance period but could raise alarms on a random weekday. AI evaluates these nuances in real time, combining multiple data points into a single risk score. If the score crosses a certain threshold, automated responses are triggered immediately.

Step 3: Detecting Anomalies and Scoring Risk

After setting baselines and implementing real-time monitoring, the next step is risk scoring. This process takes raw data signals and transforms them into actionable insights. Machine learning models evaluate these patterns, assigning risk scores to distinguish between genuine threats and harmless anomalies.

AI systems rely on both supervised and unsupervised learning to handle this task. Supervised models are trained using labelled data, meaning they learn from past examples of acceptable and suspicious behaviour. These models are particularly effective at identifying known threats, such as malware or phishing attacks. On the other hand, unsupervised models work without labelled datasets. They identify new patterns on their own, making them especially useful for detecting previously unknown threats, such as attacks exploiting "zero-day" vulnerabilities.

Risk scoring combines multiple signals into a single numerical value, often ranging from 0 to 100. Signals with a higher impact, such as unauthorised transactions, weigh more heavily on the score than less critical ones, like off-hours logins. For example, a login attempt at 3:00 a.m. might initially seem suspicious. However, if the user is a night-shift worker, the system’s contextual awareness adjusts the score accordingly. This approach helps distinguish between isolated anomalies and contextually relevant deviations. Below, we break down how supervised and unsupervised models work together to reduce noise and identify meaningful risks.
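A weighted, context-aware score like the one described in this step can be sketched in a few lines of Python. The signal names, weights, additive combination, 0–100 cap, and response threshold of 70 are all hypothetical values chosen for illustration; production systems usually learn weights from labelled data rather than hand-setting them.

```python
# Hypothetical weights: higher-impact signals contribute more to the score.
WEIGHTS = {
    "unauthorised_transaction": 60,
    "unusual_location": 25,
    "new_device": 20,
    "off_hours_login": 15,
}

def risk_score(signals, context):
    """Combine observed signals into a 0-100 score, discounting expected behaviour."""
    score = 0
    for signal in signals:
        weight = WEIGHTS.get(signal, 0)
        # Off-hours activity is expected for night-shift staff or during a
        # scheduled maintenance window, so in that context it adds nothing.
        if signal == "off_hours_login" and (
            context.get("night_shift") or context.get("maintenance_window")
        ):
            weight = 0
        score += weight
    return min(score, 100)

def triage(score, threshold=70):
    """Trigger an automated response only when the score crosses the threshold."""
    return "respond" if score >= threshold else "monitor"
```

The same 3:00 a.m. login scores 15 for a day-shift employee and 0 for a night-shift worker, while a combination of high-impact signals saturates the scale and triggers a response.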

Supervised and Unsupervised Learning Models

Supervised learning uses algorithms like Support Vector Machines (SVM), Random Forest, and Gradient Boosting. For instance, from April 2020 to September 2024, a team at Housing, Infrastructure and Communities Canada (HICC), led by Stany Nzobonimpa and Mohamed Abou Hamed, applied a Gradient Boosting algorithm to monitor data from 44 bridge sensors. This system flagged 714,185 anomalous readings (about 4.6% of the total data) and achieved an F1 score of 0.956. It was particularly effective at detecting "flatline" anomalies, where sensors reported identical readings repeatedly, a type of issue that traditional threshold-based alarms might overlook.
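The HICC model's exact features are not described here, but a flatline is easy to capture as an engineered feature that a supervised model such as gradient boosting could be trained on. In this hypothetical Python sketch, a window of identical readings stays within normal minimum and maximum bounds (so a threshold alarm stays silent) yet produces a long flat run, which is the signal a classifier can learn to associate with a "flatline" label.

```python
def longest_flat_run(readings, tolerance=0.0):
    """Length of the longest run of consecutive readings that do not change (within tolerance)."""
    if not readings:
        return 0
    longest = current = 1
    for prev, cur in zip(readings, readings[1:]):
        # Extend the run while the value stays put; otherwise start a new run.
        current = current + 1 if abs(cur - prev) <= tolerance else 1
        longest = max(longest, current)
    return longest

def window_features(readings):
    """Per-window features a supervised classifier (e.g. gradient boosting) could be trained on."""
    return {
        "min": min(readings),
        "max": max(readings),
        "range": max(readings) - min(readings),
        "longest_flat_run": longest_flat_run(readings),
    }
```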

Unsupervised models, such as Isolation Forest, K-means Clustering, and DBSCAN, operate differently. Isolation Forest, for example, repeatedly partitions the data at random; because anomalies are few and different, they are separated after fewer splits and therefore have shorter path lengths in the resulting trees. K-means groups data into clusters, and points that lie far from their nearest cluster centre can be flagged as unusual. Because these models need no prior labels, they are well suited to spotting insider threats and novel attack patterns.
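To show why anomalies end up with shorter path lengths, here is a compact from-scratch sketch of the Isolation Forest scoring idea in pure Python. It is simplified for readability (no contamination threshold or other tuning); in practice you would use a maintained implementation such as scikit-learn's `IsolationForest`. Scores near 1 indicate points that are isolated in very few random splits.

```python
import math
import random

EULER_GAMMA = 0.5772156649

def _avg_path(n):
    """Expected path length of an unsuccessful BST search over n points (score normaliser)."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    return 2.0 * (math.log(n - 1) + EULER_GAMMA) - 2.0 * (n - 1) / n

def _build_tree(points, depth, max_depth, rng):
    """Split on a random feature at a random value until points are isolated or depth runs out."""
    if depth >= max_depth or len(points) <= 1:
        return ("leaf", len(points))
    spans = [(d, min(p[d] for p in points), max(p[d] for p in points)) for d in range(len(points[0]))]
    spans = [s for s in spans if s[1] < s[2]]  # only features that still vary can split
    if not spans:
        return ("leaf", len(points))
    dim, lo, hi = rng.choice(spans)
    split = rng.uniform(lo, hi)
    left = [p for p in points if p[dim] < split]
    right = [p for p in points if p[dim] >= split]
    return ("split", dim, split,
            _build_tree(left, depth + 1, max_depth, rng),
            _build_tree(right, depth + 1, max_depth, rng))

def _path_length(x, node, depth=0):
    """Depth at which x reaches a leaf, plus the expected extra depth for that leaf's size."""
    if node[0] == "leaf":
        return depth + _avg_path(node[1])
    _, dim, split, left, right = node
    return _path_length(x, left if x[dim] < split else right, depth + 1)

def isolation_scores(points, n_trees=100, sample_size=256, seed=0):
    """Anomaly score in (0, 1) for each point (a list of tuples; needs at least 2 points)."""
    rng = random.Random(seed)
    m = min(sample_size, len(points))
    max_depth = math.ceil(math.log2(m))
    trees = [_build_tree(rng.sample(points, m), 0, max_depth, rng) for _ in range(n_trees)]
    norm = _avg_path(m)
    return [2 ** (-sum(_path_length(x, t) for t in trees) / n_trees / norm) for x in points]
```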

Reducing Alert Noise

Once risk scores are assigned, AI systems refine their detection capabilities by reducing unnecessary alerts, or "noise." This is achieved through techniques like adaptive baselining, which continuously updates the definition of "normal" based on factors such as seasonal trends, new hires, or evolving business activities. For example, a spike in data exports during quarterly financial reporting is a predictable pattern. Over time, the system learns this behaviour and stops flagging it as suspicious.

Another useful method is peer group analysis. Instead of comparing a user’s actions to a static baseline, the system evaluates them against a group of similar profiles, such as colleagues in the same department or users with comparable access levels. This approach helps identify true outliers while ignoring normal variations within specific groups. As Steve Moore, Vice President and Chief Security Strategist at Exabeam, explains:

"Utilize hybrid detection methods: Combine multiple detection methods, such as statistical, machine learning, and rule-based approaches, to enhance accuracy."
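Peer group comparison can be sketched with a robust ("modified") z-score: compare a user's metric with the median of their peers and scale by the median absolute deviation (MAD), which a single extreme peer cannot distort the way it would distort a mean and standard deviation. The metric (records exported per week) and the peer values are hypothetical; the 3.5 cutoff is a commonly used rule of thumb for modified z-scores, not a universal setting.

```python
import math
from statistics import median

def peer_outlier_score(user_value, peer_values):
    """Modified z-score of one user's metric relative to their peer group (median and MAD)."""
    med = median(peer_values)
    mad = median(abs(v - med) for v in peer_values)
    if mad == 0:
        # Peers are identical: any difference at all is an outlier.
        return 0.0 if user_value == med else math.copysign(math.inf, user_value - med)
    # 1.4826 rescales MAD to match the standard deviation of normally distributed data.
    return (user_value - med) / (1.4826 * mad)

# Weekly record exports for colleagues in the same role (hypothetical data).
peers = [120, 135, 110, 128, 140, 125, 118]
print(peer_outlier_score(900, peers) > 3.5)  # True: far outside the peer group
```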

Step 4: Automated Response and Containment

When AI systems detect a high-confidence threat, immediate action is non-negotiable. With the fastest recorded breakout taking just 51 seconds, there’s no time for manual intervention. AI-powered systems step in to contain threats within seconds, drastically reducing opportunities for data theft or lateral movement.

Modern attackers often bypass traditional malware, opting instead for legitimate tools and stolen credentials. As Kevin Mata from Swimlane puts it:

"AI helps identify threats; automation ensures they’re handled swiftly and effectively".

By automating threat responses, organisations save an average of 1,454 hours annually in manual labour and shorten breach lifecycles by about 80 days.

Examples of Automated Actions

AI systems respond to specific threats with tailored containment measures. Here are some examples:

- Compromised credentials (e.g., impossible travel patterns, privilege escalation): Accounts are disabled, or credentials revoked immediately.

- Lateral movement or ransomware indicators (e.g., mass encryption): Infected endpoints are isolated, or cloud instances quarantined.

- Fraudulent login patterns or unauthorised data transfers: Suspicious activities or transactions are blocked.

- Medium-risk anomalies (e.g., unusual login locations, new device registrations): Users are prompted for multi-factor authentication (MFA).

- Command-and-control communications or API abuse: Malicious IPs are blocked, or OAuth tokens revoked.
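The mapping above is essentially a response playbook. The Python sketch below encodes it as a lookup table with a confidence gate, so that disruptive actions only fire automatically on high-confidence detections while a low-friction step such as an MFA prompt can fire earlier. The category names, the 0.9 threshold, and the escalation policy are hypothetical; real deployments execute these actions through SOAR or EDR integrations rather than returning strings.

```python
from enum import Enum

class Action(Enum):
    DISABLE_ACCOUNT = "disable account / revoke credentials"
    ISOLATE_HOST = "isolate endpoint or quarantine instance"
    BLOCK_ACTIVITY = "block activity or transaction"
    STEP_UP_MFA = "prompt for MFA"
    BLOCK_IP_REVOKE_TOKEN = "block IP / revoke OAuth tokens"

# Hypothetical mapping from detection category to containment action.
PLAYBOOK = {
    "impossible_travel": Action.DISABLE_ACCOUNT,
    "privilege_escalation": Action.DISABLE_ACCOUNT,
    "mass_encryption": Action.ISOLATE_HOST,
    "lateral_movement": Action.ISOLATE_HOST,
    "unauthorised_transfer": Action.BLOCK_ACTIVITY,
    "unusual_login_location": Action.STEP_UP_MFA,
    "new_device": Action.STEP_UP_MFA,
    "c2_beacon": Action.BLOCK_IP_REVOKE_TOKEN,
    "api_abuse": Action.BLOCK_IP_REVOKE_TOKEN,
}

def choose_action(category, confidence, auto_threshold=0.9):
    """Pick a containment action, or escalate to an analyst when automation is not justified."""
    action = PLAYBOOK.get(category)
    if action is None:
        return "escalate to analyst"
    # Assumed policy: MFA step-up is low-friction, so it may fire below the threshold.
    if action is Action.STEP_UP_MFA or confidence >= auto_threshold:
        return action.value
    return "escalate to analyst"
```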

Since September 2024, Microsoft has strengthened its defences by adding over 200 detections targeting top attacker tactics across its systems, further enhancing automated responses.

Preventing Lateral Movement

Beyond stopping individual threats, automated responses also play a critical role in preventing lateral movement within networks. AI monitors internal traffic to detect and halt the spread of an attack.

By focusing on "east-west" traffic (internal system-to-system communication that traditional perimeter firewalls often overlook), AI can spot unusual activity. For instance, if a system in Engineering accesses an HR database without a valid business reason, behavioural AI flags the anomaly and can block the connection. It can likewise block unexpected peer-to-peer connections between workstations.
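A behavioural take on east-west monitoring is to learn which segment-to-segment flows occur during the baseline period and treat anything else as anomalous. In this hypothetical Python sketch, the host inventory and the baseline flow history are invented for illustration, and workstation-to-workstation connections are blocked outright as an assumed policy.

```python
# Hypothetical asset inventory: host -> (segment, role).
HOSTS = {
    "eng-ws-14": ("engineering", "workstation"),
    "eng-ws-22": ("engineering", "workstation"),
    "build-01": ("engineering", "server"),
    "backup-01": ("it", "server"),
    "hr-db-01": ("hr", "server"),
}

def learn_segment_pairs(history):
    """Segment-to-segment flows seen during the baseline period count as normal."""
    return {(HOSTS[src][0], HOSTS[dst][0]) for src, dst in history if src in HOSTS and dst in HOSTS}

def check_flow(src, dst, normal_pairs):
    """Verdict for one internal (east-west) connection."""
    if src not in HOSTS or dst not in HOSTS:
        return "flag"  # unknown asset: send to an analyst
    (src_seg, src_role), (dst_seg, dst_role) = HOSTS[src], HOSTS[dst]
    if src_role == dst_role == "workstation":
        return "block"  # assumed policy: no peer-to-peer workstation traffic
    if (src_seg, dst_seg) not in normal_pairs:
        return "block"  # never seen during the baseline, e.g. engineering -> hr
    return "allow"
```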

This is crucial because 84% of severe breaches involve "living-off-the-land" (LOTL) techniques, in which attackers use legitimate tools and credentials to blend in with normal operations. By identifying abnormal authentication patterns or traffic spikes in real time, AI isolates compromised systems before attackers can establish a foothold. As Microsoft highlights:

"Real-time defence: ML models spot threats in seconds, reducing the attack window".

With this rapid containment, even the fastest attacks – capable of exfiltrating data in just 72 minutes – can be stopped before causing major damage.

Continuous Improvement of AI Detection Systems

Automated responses are excellent at quickly containing threats, but they’re only part of the picture. To stay effective, AI detection systems need constant updates. Threat detection isn’t a “set it and forget it” process – attackers are always refining their methods, and detection systems must keep pace. Without regular updates, even the most advanced models can suffer from concept drift, where their ability to identify threats weakens over time. Continuous refinement ensures these systems remain effective in both the short and long term.

Feedback Loops and Retraining Pipelines

AI detection systems thrive on feedback. Security analysts play a crucial role by reviewing alerts, confirming real threats, and flagging false positives. These verdicts flow back into the system and are used to fine-tune the models. The process involves analysing logs and endpoints, identifying new threat patterns, and retraining the models on updated datasets. Before an update is deployed, performance validation confirms that it actually improves accuracy.

The Hybrid Drift Detection and Adaptation Framework (HDDAF) showcases how adaptive pipelines can work. By balancing minor updates with full retraining when data changes significantly, HDDAF achieved a macro F1 score exceeding 99% on the CIC-IDS2017 dataset.
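HDDAF's internals are not reproduced here. As a much simpler, generic illustration of the same trade-off (minor update versus full retraining), the Python sketch below measures distribution drift with the Population Stability Index (PSI) and maps it to an action. The bin edges are arbitrary, and the 0.1/0.25 thresholds are a widely used PSI rule of thumb assumed here, not values taken from HDDAF.

```python
import math

def psi(expected, actual, bins):
    """Population Stability Index between a reference sample and a recent sample."""
    def proportions(values):
        counts = [0] * (len(bins) - 1)
        for v in values:
            for i in range(len(bins) - 1):
                if bins[i] <= v < bins[i + 1]:
                    counts[i] += 1
                    break  # values outside all bins are ignored in this sketch
        total = max(sum(counts), 1)
        # A small floor avoids log(0) for empty bins.
        return [max(c / total, 1e-4) for c in counts]
    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

def adaptation_plan(drift, minor=0.1, major=0.25):
    """Map a drift score to a maintenance action."""
    if drift < minor:
        return "keep model"
    if drift < major:
        return "incremental update"
    return "full retrain"
```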

"Each confirmed attack teaches the models more about how that threat behaves, and that learning improves threat detection across every environment" – Piotr Wojtyla, Abnormal AI

Monitoring Performance Metrics

After updating models, tracking performance metrics is essential to ensure they remain effective. Key metrics include precision, the share of flagged alerts that turn out to be real threats, and recall, the share of real threats that the system actually flags. The F1 score, the harmonic mean of precision and recall, balances the two in a single number. Operational metrics like Mean Time to Detect (MTTD) and Mean Time to Respond (MTTR) are also critical, as they reveal how quickly the system identifies and addresses threats.
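These metrics are straightforward to compute from alert outcomes and incident timestamps. In this sketch, `tp`, `fp`, and `fn` are counts of analyst-confirmed true positives, false positives, and missed threats; MTTD and MTTR are means over (start, end) timestamp pairs, for example (compromise, detection) and (detection, containment). The function names are illustrative.

```python
from datetime import datetime, timedelta

def detection_metrics(tp, fp, fn):
    """Precision, recall, and F1 from confirmed alert outcomes."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

def mean_delta(pairs):
    """Mean time between paired timestamps, e.g. (compromise, detection) for MTTD."""
    deltas = [end - start for start, end in pairs]
    return sum(deltas, timedelta()) / len(deltas)
```

For example, 90 confirmed detections, 10 false alarms, and 30 missed threats give a precision of 0.90, a recall of 0.75, and an F1 score of about 0.82.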

Real-time monitoring adds another layer of security by detecting anomalies like infinite loops or context loss. When such issues arise, immediate interventions, such as resetting sessions, can prevent further problems. Tailored baselines for individual sessions also help by defining “normal” behaviour on a case-by-case basis, reducing false positives in varied environments. The payoff is measurable: organisations using AI and automation in 2025 saved an average of $1.9 million per breach and shortened breach lifecycles by 80 days compared with those without such defences.

The Role of Human Expertise in AI Threat Detection

AI can process massive amounts of data and flag potential threats faster than any human could. But when it comes to making sense of those findings, human expertise is still irreplaceable.

While AI is excellent at spotting anomalies, it doesn’t always understand context. For example, AI might flag a login from an unusual location as suspicious, but a human analyst could recognize it as part of a CFO’s travel schedule or a department’s routine end-of-quarter activity. This ability to interpret and contextualize data ensures that genuine threats are addressed while false alarms are minimized.

The best results come when AI and security teams work together. AI handles the heavy lifting – processing vast amounts of data and identifying patterns – while humans focus on judgement-based decisions. Human oversight also ensures that AI’s reasoning is clear and that critical alerts aren’t dismissed without proper evaluation. As Chandrodaya Prasad, Chief Product Officer at SonicWall, explains:

"Explainability isn’t a nice-to-have. It’s the foundation of human-AI collaboration in security".

Collaboration Between AI and Security Teams

Modern Security Operations Centres (SOCs) now depend on AI to streamline their workflows. Instead of spending 15 minutes gathering data and navigating multiple platforms for a single alert, analysts can now review complete investigation packages generated by AI. These packages integrate data, highlight patterns, and provide analysts with the tools they need to validate findings and assess risks.

AI systems assign confidence scores to alerts, ranging from 0.0 to 1.0, helping analysts prioritize their efforts. For instance, a high-confidence alert (like 0.9) might demand immediate attention, while lower scores may require deeper investigation. Tools like SHAP (Shapley Additive Explanations) further assist by breaking down the factors – such as geolocation, file size, or timing – that contributed to a particular risk score. This transparency helps analysts understand why AI flagged certain behaviours, making it easier to decide whether the threat is real.
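For a linear scoring model with independent features, Shapley values have a simple closed form: feature i contributes w_i × (x_i − E[x_i]), its weight times how far the alert's value sits from the average, and the contributions add up to the difference between this alert's score and the score at the feature averages. The SHAP library handles far more general models; this hand-rolled Python sketch, with hypothetical feature names and weights, only illustrates the linear case.

```python
def linear_score(weights, bias, x):
    """Hypothetical linear risk model: bias + sum of weight * feature."""
    return bias + sum(w * x[name] for name, w in weights.items())

def linear_shap(weights, x, means):
    """Exact Shapley values for a linear model with independent features: w_i * (x_i - E[x_i])."""
    return {name: w * (x[name] - means[name]) for name, w in weights.items()}

def top_reasons(contributions, k=2):
    """Features that pushed this alert's score up the most."""
    return sorted(contributions, key=contributions.get, reverse=True)[:k]

weights = {"km_from_usual_location": 0.002, "mb_uploaded": 0.01, "off_hours": 0.3}
means = {"km_from_usual_location": 20.0, "mb_uploaded": 15.0, "off_hours": 0.1}
alert = {"km_from_usual_location": 4200.0, "mb_uploaded": 15.0, "off_hours": 1.0}
print(top_reasons(linear_shap(weights, alert, means)))  # ['km_from_usual_location', 'off_hours']
```

An analyst reading this output sees that distance from the usual location, not upload volume, drove the alert, which is exactly the question the explanation needs to answer.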

Feedback from analysts also plays a critical role in improving AI’s accuracy. By adding context that AI alone can’t interpret, analysts refine detection logic over time. Ajmal Kohgadai, Director of Product Marketing at Prophet Security, highlights this dynamic:

"The analyst retains ownership of the judgment. The analyst reviews the investigation package prepared by the AI, validates the findings, and makes the risk decision".

This collaboration strengthens threat detection systems, blending automated efficiency with human insight.

Improving Interpretability of AI Models

For AI to truly assist analysts, its decisions need to be clear and actionable. Explainable AI bridges this gap by showing the reasoning behind each alert. Tools like LIME (Local Interpretable Model-Agnostic Explanations) approximate a complex model around a single prediction with a simple, interpretable surrogate model, revealing which features drove that particular alert and allowing analysts to validate findings with confidence.

Human feedback also reduces false positives by fine-tuning detection systems. For instance, analysts can add exceptions for legitimate activities, like mobile app retries or scheduled maintenance, that might otherwise trigger alerts. This "editor mode" approach ensures that AI systems align with the operational realities they’re monitoring.

As AI systems grow more capable – sometimes even recommending or initiating actions – human oversight becomes even more critical. Alex Vourkoutiotis, CTO at ECAM, underscores this importance:

"The human agent as part of that loop is important to verify when you have low risk stratification as an output from AI that needs human attention".

For decisions with significant consequences, such as automated access control or remediation, human accountability remains the safeguard against potentially disastrous errors.

Practical Considerations for Implementing AI Threat Detection

Deploying AI for behavioural threat detection requires a strong technical setup and careful adjustments to minimize false alarms while identifying genuine threats.

Technical Requirements for Implementation

To effectively use AI for threat detection, your system must handle data from multiple sources, including firewalls, endpoint devices, network traffic, and cloud services. This data needs to be normalized and filtered to eliminate duplicates and inconsistencies before analysis. Ensure your infrastructure has enough processing power and storage to support both real-time analysis and the creation of historical baselines.
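Normalisation and de-duplication can be sketched as mapping each source's fields onto one shared schema, converting timestamps to UTC, and then dropping events that become identical. The raw field names below are hypothetical stand-ins for firewall and EDR exports, not any vendor's actual format.

```python
from datetime import datetime, timezone

def normalise(raw: dict, source: str) -> dict:
    """Map one raw event onto a common schema (all raw field names here are hypothetical)."""
    if source == "firewall":
        when = datetime.fromtimestamp(raw["epoch"], tz=timezone.utc)
        user, host, action = raw.get("user", "unknown"), raw["src_ip"], raw["verdict"]
    elif source == "edr":
        when = datetime.fromisoformat(raw["Timestamp"]).astimezone(timezone.utc)
        user, host, action = raw["UserName"], raw["DeviceName"], raw["ActionType"]
    else:
        raise ValueError(f"unsupported source: {source}")
    # Trim and lower-case identifiers so trivially different copies compare equal.
    return {"time": when, "user": user.strip().lower(), "host": host.strip().lower(),
            "action": action.strip().lower(), "source": source}

def dedupe(events):
    """Drop events that are identical after normalisation, keeping the first copy."""
    seen, unique = set(), []
    for e in events:
        key = (e["time"], e["user"], e["host"], e["action"], e["source"])
        if key not in seen:
            seen.add(key)
            unique.append(e)
    return unique
```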

Advanced techniques such as Transformer models for sequential data analysis and graph-based systems for mapping multi-cloud relationships can further strengthen detection. Projections suggest that by 2026, 40% of multicloud environments will incorporate generative AI to enhance security and identity access management.

For seamless operations, AI tools should integrate with existing SIEM, SOAR, and EDR/XDR platforms through their APIs. These integrations provide unified monitoring and let continuous anomaly detection and automated response work together across your security framework.

During the initial rollout, allow the AI system a learning period of at least 7 to 30 days to establish normal behaviour patterns before activating automated blocking; baselines that fully capture monthly and quarterly cycles take longer, typically 60 to 90 days. This staged approach reduces false positives during early implementation. As Lucia Stanham, Product Marketing Manager at CrowdStrike, points out:

"The performance of an AI system is directly tied to the quality and volume of the data it is trained on. Inadequate or biased data can lead to poor threat detection".

These technical steps lay the groundwork for reducing false positives, which is critical for effective threat detection.

Strategies to Reduce False Positives

Once the technical setup is in place, the next challenge is improving detection accuracy. False positives can overwhelm security teams, making it harder to identify real threats. One way to address this is by using session-specific baselines instead of global thresholds. For example, a developer working late at 2:00 a.m. might be routine, but the same activity from an HR manager could indicate a potential issue. Establishing baselines using the mean and standard deviation from the first 10 session interactions can help fine-tune detection.
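The session-specific baseline described above can be sketched as a small class that learns the mean and standard deviation of a session's first 10 interactions and flags later interactions more than three standard deviations away. The monitored value (for example, milliseconds between requests) and the 3.0 threshold are assumptions for illustration.

```python
import statistics

class SessionBaseline:
    """Per-session baseline: learn from the first `warmup` interactions, then z-score later ones."""

    def __init__(self, warmup=10, threshold=3.0):
        self.warmup = warmup
        self.threshold = threshold
        self.samples = []
        self.mean = None
        self.std = None

    def observe(self, value):
        """Return True if value is anomalous for this session; always False during warm-up."""
        if self.mean is None:
            self.samples.append(value)
            if len(self.samples) == self.warmup:
                self.mean = statistics.mean(self.samples)
                # Guard against a zero spread so the z-score stays defined.
                self.std = statistics.pstdev(self.samples) or 1e-9
            return False
        return abs(value - self.mean) / self.std > self.threshold
```

Because each session gets its own baseline, a developer's naturally bursty session and an HR manager's steady one are judged against different norms rather than one global threshold.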

Another approach is weighted scoring, which combines multiple weak signals – like slight increases in latency or minor shifts in behaviour – into a single confidence score. This method aligns with earlier strategies for reducing noise in anomaly detection. Steve Moore, Vice President and Chief Security Strategist at Exabeam, advises:

"Utilize hybrid detection methods: Combine multiple detection methods, such as statistical, machine learning, and rule-based approaches, to enhance accuracy".

Explainable AI tools like SHAP (Shapley Additive Explanations) or LIME are invaluable for understanding why the system flagged certain behaviours. These tools provide transparency, enabling security teams to quickly validate alerts and adjust detection criteria when necessary.

Finally, incorporating feedback from analysts who validate or dismiss anomalies helps the AI model refine its weighted scoring and detection parameters. While real-time behavioural detection adds about 50 milliseconds of latency per request, this is a small price to pay for preventing costly automated attacks.

Conclusion

AI-powered behavioural threat detection is transforming cybersecurity by identifying zero-day exploits, credential abuse, and social engineering tactics. By analysing deviations from normal behaviour, it catches threats that traditional, signature-based tools often miss. This approach is essential now that 79% of detections involve malware-free threats and attackers can begin moving laterally within 48 minutes on average.

To truly tackle modern cyber threats, AI must work hand-in-hand with human expertise. Together, they process events, validate alerts, and refine detection models through ongoing feedback. This collaboration shortens breach lifecycles by about 80 days and saves organizations an average of $1.9 million per breach. As Palo Alto Networks puts it:

"It’s not man versus machine; it’s man plus machine, working in sync to outpace cyber adversaries".

Adaptability is key for AI systems, as both organizational environments and attacker tactics evolve. A prime example is Interac’s fraud detection program, which achieved a 300% increase in identifying fraudulent transactions within its first year by combining AI insights with human oversight. Fahad Shaikh, Leader of Data & Analytics Strategy at Interac, highlights this synergy:

"AI allows us to process and analyze data at a scale that would be impossible manually. We can detect patterns as they emerge, allowing us to act quickly and effectively".

The success of AI in cybersecurity hinges on more than just the technology itself – it requires precise implementation. This includes clean data pipelines, seamless integration with existing security platforms, and a 60–90 day baselining period to establish accurate behaviour profiles. Each step, from creating baselines and monitoring in real time to risk scoring and automated responses, builds toward a comprehensive AI-driven defence strategy. This proactive approach detects threats earlier in the attack chain, minimizing damage before it can escalate.

This shift – from focusing on "known bad" indicators to understanding "known good" behaviour – marks a fundamental change in cybersecurity strategy. Companies like Digital Fractal Technologies Inc are leading the charge, delivering tailored AI solutions that meet the unique challenges of Canada’s cybersecurity landscape.

FAQs

What data does AI use to learn “normal” behaviour?

AI systems learn to recognise "normal" behaviour by studying patterns in data like communication flows, user activities, and system interactions. These patterns create a baseline of expected behaviour. The system then keeps an eye on ongoing activity, comparing it to this baseline. Any deviations or unusual patterns can trigger alerts, helping to pinpoint potential threats or irregularities in real time.

How does AI score risk and cut false positives?

AI improves the accuracy of behavioural threat detection by cutting down false positives through a combination of layered risk scoring and anomaly detection. It examines irregularities in identity, access, and user interactions, assigning risk scores based on potentially suspicious behaviours. Unlike traditional static rule-based systems, behavioural AI constantly analyses user patterns and identifies deviations. This dynamic approach helps it focus on truly abnormal activities, effectively distinguishing real threats from harmless inconsistencies, leading to fewer false alarms and better precision.

What happens when AI detects a high-risk anomaly?

When AI detects a high-risk anomaly, it promptly sends out alerts and kicks off automated actions to tackle potential threats. These actions can range from initiating deeper investigations to implementing containment strategies or other steps aimed at maintaining security and reducing risks.