Designing Chatbots with Emotional Intelligence | Digital Fractal, Edmonton, AB

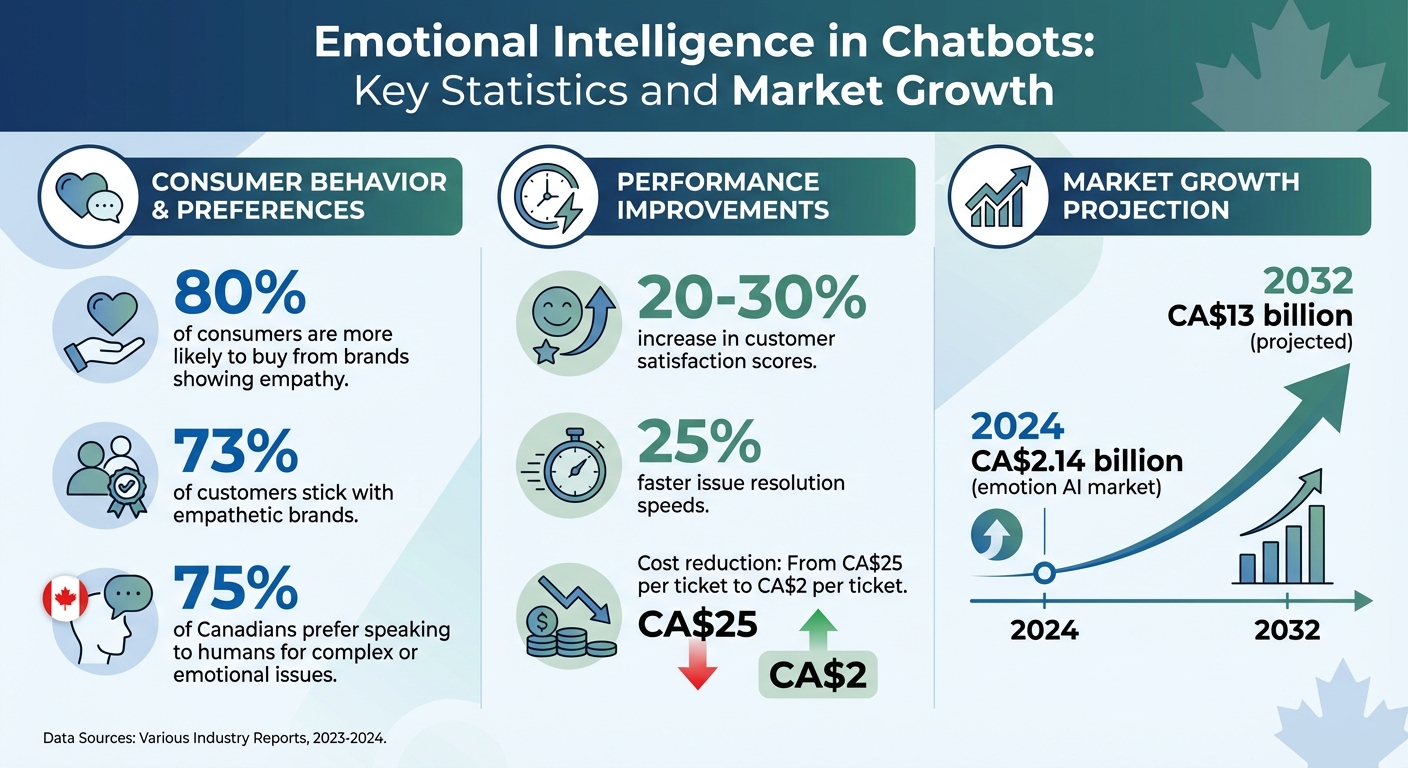

Chatbots often fail to handle emotions effectively, leaving users dissatisfied. For Canadian businesses, this can mean lost revenue and weaker customer loyalty. Studies show that 80% of consumers are more likely to buy from brands showing empathy, while 73% stick with empathetic brands. Yet, 75% of Canadians prefer speaking to humans for complex or emotional issues.

Emotionally aware chatbots solve this by analysing tone, sentiment, and context to respond appropriately. This approach improves customer satisfaction by 20–30% and speeds up issue resolution by 25%. The global emotion AI market is projected to grow from CA$2.14 billion in 2024 to nearly CA$13 billion by 2032, reflecting rising demand. However, building these systems requires advanced sentiment analysis, context tracking, and strict adherence to privacy laws.

This article explores how businesses can create emotionally aware chatbots that acknowledge emotions, handle uncertainty, and comply with Canadian privacy regulations. These tools not only enhance user experience but also reduce costs and improve efficiency.

Emotional Intelligence in Chatbots: Key Statistics and Market Growth

Problems with Standard Chatbots

Missing Emotional Recognition

Most standard chatbots operate on rigid, rule-based keyword matching. This approach ignores the emotional context of a conversation, often leading to responses that feel disconnected or tone-deaf. For instance, a bot might pick up on the word "frustrated" but fail to understand the reason behind the frustration or adjust its tone accordingly.

This results in what experts call "emotional avoidance", where bots respond factually to emotional statements without addressing the underlying sentiment. Imagine a user saying, "I feel empty", and the bot replying, "That’s fine! Let’s plan a goal for tomorrow!" – a response that completely misses the mark. Standard chatbots also struggle with subtleties like sarcasm, humour, or regional nuances, which can dramatically change the meaning of a message.

Another significant issue is poor contextual memory. These systems often fail to track emotional patterns across interactions, making it impossible to recognize when a user’s frustration is building. This lack of emotional insight not only frustrates users but also damages trust and overall satisfaction.

Effects on User Satisfaction and Retention

The inability to recognize and respond to emotions has a direct impact on user trust, satisfaction, and loyalty. When chatbots fail to acknowledge emotions appropriately, they risk alienating users. This frustration is further compounded when bots can’t identify critical moments that require escalation to a human agent, especially during crises.

On the flip side, emotionally intelligent interactions have been shown to boost customer satisfaction scores by 20–30% in industries focused on customer experience. As the WTMF Team aptly puts it, "Most chatbots aren’t heartless, they just lack emotion". For users, poorly handled interactions can lead to reduced loyalty, lower retention rates, and a tarnished brand reputation – consequences that Canadian businesses, competing in an increasingly digital marketplace, can ill afford. Before investing, businesses should evaluate their digital transformation readiness to ensure their infrastructure can support advanced AI features.

Core Elements of Emotionally Intelligent Chatbots

Creating an emotionally intelligent chatbot involves combining sentiment analysis, adaptive responses, and context awareness. These elements work together to make interactions more meaningful and human-like, starting with the ability to interpret emotions.

Sentiment Analysis and Emotion Detection

The cornerstone of emotional intelligence in chatbots is their ability to understand what users are feeling. Advanced systems go beyond simple keyword detection, incorporating text sentiment analysis, vocal tone recognition (like pitch and pace), and even facial expressions to grasp emotional nuances.

The process includes analysing text for sentiment, tracking speech patterns, and monitoring emotional changes over time. Custom Transformer models, capable of scoring multiple emotional states simultaneously, can identify dozens of distinct emotions in real time. These models rely on structured inputs that pair emotion labels with user messages and contextual details.
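As a rough illustration of such a structured input, the sketch below pairs a user message with emotion scores and earlier conversation turns. The class name `EmotionExample`, its fields, and the 0.5 threshold are assumptions made for this example, not a format used by any particular product.

```python
from dataclasses import dataclass, field


@dataclass
class EmotionExample:
    """One record: a user message, its emotion scores, and earlier turns (hypothetical schema)."""
    message: str
    emotions: dict[str, float]  # label -> score in [0, 1]; several can be active at once
    context: list[str] = field(default_factory=list)  # earlier turns, oldest first

    def active_labels(self, threshold: float = 0.5) -> list[str]:
        """Return labels scoring at or above the threshold, strongest first."""
        hits = [(label, score) for label, score in self.emotions.items() if score >= threshold]
        return [label for label, _ in sorted(hits, key=lambda pair: pair[1], reverse=True)]


example = EmotionExample(
    message="I've been charged twice and nobody is answering my emails.",
    emotions={"frustration": 0.85, "anxiety": 0.6, "joy": 0.05},
    context=["Hi, I need help with my invoice."],
)
print(example.active_labels())  # ['frustration', 'anxiety']
```

Storing scores rather than a single label lets one message carry more than one emotion, which is the multi-label behaviour described above.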

For example, Woebot Health uses sentiment analysis and context tracking to deliver tailored exercises for managing stress and anxiety, focusing on identifying emotional distress. Similarly, Replika, an AI companion, employs generative models trained on empathetic language to help users feel understood. Public resources such as the EmpatheticDialogues dataset, which includes roughly 25,000 human conversations grounded in 32 emotion labels, are commonly used to train and evaluate this kind of empathetic response generation.

However, achieving real-time emotional understanding requires capable hardware. For self-hosted models, high-performance GPUs with 24 GB or more of VRAM are typically recommended to keep response times under one second. For Canadian businesses exploring this technology, implementing memory systems to track emotional patterns – such as shifts from anxiety to frustration – can enhance user experience across multiple interactions.

Adaptive Response Generation

Adaptive response generation ensures the chatbot’s tone and language align with the user’s emotional state. This approach shifts the focus from simply retrieving information to building a meaningful connection, addressing a common frustration with traditional chatbots. Instead of offering generic replies, these systems validate emotions first before addressing the issue.

Microsoft XiaoIce is a prime example. Its "empathetic computing module" analyses the user’s emotions and adjusts the tone of the conversation, fostering long-term engagement by making users feel heard and supported. As AI researcher Muhammad Hasnain explains:

"Empathy can be engineered – not as emotion, but as design".

Moreover, advanced systems can escalate interactions to human agents when frustration levels surpass predefined thresholds or when specific safety keywords arise. This approach is increasingly vital, as 73% of customers remain loyal to brands that demonstrate empathy in their support.
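The validate-first pattern described in this section can be sketched in a few lines. The templates, the `compose_reply` function, and the 0.4 intensity cut-off are all hypothetical; a production system would more likely generate the acknowledgement with a language model than pick it from a fixed table.

```python
# Hypothetical acknowledgement templates keyed by detected emotion.
ACKNOWLEDGEMENTS = {
    "frustration": "I understand this is frustrating.",
    "anxiety": "I can see why this is worrying.",
    "sadness": "I'm sorry you're dealing with this.",
}


def compose_reply(emotion: str, intensity: float, solution: str) -> str:
    """Put an acknowledgement before the solution when the emotion is strong enough."""
    acknowledgement = ACKNOWLEDGEMENTS.get(emotion)
    if acknowledgement is None or intensity < 0.4:
        return solution  # neutral or mild emotion: answer directly
    return f"{acknowledgement} {solution}"


print(compose_reply("frustration", 0.8, "Let's get that duplicate charge refunded."))
```

The point of the sketch is the ordering: the emotional acknowledgement comes first, and the factual answer follows it rather than replacing it.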

Context Awareness and Personalization

Without context, even the most advanced sentiment analysis can fall short. Context awareness prevents chatbots from offering generic or repetitive responses, which can quickly disengage users. By understanding the reasons behind user messages, chatbots can shift from task-oriented interactions to meaningful conversations.

This capability builds on sentiment detection and adaptive responses, with personalization adding the final touch. For instance, memory networks can track long-term emotional trends, recalling that a user who was anxious last week now appears "flat". This level of emotional memory makes the chatbot feel more like a thoughtful assistant and less like a cold tool.
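A very small version of this kind of emotional memory might look like the sketch below. It is an assumption-laden stand-in for a memory network: the `EmotionMemory` class simply keeps the latest dominant emotion labels per user and reports when the most recent one differs from the one before it.

```python
from collections import deque


class EmotionMemory:
    """Keeps the most recent dominant emotions for one user (illustrative only)."""

    def __init__(self, max_entries: int = 20):
        self._history = deque(maxlen=max_entries)  # oldest entries drop off automatically

    def record(self, emotion: str) -> None:
        self._history.append(emotion)

    def shift(self):
        """Return (previous, current) if the latest emotion differs from the one before it."""
        if len(self._history) < 2:
            return None
        previous, current = self._history[-2], self._history[-1]
        return (previous, current) if previous != current else None

    def streak(self, emotion: str) -> int:
        """Count how many of the most recent entries in a row match the given emotion."""
        count = 0
        for past in reversed(self._history):
            if past != emotion:
                break
            count += 1
        return count


memory = EmotionMemory()
for label in ["anxiety", "anxiety", "flat"]:
    memory.record(label)
print(memory.shift())  # ('anxiety', 'flat')
```

A `streak` of several "frustration" entries in a row is one simple signal that frustration is building across interactions.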

Personalization also involves situational awareness – knowing when automation suffices and when human intervention is better suited. Since nearly 40% of social media interactions are emotionally charged rather than purely informational, chatbots must be designed to recognize these moments and respond appropriately. Using RAG (Retrieval-Augmented Generation) to pull live, verified information ensures responses remain accurate and relevant.

The benefits are clear. Emotionally aware interactions can boost customer satisfaction scores by 20% to 30% in service-focused industries. Additionally, businesses using AI-driven emotional analytics can resolve issues up to 25% faster. As the WTMF Team aptly puts it:

"Teaching a chatbot conversational AI to recognise emotion isn’t about making machines feel; it’s about helping people feel seen".

Creating Emotionally Responsive Conversation Flows

Building on tools like sentiment analysis, context awareness, and adaptive responses, crafting responsive conversation flows can transform chatbot interactions. To achieve this, chatbots must simulate natural, empathetic dialogue. Their ability to interpret emotional cues and handle uncertainty often determines whether users leave feeling frustrated or loyal.

Adding Empathy to Conversations

Empathy in chatbot design isn’t about making machines "feel" emotions – it’s about ensuring users feel understood. A critical first step is acknowledging emotions before jumping to problem-solving. For instance, if a user expresses frustration, a chatbot should validate that feeling with statements like, "I understand this is frustrating," before offering solutions.

Another effective technique is progressive disclosure. Instead of bombarding users with a series of questions, chatbots can gather information gradually, mimicking the flow of a natural conversation. Features like typing indicators and brief pauses between responses can also make interactions feel less mechanical.
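Progressive disclosure can be sketched as asking for one missing detail at a time instead of presenting a full form. The field names and question wording below are hypothetical.

```python
# Hypothetical fields a support bot needs, in the order it should ask for them.
QUESTIONS = {
    "order_id": "Could you share your order number?",
    "issue": "Thanks. What went wrong with the order?",
    "contact": "Got it. What's the best email to reach you at?",
}


def next_question(collected: dict):
    """Return the single next question to ask, or None when nothing is missing."""
    for field_name, question in QUESTIONS.items():
        if not collected.get(field_name):
            return question
    return None


print(next_question({"order_id": "A-1001"}))  # Thanks. What went wrong with the order?
```

Each turn, the bot calls `next_question` with what it has gathered so far, so the user only ever sees one question at a time.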

Consistency in the chatbot’s personality plays a significant role in building trust. Whether the tone is professional and calm or friendly and supportive, keeping it steady helps users feel comfortable. As the WTMF Team explains:

"When you say ‘I’m tired of trying,’ the bot doesn’t say ‘Take a break.’ It says, ‘That sounds really hard. Want to talk more?’"

These empathetic approaches set the stage for managing more complex scenarios, where uncertainty or the need for escalation may arise.

Handling Uncertainty and Escalations

Even the best-designed chatbots will encounter situations they can’t fully resolve. How they handle these moments can make or break the user experience. A clarification cascade – offering a set of likely options, then narrowing choices – can help guide users before escalating to human support.
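One way to picture a clarification cascade is as a decision over ranked intent guesses. The sketch below assumes an upstream classifier that returns (intent, confidence) pairs; the function name, the 0.2 confidence floor, and the three-option limit are illustrative choices.

```python
def clarification_cascade(candidates, max_options=3, min_confidence=0.2):
    """Decide the next step from (intent, confidence) pairs.

    Returns ("answer", intent), ("clarify", [intents]) or ("escalate", None).
    """
    ranked = sorted(candidates, key=lambda pair: pair[1], reverse=True)
    plausible = [intent for intent, confidence in ranked if confidence >= min_confidence]
    if not plausible:
        return ("escalate", None)  # nothing plausible: hand off to a person
    if len(plausible) == 1:
        return ("answer", plausible[0])  # one clear intent: no need to ask
    return ("clarify", plausible[:max_options])  # offer the likeliest options


guesses = [("refund", 0.4), ("billing", 0.35), ("shipping", 0.25), ("other", 0.05)]
print(clarification_cascade(guesses))  # ('clarify', ['refund', 'billing', 'shipping'])
```

After the user picks an option, the same function can be called again with the narrowed candidates, so each round either resolves to a single intent or escalates.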

Graceful degradation is another critical strategy. This involves creating a fallback system where the chatbot transitions from machine-learning responses to simpler rule-based responses, and finally to human assistance if needed. This ensures users are never left without help. For high-stakes actions, like processing refunds or deleting accounts, adding a confirmation step helps verify intent and avoid mistakes.
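The fallback chain and the confirmation step can be sketched as follows. Every handler here is a placeholder: `ml_handler` simulates a model outage, `rule_handler` holds one example rule, and the `HIGH_STAKES` set is a hypothetical list of actions.

```python
def ml_handler(message: str):
    """Stand-in for a model call; here it simulates an outage."""
    raise TimeoutError("model unavailable")


def rule_handler(message: str):
    """Simple keyword rules used as the second tier."""
    if "opening hours" in message.lower():
        return "We're open 9 a.m. to 5 p.m., Monday to Friday."
    return None  # no rule matched


def human_handoff(message: str):
    """Final tier: always returns something, so the user is never left without help."""
    return "I'm connecting you with a team member who can help."


def respond(message: str, handlers=(ml_handler, rule_handler, human_handoff)):
    """Try each tier in order, falling through on errors or empty answers."""
    for handler in handlers:
        try:
            reply = handler(message)
        except Exception:
            continue  # this tier failed; degrade to the next one
        if reply:
            return reply
    return "Sorry, something went wrong. Please contact support."


HIGH_STAKES = {"refund", "delete_account"}  # hypothetical action names


def needs_confirmation(action: str) -> bool:
    """High-stakes actions require an explicit yes from the user before running."""
    return action in HIGH_STAKES


print(respond("What are your opening hours?"))
```

Because the human handoff tier always returns a reply, the final apology line is only reached if a caller passes in a custom handler list without one.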

Smart escalation triggers are also vital. A chatbot should seamlessly hand off to a human agent when it detects high frustration levels, crisis-related keywords (e.g., health or finance concerns), or repeated failed attempts to resolve an issue. As Dheeraj Kumar from Ramam Tech highlights:

"To build an emotionally intelligent chatbot is not to replace humans – it is to empower them".

The emotion AI industry is expected to grow from CA$2.14 billion in 2024 to nearly CA$13 billion by 2032, reflecting the increasing demand for responsive, empathetic chatbots.
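The escalation triggers described in this section can be combined into a single check. The keyword list, the 0.7 frustration limit, and the two-failure limit below are assumptions for illustration; real values would need tuning with support staff and domain experts.

```python
CRISIS_KEYWORDS = ("emergency", "fraud", "medical")  # hypothetical; tune with domain experts


def should_escalate(message: str, frustration: float, failed_attempts: int,
                    frustration_limit: float = 0.7, max_failures: int = 2):
    """Return the reason for a human handoff, or None to keep the bot in the loop."""
    text = message.lower()
    if any(keyword in text for keyword in CRISIS_KEYWORDS):
        return "crisis_keyword"
    if frustration >= frustration_limit:
        return "high_frustration"
    if failed_attempts >= max_failures:
        return "repeated_failures"
    return None


print(should_escalate("This is the third time I'm asking!", frustration=0.9, failed_attempts=1))
# high_frustration
```

Returning the reason, rather than a plain true or false, lets the handoff pass context to the human agent so the user does not have to repeat themselves.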

Privacy and Ethics in Emotionally Intelligent Chatbots

When it comes to emotion-detecting chatbots, protecting sensitive emotional data is a top priority. These systems don’t just process typical user interactions – they delve into deeply personal emotions like frustration, sadness, or anxiety. Under Canadian privacy law, even if users don’t explicitly provide emotional data, any emotional state inferred by AI about an identifiable individual is considered personal information. This classification means developers and organisations must adhere to strict legal standards to handle such sensitive data responsibly.

The stakes are especially high in areas like health and mental health, where emotional data is often particularly sensitive. Canadian regulators have drawn clear ethical boundaries, identifying certain uses of AI as unacceptable. For example, the Office of the Privacy Commissioner of Canada warns:

"The use of conversational bots to deliberately nudge individuals into divulging personal information (and, in particular, sensitive personal information) that they would not have otherwise [is a potential no-go zone]".

Additionally, organisations need to steer clear of profiling practices that could result in unfair or discriminatory outcomes.

User Data Privacy and Security

Developers must ensure they have a clear legal basis – either through express or implied consent – for collecting emotional data. This consent must be meaningful, not hidden within lengthy terms of service or obtained through deceptive practices. In highly sensitive contexts like healthcare, even explicit consent might not be enough, often requiring additional oversight and ethical reviews.

A key principle in handling emotional data is minimisation. Developers should rely on anonymised or de-identified data wherever possible and implement strict data retention policies to ensure sensitive information is either destroyed or anonymised promptly. Quebec’s Law 25 adds another layer, requiring private organisations to notify individuals when decisions are made solely by automated systems and to explain the factors influencing those decisions.
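A minimal sketch of minimisation and retention might store only a salted hash of the user identifier, the emotion label, and an expiry time. The 30-day window and the function names are hypothetical and are not drawn from any legal requirement. Salted hashing is also only pseudonymisation, so records like these may still count as personal information.

```python
import hashlib
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=30)  # hypothetical retention window, not a legal requirement


def minimise(user_id: str, emotion: str, salt: str, now: datetime) -> dict:
    """Keep only a salted hash of the user ID, the emotion label, and an expiry time."""
    digest = hashlib.sha256((salt + user_id).encode("utf-8")).hexdigest()
    return {"user": digest, "emotion": emotion, "expires": now + RETENTION}


def purge_expired(records: list, now: datetime) -> list:
    """Drop records whose retention window has passed."""
    return [record for record in records if record["expires"] > now]


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
record = minimise("alice@example.com", "frustration", salt="demo-salt", now=now)
print(len(purge_expired([record], now + timedelta(days=31))))  # 0
```

Note that the raw message text is never stored in this sketch; only the inferred label survives, and only until the expiry time.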

Given the sensitivity of emotional data, robust security measures are essential. Chatbots face unique threats, such as prompt injection (tricking the system with crafted text), model inversion (extracting training data), and jailbreaking (manipulating the system to bypass safety rules). Privacy Impact Assessments (PIAs) and Algorithmic Impact Assessments (AIAs) can help identify these risks early. Additionally, adversarial testing, like "red team" exercises, can uncover vulnerabilities, such as the chatbot producing harmful content or exposing internal configurations. These practices are critical for maintaining transparency and earning user trust.

Transparency and Trust

Users have the right to know when they’re interacting with AI and what emotional data is being analysed. Organisations must clearly disclose what data is collected, how it’s used, and why it’s needed throughout the chatbot’s lifecycle. Any significant system outputs affecting users should also be clearly identified as AI-generated.

Transparency also involves acknowledging limitations. Emotionally intelligent chatbots can misinterpret emotions or generate inaccurate responses, and users should be informed of these risks. As the Office of the Privacy Commissioner of Canada states:

"Accountability for decisions rests with the organization, and not with any kind of automated system used to support the decision-making process".

To uphold this accountability, organisations must provide users with mechanisms to access, correct, or challenge decisions made by AI based on their emotional data. For instance, the mental health app Woebot uses Cognitive Behavioural Therapy (CBT) techniques to support users while adhering to ethical guidelines, ensuring it doesn’t replace human care and maintains transparency. Similarly, as of March 2026, Replika allows users to delete chat histories and adjust privacy settings, limiting data used for training.

Protecting vulnerable groups is another essential aspect of building trust. For example, children may not fully grasp the long-term consequences of data collection, so stronger privacy protections are necessary. Developers must also examine training datasets to avoid perpetuating biases related to race, gender, or disability. With 80% of consumers more likely to support brands that show empathy and understanding, prioritising ethical data practices not only ensures legal compliance but also strengthens user relationships. By safeguarding privacy, emotionally intelligent chatbots can create the trust and connection they aim to foster with their users.

Conclusion

Emotionally intelligent chatbots are reshaping how businesses engage with their customers. By understanding tone, sentiment, and emotional cues, these advanced systems create trust and build stronger connections – something traditional chatbots struggle to achieve. In fact, 73% of customers remain loyal to brands that offer empathy-driven support, and customer-centric industries report 20–30% higher satisfaction scores when emotional awareness is part of the interaction. With the emotion AI market expected to grow from CA$2.14 billion in 2024 to nearly CA$13 billion by 2032, companies adopting this technology now are setting themselves up for long-term success.

The benefits go beyond customer loyalty. Businesses leveraging emotionally intelligent chatbots see significant operational gains. For instance, resolution speeds have improved by 25%, and ticket handling costs have dropped from around CA$25 per ticket to just CA$2. These bots work around the clock, managing thousands of conversations simultaneously without additional staffing. When complex or sensitive issues arise, they can seamlessly escalate to human agents. A client testimonial highlights this value:

"Digital Fractal’s team has been great to work with, ensuring quality. The team’s support after the development stage is unmatched; they are quick to react at such a critical time." – James M, CEO

Digital Fractal Technologies Inc takes these capabilities to the next level. With over two decades of experience, their team includes experts with doctorates in AI and big data, employing advanced techniques like Retrieval-Augmented Generation (RAG) and fine-tuning models to deliver chatbots tailored to specific business needs. Whether you’re in construction, energy, field services, or the public sector, Digital Fractal offers comprehensive support – from AI Readiness Audits to 24/7 post-deployment maintenance – often achieving results in as little as 90 days.

A well-thought-out strategy is key. Starting with an AI Readiness Audit helps identify workflows with the highest impact. By integrating AI into existing systems, businesses can improve customer satisfaction by up to 30% and reduce support costs by as much as 25%. These results demonstrate that emotionally intelligent chatbots are more than just a tech upgrade – they’re a strategic investment in building stronger customer relationships and achieving operational excellence.

FAQs

How can a chatbot detect emotion accurately?

Chatbots can pick up on emotions by using a mix of advanced natural language processing (NLP) and affective computing. Tools like transformer-based models – think GPT or BERT – are designed to analyse linguistic cues, tone, and the overall context of a conversation. This allows them to interpret emotions almost instantly.

Some systems take it a step further with multi-modal analysis. This means they can process additional inputs like vocal tone or even facial expressions (when available). By combining these different types of data, the chatbot creates a more detailed emotional profile. The result? Responses that feel more natural and empathetic, which can boost user engagement and leave people feeling more satisfied with their experience.

When should a chatbot escalate to a human agent?

When a chatbot faces situations it can’t handle or encounters specific triggers, it should escalate to a human agent. These triggers often include dealing with sensitive or complex issues like billing disputes or security matters, low AI confidence levels (e.g., below 40%), or when users show frustration or directly ask for human help. Smooth escalation involves quick handoffs and maintaining the conversation’s context, ensuring the user experience remains seamless and positive.

How do Canadian privacy laws apply to inferred emotions?

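Canadian privacy laws, such as PIPEDA (Personal Information Protection and Electronic Documents Act), don’t yet include provisions written specifically for inferred emotions or emotion data, including where children are concerned. However, as noted earlier, an emotional state that AI infers about an identifiable individual is still treated as personal information, so the general rules on consent, minimisation, and safeguards continue to apply. This gap in emotion-specific rules highlights the importance of managing emotional AI with care to tackle privacy concerns and reduce potential risks.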